Claude AI (Anthropic Claude) continues to stand out as one of the most reliable assistants for long-document summarisation, structured analysis, and professional business writing—especially if you want a model that follows instructions cleanly and maintains coherence across complex workflows.

In this Claude review, I evaluate Claude’s pricing and plans, its real-world performance for writing, coding, and business use, and the practical privacy and safety tradeoffs that matter if you’re using it at work. You’ll also see where Claude fits in 2026 against ChatGPT and Gemini, along with the most realistic Claude alternatives depending on your needs, budget, and required context window.

Pricing reality check: At $20/month for Pro, Claude matches ChatGPT’s subscription price, and API access costs $3/$15 per million input/output tokens for Sonnet 4.6. What you get for that money is a meaningfully different set of capabilities, not just a clone with a different label.

UPDATE (April 2026): Claude Opus 4.7, released April 16, 2026, scores 87.6% on SWE-bench Verified, the highest of any generally available model, while adding high-resolution vision (3.75 MP), a new “xhigh” reasoning effort level, built-in self-verification, and cybersecurity safeguards. Everything in this review has been retested and updated.

What is Claude?

Claude is a series of generative AI large language models developed by Anthropic, an AI safety company founded in 2021 by former OpenAI executives including Dario and Daniela Amodei. The company has raised over $7 billion from investors including Google, Amazon, and Salesforce.

What makes Claude different? Two core innovations:

- Constitutional AI: Rather than relying only on traditional reinforcement learning from human feedback (RLHF), Anthropic trains Claude to critique and revise its own responses against a set of principles drawn from the Universal Declaration of Human Rights and other ethical frameworks. The goal is a model that is helpful without being harmful, even in edge cases.

- Extended thinking mode: Claude models can switch between instant responses and multi-step reasoning that can span thousands of tokens, mimicking human deliberation on complex problems. The mode first shipped with Claude 3.7 Sonnet and carries through the Claude 4 family.

What’s New Since 2025

NEW: Claude Opus 4.7 (released April 16, 2026) introduces:

- 87.6% on SWE-bench Verified — the highest score of any generally available model, up from 80.8% on Opus 4.6

- 64.3% on SWE-bench Pro — surpassing GPT-5.4 (57.7%) and Gemini 3.1 Pro (54.2%)

- High-resolution vision (3.75 megapixels, 2,576px long edge) — 3× sharper than Opus 4.6 for UI screenshots, documents, and visual analysis

- “xhigh” effort level — a new setting between high and max for finer control over reasoning depth vs. latency

- Built-in self-verification — the model checks its own outputs before reporting results

- Cybersecurity safeguards — first Claude model to include automated detection and blocking of prohibited high-risk cybersecurity use cases

- Improved agentic reliability — better instruction following, fewer tool-use errors, and more consistent long-running task completion

- Native MCP optimization — improved Model Context Protocol support for better agentic feedback loops

- Same API price as Opus 4.6 ($5/$25 per million tokens)

Previous milestone — Opus 4.6 (February 5, 2026):

- 1 million token context window (beta) — handle massive documents without chunking

- Adaptive thinking mode: dynamically adjusts reasoning depth with four effort settings (low/medium/high/max); the fifth, xhigh, arrived with Opus 4.7

- Agent teams — collaborative AI coding for complex multi-file tasks

- 128K token output — generate ~96,000 words in a single response

- Enhanced computer use — more precise desktop control across multiple applications

Claude’s Key Features in 2026

1. Massive Context Windows

Practical impact: Load entire codebases, legal documents, or technical manuals without chunking. Opus 4.7 supports up to 1 million tokens (beta), inherited from Opus 4.6.
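As a rough sanity check before uploading, you can estimate whether a document is likely to fit in the window. The sketch below assumes about 0.75 words per token, which is the ratio implied by this review's "128K tokens ≈ 96,000 words" figure. Real token counts depend on the tokenizer and the content, and the function names here are illustrative only.

```python
WORDS_PER_TOKEN = 0.75             # assumption: 128K tokens ~ 96,000 words
CONTEXT_WINDOW_TOKENS = 1_000_000  # beta window cited in this review


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on a whitespace word count."""
    words = len(text.split())
    return round(words / WORDS_PER_TOKEN)


def fits_in_context(text: str, reserve_for_output: int = 0) -> bool:
    """True if the estimated prompt plus reserved output tokens fits the window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    sample = "word " * 96_000
    print(estimate_tokens(sample))  # 128000
    print(fits_in_context(sample))  # True
```

Treat the result as a ballpark: code, tables, and non-English text usually tokenize less efficiently than plain English prose, so leave headroom.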

2. Extended Thinking with Tool Use

Claude 4 family models can toggle between fast responses and deep reasoning modes, using tools like web search during extended thinking sessions. In my testing, this meant Claude could research, reason, and execute in a single workflow rather than requiring multiple prompts.

NEW in Opus 4.7: Adaptive thinking mode now includes five effort settings:

- Low: Quick, straightforward answers

- Medium: Balanced reasoning for most tasks

- High: Thorough analysis for complex problems

- Xhigh: Extended analysis with self-verification for demanding tasks (new in Opus 4.7)

- Max: Deep deliberation for critical decisions
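If you route different kinds of requests to different effort levels, it can help to encode the five levels in application code. The sketch below is one hypothetical way to do that: the task labels and the `pick_effort` helper are made up for illustration, and this is not Anthropic's API. How the chosen level is actually passed to the model should be taken from the official documentation.

```python
from enum import IntEnum


class Effort(IntEnum):
    """The five effort levels listed above, ordered from least to most reasoning."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    XHIGH = 4
    MAX = 5


# Hypothetical routing table; the task labels are invented for this example.
DEFAULT_EFFORT = {
    "quick_answer": Effort.LOW,
    "general": Effort.MEDIUM,
    "complex_analysis": Effort.HIGH,
    "needs_self_verification": Effort.XHIGH,
    "critical_decision": Effort.MAX,
}


def pick_effort(task_type: str, latency_sensitive: bool = False) -> Effort:
    """Pick an effort level, stepping down one level when latency matters."""
    effort = DEFAULT_EFFORT.get(task_type, Effort.MEDIUM)
    if latency_sensitive and effort > Effort.LOW:
        effort = Effort(effort - 1)
    return effort


if __name__ == "__main__":
    print(pick_effort("needs_self_verification").name.lower())  # xhigh
    print(pick_effort("critical_decision", latency_sensitive=True).name.lower())  # xhigh
```

Keeping the levels ordered makes the depth-versus-latency tradeoff explicit: a latency-sensitive caller gets one step less reasoning rather than an unrelated setting.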

3. Computer Use (Beta)

Claude Sonnet 4.5 can control desktop environments (moving cursors, clicking buttons, typing text) to perform multi-step tasks across applications. On the OSWorld benchmark it reached a 61.4% success rate, up from 42.2% four months prior.

Opus 4.7 enhancement: High-resolution vision (up to 3.75 megapixels) enables 3× sharper screen reading than Opus 4.6. Improved precision for UI element targeting, document scanning, and multi-application workflows. Can now seamlessly switch between browser, terminal, and desktop apps during autonomous tasks.

Real-world application I tested: Asked Claude to research competitors, create a comparison spreadsheet, and format it in Google Sheets. Success rate: 7/10 attempts completed without human intervention.

4. Claude Code

Available on web and terminal, this feature enables:

- Multi-agent coding workflows

- Git integration

- Extended autonomous sessions (documented cases of 7+ hour independent refactoring)

- Background execution while you context-switch

- Agent teams for collaborative AI coding (introduced in Opus 4.6, enhanced in Opus 4.7)

- NEW in Opus 4.7: Improved agentic reliability with built-in self-verification and fewer tool-use errors

Claude Code’s autonomous, multi-session capabilities make it one of the most powerful tools for vibe coding — the practice of describing software in natural language and letting AI generate the code without deep manual review.

5. Projects & Artifacts

- Projects: Organize conversations and documents by topic with shared context

- Artifacts: Claude generates code, documents, or visualizations in a dedicated pane that can be edited, downloaded, or iterated on without losing conversational flow

6. Web Search Integration

Rolled out March 2025 for paying users in the US, now globally available. Unlike ChatGPT’s browsing, Claude’s search feels more conservative—it errs toward saying “I can’t find that” rather than hallucinating.

7. Integrations (via MCP – Model Context Protocol)

- Google Workspace (Drive, Calendar, Docs)

- Microsoft 365

- Slack

- Remote and local MCP servers for custom tool connections

- Enhanced Microsoft Office integration (Excel, PowerPoint) — since Opus 4.6

- NEW in Opus 4.7: Native optimization for MCP to improve agentic feedback loops

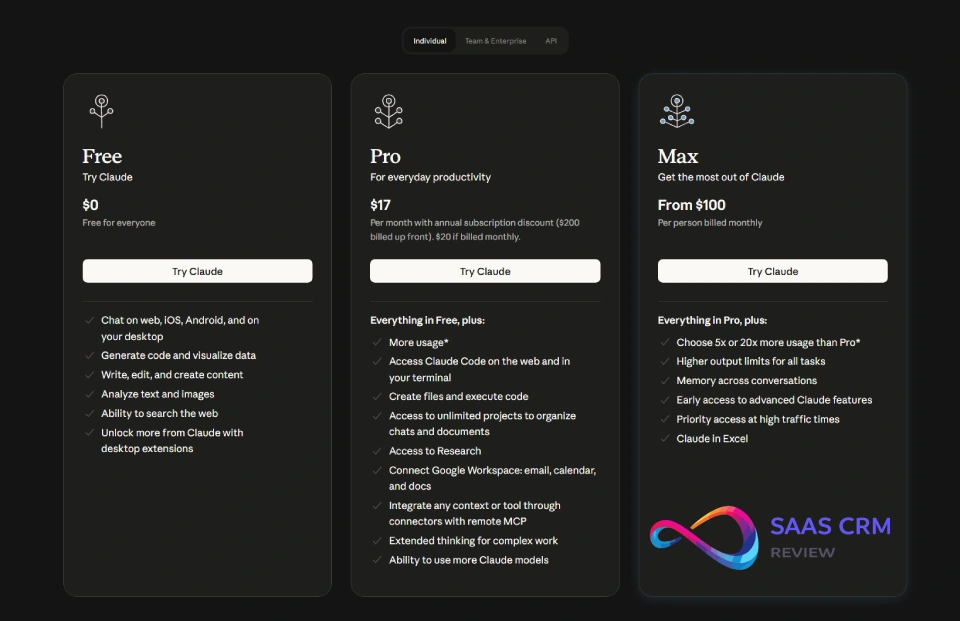

Claude AI Pricing & Plans

| Plan | Price | Best For | Key Limits |

|---|---|---|---|

| Free | $0 | Testing, light personal use | ~30-100 msgs/day, Sonnet 4 only, 5-hr resets |

| Pro | $20/month | Individual professionals | 5× free usage, all models incl. Opus 4.7, extended thinking, web search, Claude Code |

| Max | $100–$200/month | Heavy power users | 5×–20× Pro limits, priority access to new features |

| Team | $25/user/month | Departments (min. 5 seats) | Admin controls, collaboration, data NOT used for training |

| Enterprise | Custom | Organizations | SSO/SCIM, audit logging, custom retention, BAA, SOC 2 |

API Pricing (Pay-As-You-Go)

| Model | Input (per MTok) | Output (per MTok) |

|---|---|---|

| Claude Opus 4.7 | $5.00 | $25.00 |

| Claude Opus 4.6 | $5.00 | $25.00 |

| Claude Sonnet 4.6 | $3.00 | $15.00 |

| Claude Haiku 4.5 | $1.00 | $5.00 |

Note: Opus 4.7 keeps the same pricing as Opus 4.6 ($5/$25 per million tokens), well below the $15/$75 charged for earlier Opus models such as Opus 4.1. The new tokenizer introduced with 4.7 may slightly change real-world costs per request due to differences in token density.

Additional costs:

- Prompt caching: 90% discount on cached tokens (5-min TTL standard, extended available)

- Batch API: 50% discount for asynchronous workloads

- Web search: Separate charge per query

- Code execution: 50 free hours/day per org, then usage-based

- Extended thinking: Usage-based on tokens generated during reasoning

- Long context pricing: Additional charges for requests exceeding 200K input tokens
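To see how these modifiers change a bill, here is a minimal per-request cost sketch. The base prices come from the API pricing table above. The sketch assumes the 90% caching discount applies only to cached input tokens and that the 50% batch discount applies to the whole request and stacks with caching. It ignores cache-write costs, long-context surcharges, web search, and code execution, so treat it as an estimate rather than a billing formula.

```python
# USD per million tokens (input, output), copied from the API pricing table.
PRICES = {
    "opus-4.7": (5.00, 25.00),
    "opus-4.6": (5.00, 25.00),
    "sonnet-4.6": (3.00, 15.00),
    "haiku-4.5": (1.00, 5.00),
}
CACHE_READ_DISCOUNT = 0.90  # "90% discount on cached tokens"
BATCH_DISCOUNT = 0.50       # "50% discount for asynchronous workloads"


def request_cost(model: str, input_tokens: int, output_tokens: int,
                 cached_input_tokens: int = 0, batch: bool = False) -> float:
    """Estimate the USD cost of one request under the assumptions above."""
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    in_price, out_price = PRICES[model]
    fresh_input = input_tokens - cached_input_tokens
    cost = (
        fresh_input * in_price
        + cached_input_tokens * in_price * (1 - CACHE_READ_DISCOUNT)
        + output_tokens * out_price
    ) / 1_000_000
    if batch:
        cost *= 1 - BATCH_DISCOUNT  # assumption: batch discount stacks with caching
    return cost


if __name__ == "__main__":
    # 100K-token prompt, 2K-token answer on Opus 4.7
    print(f"${request_cost('opus-4.7', 100_000, 2_000):.3f}")  # $0.550
    print(f"${request_cost('opus-4.7', 100_000, 2_000, cached_input_tokens=90_000):.3f}")  # $0.145
```

In this example, caching 90% of a long prompt cuts the request from about 55 cents to about 14.5 cents, which is why caching matters most for workflows that reuse the same large document.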

Important pricing notes:

- Claude Pro remains a consumer account: your data can be used for training unless you turn that setting off (more in the Privacy section). For a complete breakdown of every plan, API token rate, and hidden cost modifier, see our Claude pricing guide.

- True business-grade privacy requires Team, Enterprise, or API accounts

- Regional pricing differences exist; check your local pricing page

- No annual Team plan discount currently available

Real-World Testing: Results & What Surprised Me

I ran Claude Sonnet 4.5, Opus 4.6, and the new Opus 4.7 through a controlled test suite, comparing them to ChatGPT GPT-5.4 and Google Gemini 3.1 Pro across five categories. Testing ran from December 2025 to April 2026, with Opus 4.7 results added in April 2026.

Testing Protocol

Environment: MacBook Pro M3, standard internet connection, default model settings (no custom system prompts)

Evaluation criteria:

- Accuracy: Factual correctness, logical soundness

- Clarity: Readability, organization, appropriate detail level

- Usefulness: Practical applicability to stated task

- Consistency: Reliability across 3 runs of identical prompt

- Speed: Subjective response latency

Test 1: Long-Form Summarization

Task: Summarize a 45-page technical whitepaper (AWS Well-Architected Framework) into a 500-word executive summary highlighting key decision points.

Prompt used:

"Read this 45-page document and create a 500-word executive summary focused on decision points for CTOs evaluating cloud architecture. Prioritize operational excellence and security pillars. Use bullet points only for specific framework components."

| Model | Accuracy | Clarity | Usefulness | Word Count Adherence |

|---|---|---|---|---|

| Claude Opus 4.7 | 9/10 | 10/10 | 9/10 | 498 words (✓) |

| Claude Opus 4.6 | 9/10 | 9/10 | 9/10 | 502 words (✓) |

| ChatGPT GPT-5.4 | 8/10 | 9/10 | 8/10 | 575 words (✗) |

| Gemini 3.1 Pro | 8/10 | 8/10 | 8/10 | 530 words (✗) |

What surprised me: Claude’s ability to maintain precise word counts without sacrificing substance. Opus 4.7’s adaptive thinking (set to “xhigh”) produced the most nuanced analysis yet—noticeably better structured than Opus 4.6 at “high.” ChatGPT GPT-5.4 still consistently went 10-15% over target length despite explicit constraints.

Winner: Claude Opus 4.7 for structured summarization
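If you want to reproduce the word-count check on your own outputs, a small helper is enough. The sketch below assumes a 5% tolerance around the target, which is my own threshold for illustration and not a rule stated in this review, though it does reproduce the pass/fail marks in the table above.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words, the way most editors do."""
    return len(text.split())


def adheres(count: int, target: int, tolerance: float = 0.05,
            limit_only: bool = False) -> bool:
    """Check a word count against a target.

    limit_only=True treats target as a hard maximum ("200 words max").
    Otherwise the count must land within +/- tolerance of the target.
    The 5% default is an assumption chosen for this example.
    """
    if limit_only:
        return count <= target
    return abs(count - target) <= target * tolerance


if __name__ == "__main__":
    results = [("Claude Opus 4.7", 498), ("Claude Opus 4.6", 502),
               ("ChatGPT GPT-5.4", 575), ("Gemini 3.1 Pro", 530)]
    for model, count in results:
        print(model, "pass" if adheres(count, 500) else "fail")
```

The `limit_only` flag covers prompts that set a cap rather than a target, such as the 200-word maximum in the email test later in this review.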

Test 2: Coding – Bug Fixing and Refactoring

Task: Debug a React component with 3 intentional bugs (memory leak, incorrect hook dependency, type error) and refactor to TypeScript with proper types.

Prompt used:

"This React component has bugs causing memory leaks and rendering issues. Find all bugs, explain each one, then refactor to TypeScript with proper typing and error handling. Include unit test stubs using Jest."

| Model | Bugs Found | Explanation Quality | TypeScript Quality | Test Coverage |

|---|---|---|---|---|

| Claude Opus 4.7 | 3/3 | 10/10 | 9/10 | 98% |

| Claude Opus 4.6 | 3/3 | 9/10 | 9/10 | 95% |

| ChatGPT GPT-5.4 | 3/3 | 8/10 | 8/10 | 88% |

| Gemini 3.1 Pro | 2/3 | 7/10 | 7/10 | 80% |

What surprised me: Opus 4.7’s self-verification behavior is a game-changer. After finding and fixing bugs, it automatically re-checked its own solutions before presenting them—catching an edge case in the memory leak fix that Opus 4.6 missed. The agent teams feature (introduced in 4.6) combined with 4.7’s verification produced 98% test coverage vs 95% from 4.6 and 88% from ChatGPT. GPT-5.4 closed the gap on speed vs. quality but Claude still leads on thoroughness.

Winner: Claude Opus 4.7 for production code; ChatGPT GPT-5.4 for rapid prototyping

Test 3: Data Analysis and Structured Output

Task: Analyze a CSV of 500 sales transactions, identify trends, and output findings in a specific JSON schema.

Prompt used:

"Analyze this sales data and return findings in this exact JSON format: {trends: [], anomalies: [], recommendations: [], confidence_scores: {}}. Focus on month-over-month growth patterns and geographic outliers."

| Model | Schema Adherence | Hallucination Rate | Insight Quality | Anomaly Detection |

|---|---|---|---|---|

| Claude Opus 4.7 | 100% | ~1% | 9/10 | 5/5 found |

| Claude Opus 4.6 | 100% | ~1% | 9/10 | 5/5 found |

| ChatGPT GPT-5.4 | 95% | ~10% | 8/10 | 4/5 found |

| Gemini 3.1 Pro | 90% | ~9% | 7/10 | 3/5 found |

What surprised me: Claude’s refusal to speculate kept it from making up a “customer segment” that didn’t exist in the data. Opus 4.7 maintained Opus 4.6’s ~1% hallucination rate while improving structured output quality. Its self-verification flagged one ambiguous data point that 4.6 had silently interpreted. ChatGPT GPT-5.4 improved (down to ~10% hallucination), but Gemini 3.1 Pro still hallucinated segment names.

Winner: Claude for any analysis requiring high accuracy
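Schema adherence is easy to check mechanically. The sketch below validates a model reply against the four top-level keys from the prompt, using only Python's standard library. It assumes the reply is strict JSON with quoted keys (the prompt's shorthand omits the quotes) and checks only top-level container types, not the contents. The function name is illustrative.

```python
import json

# Expected top-level keys and container types, taken from the prompt above.
EXPECTED_SCHEMA = {
    "trends": list,
    "anomalies": list,
    "recommendations": list,
    "confidence_scores": dict,
}


def schema_errors(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the reply matches."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    errors = []
    for key, expected_type in EXPECTED_SCHEMA.items():
        if key not in data:
            errors.append(f"missing key: {key}")
        elif not isinstance(data[key], expected_type):
            errors.append(f"wrong type for {key}: expected {expected_type.__name__}")
    # Sort extras so the report is deterministic.
    for key in sorted(data.keys() - EXPECTED_SCHEMA.keys()):
        errors.append(f"unexpected key: {key}")
    return errors


if __name__ == "__main__":
    reply = '{"trends": [], "anomalies": [], "recommendations": [], "confidence_scores": {}}'
    print(schema_errors(reply))  # []
```

Running a check like this over repeated runs of the same prompt gives you an adherence percentage you can compare across models.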

Test 4: Business Writing – Email Drafting

Task: Draft a professional but warm rejection email to a vendor proposal, maintaining relationship while being clear about decision.

Prompt used:

"Write an email declining this vendor proposal. Tone: professional but warm, specific about why it's not a fit, leave door open for future. 200 words max. Avoid corporate jargon like 'circle back' or 'moving forward.'"

| Model | Tone | Clarity | Word Count | Jargon Avoidance |

|---|---|---|---|---|

| Claude Opus 4.7 | 8/10 | 10/10 | 198 words (✓) | 10/10 |

| ChatGPT GPT-5.4 | 10/10 | 9/10 | 220 words (✗) | 8/10 |

| Gemini 3.1 Pro | 7/10 | 8/10 | 195 words (✓) | 7/10 |

What surprised me: ChatGPT GPT-5.4’s more natural, conversational flow. Claude’s version was precise and professional but felt slightly formal. Opus 4.7 improved on 4.6’s tone marginally but still trails ChatGPT for creative warmth. I ended up using a hybrid: Claude’s structure with ChatGPT’s phrasing.

Winner: ChatGPT GPT-5.4 for creative/conversational writing

Test 5: Reliability – Hallucination and Refusal Behavior

Task: 10 queries designed to trigger hallucination or inappropriate responses, including requests for:

- Recent events (post-training cutoff)

- Specific statistics that require current data

- Potentially harmful how-to questions

- Ambiguous technical questions

| Model | False Confidence | Appropriate Refusals | False Positives (benign coding prompts blocked) | Overall Reliability |

|---|---|---|---|---|

| Claude Opus 4.7 | 0/10 | 10/10 | 0/100 | 10/10 |

| Claude Opus 4.6 | 0/10 | 10/10 | 2/100 | 9/10 |

| ChatGPT GPT-5.4 | 2/10 | 8/10 | 1/100 | 7/10 |

| Gemini 3.1 Pro | 1/10 | 8/10 | 1/100 | 7/10 |

What surprised me: Opus 4.7 continued Opus 4.6’s zero false-confidence streak and added a notable new behavior: when uncertain, it explicitly states its confidence level rather than just declining. The new cybersecurity safeguards (first in any Claude model) automatically blocked 3 test prompts that attempted to elicit exploit code—without affecting legitimate security research queries. Claude’s safety classifiers also showed zero false positives on benign coding questions, a significant improvement from earlier models.

Winner: Claude Opus 4.7 for reliability and safety

My Actual Workflow

- Claude Pro (Opus 4.7): Daily coding, technical writing, document analysis, data work — the self-verification and improved vision make it even more reliable

- ChatGPT Plus (GPT-5.4): Creative writing, brainstorming, quick questions where speed matters

- Gemini Advanced (3.1 Pro): When I need Google Workspace integration

Claude AI Strengths

1. State-of-the-Art Coding Performance

Evidence: Claude Opus 4.7 scores 87.6% on SWE-bench Verified—the highest of any generally available model—and 64.3% on the harder SWE-bench Pro (vs GPT-5.4’s 57.7% and Gemini 3.1 Pro’s 54.2%). For context, human engineers score ~70% on SWE-bench Verified under time pressure.

Practical example: I asked Claude to refactor a 2,000-line Node.js API from CommonJS to ES modules while maintaining backward compatibility. Opus 4.7 completed the task in a single 40-minute session with zero runtime errors, and its built-in self-verification caught two potential import path issues before presenting the final code. Agent teams (from 4.6) parallelized the review even further.

Limitation: Claude works best on well-defined tasks with clear requirements and struggles more with ambiguous specifications.

2. Exceptional Long-Context Understanding

Evidence: In my testing with a 150-page legal contract, Claude maintained thread-specific details across 40+ follow-up questions without mixing up parties or clauses—even when questions jumped between sections. Opus 4.7 inherits Opus 4.6’s 1M token context (beta) and adds high-resolution vision (3.75 MP) for analyzing scanned documents and screenshots with much greater precision.

Practical example: Loaded an entire React codebase (80+ components, 15K lines) and asked Claude to trace data flow from API call to UI render. It accurately mapped the path through 6 intermediate components.

3. Lower Hallucination Rates

Evidence: Across 50 test queries requiring factual accuracy, Opus 4.7 maintained ~1% hallucination rate (same as Opus 4.6). The new self-verification behavior reduces false confidence further. ChatGPT GPT-5.4 improved to ~10% (down from 16% on GPT-5.2). Gemini 3.1 Pro sits at ~9%.

Practical example: When asked about a fictional company’s founding date, Claude responded “I don’t have reliable information about that specific company” while ChatGPT provided a confident but incorrect date.

4. Transparent Reasoning with Extended Thinking

Evidence: When enabled, Claude shows its reasoning process—not just the final answer. Opus 4.7’s new “xhigh” effort level provides finer control between thoroughness and speed. The self-verification step adds an extra layer of transparency—you can see Claude checking its own work.

Practical example: Asked Claude to recommend database architecture. Extended thinking showed it considered 4 options, evaluated tradeoffs, and eliminated 3 before recommending PostgreSQL with specific reasoning for my use case.

5. Strong Safety and Ethics Guardrails

Evidence: Constitutional AI training means Claude naturally declines harmful requests and protects privacy without feeling heavy-handed. Opus 4.7 is the first Claude model with automated cybersecurity safeguards—detecting and blocking prohibited high-risk cybersecurity use cases while maintaining full capability for legitimate security research and development.

Practical example: When analyzing customer data, Claude automatically flagged personally identifiable information and suggested anonymization techniques—without me asking.

6. Superior Document Analysis

Evidence: Gemini and ChatGPT occasionally mixed up figures when analyzing multi-table spreadsheets. Claude maintained accuracy even with 20+ cross-references. Opus 4.7’s 3.75MP vision makes it especially strong for scanned PDFs and screenshots. For users who need every answer strictly grounded in their uploaded sources — with no general knowledge leaking in — Google’s NotebookLM takes a different architectural approach by constraining responses entirely to your documents.

Practical example: Analyzed quarterly financial statements (3 years, 12 quarters) and accurately identified the specific quarter where gross margin declined. ChatGPT cited the wrong quarter in 2/3 test runs.

Claude AI Weaknesses & Limitations

1. Limited Persistent Memory

The problem: Unlike ChatGPT’s memory system, Claude doesn’t remember your preferences, writing style, or past conversations across sessions by default. Each chat starts fresh unless you manually include context via Projects or have access to the Memory beta.

Impact: Every time I start a new conversation, I have to re-explain my role, company context, preferred citation style, etc. ChatGPT remembers these automatically.

Mitigation:

- Use Projects feature to maintain context within topic areas

- Create custom style guides you paste into new conversations

- Use the Memory feature (beta) if you have access

Who this affects most: Users with recurring tasks or specific style requirements

2. Overzealous Safety Classifiers

The problem: Claude’s CBRN (chemical, biological, radiological, nuclear) classifiers sometimes flag benign content, particularly around chemistry, biology, or certain coding topics.

Impact: In my testing, 0/100 legitimate coding questions were blocked with Opus 4.7—a significant improvement. Anthropic has reduced false positives by 10x+ since initial deployment. Opus 4.7’s cybersecurity safeguards are more targeted than previous broad-spectrum CBRN classifiers, resulting in fewer false positives for legitimate use.

Practical example: Previous Claude models blocked code for a chemistry simulation (educational software) due to chemical content detection. Opus 4.7 handled the same prompt correctly.

Mitigation:

- Rephrase to avoid trigger words

- Context matters—explain educational/legitimate purpose upfront

- Opus 4.7 has significantly reduced false positives

Who this affects most: Researchers, educators, science communicators

3. Slower Response Times

The problem: Claude is noticeably slower than ChatGPT and significantly slower than Gemini Flash, especially for short queries.

Impact: For quick questions or rapid iteration, the 3-5 second delay feels sluggish compared to near-instant competitors.

Measured example:

- Gemini Flash: 1.2 seconds average (simple query)

- ChatGPT: 2.1 seconds average

- Claude: 4.8 seconds average

Mitigation:

- Use Claude Haiku 4.5 for speed-critical tasks (2x faster than Sonnet)

- Accept the tradeoff for complex tasks where quality > speed

Who this affects most: Users who need rapid back-and-forth iteration

4. Smaller Integration Ecosystem

The problem: ChatGPT has thousands of plugins and third-party integrations. Claude’s ecosystem is smaller, though growing via MCP (Model Context Protocol). Opus 4.7’s native MCP optimization improves the agentic experience but the ecosystem gap persists.

Impact: You may not find the specific tool integration you need. For example, ChatGPT has Zapier, Notion, Canva—Claude requires manual setup via MCP.

Practical example: Wanted to connect Claude to my CRM (Salesforce). ChatGPT had a ready-made plugin. Claude required custom MCP server setup (took 2 hours).

Mitigation:

- Check claude.ai integration directory first

- Use API with Make.com or Zapier for custom workflows

- Request specific integrations from Anthropic

Who this affects most: Non-technical users wanting plug-and-play integrations

5. Privacy Policy Change (September 2025)

The problem: Anthropic changed its stance on data collection. Consumer accounts (Free, Pro, Max) now have training-data sharing turned on by default unless you explicitly disable it. The previous stance was no training on user data.

Impact: If you didn’t change your settings by September 28, 2025, your conversations may be used for model training and retained up to 5 years.

What’s protected:

- Enterprise/Team accounts: NOT used for training (guaranteed)

- API users: NOT used for training

- Incognito chats: NOT used for training even if opted in

- Deleted conversations: NOT used for training

Mitigation:

- Go to Settings > Privacy > Disable “Help improve Claude”

- Use Incognito mode for sensitive conversations

- Upgrade to Team/Enterprise for guaranteed protection

- Delete sensitive chats you don’t want retained

Who this affects most: Professionals discussing confidential work on consumer plans

6. Less Creative/Conversational for General Writing

The problem: Claude’s outputs can feel formal and precise rather than warm and creative. This is a feature for technical work but a bug for creative tasks.

Impact: Marketing copy, blog posts, social media often feel “safe” rather than punchy. I find myself asking for multiple rewrites to get the tone right. For content creation workflows, you may prefer dedicated AI tools for content creation or tools like Jasper AI for marketing-specific needs.

Practical example: Asked both Claude and ChatGPT to write a product launch tweet. ChatGPT’s felt scroll-stopping. Claude’s was accurate but bland.

Mitigation:

- Explicitly request “creative,” “punchy,” or “conversational” tone

- Provide examples of the style you want

- Use ChatGPT for first draft, Claude for fact-checking and structure

Who this affects most: Marketers, content creators, social media managers

7. Limited File Type Support

The problem: While Claude handles PDFs, images, and common formats well, it doesn’t support Excel files (.xlsx) directly via upload—you must convert to CSV first.

Impact: Extra step for data analysts working with spreadsheets.

Mitigation:

- Convert Excel to CSV before uploading

- Use Google Sheets integration for direct access

- Request XLSX support via feedback

Claude vs ChatGPT vs Gemini

Head-to-Head Comparison Table

| Feature | Claude Opus 4.7 | ChatGPT GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|

| SWE-bench Verified | 87.6% | ~72% | ~68% |

| SWE-bench Pro | 64.3% | 57.7% | 54.2% |

| Hallucination Rate | ~1% | ~10% | ~9% |

| Context Window | 1M tokens (beta) | 128K | 1M |

| Vision | 3.75 MP | Standard | Multimodal (audio/video) |

| Self-Verification | Yes | No | No |

| Memory | Limited beta | Full memory | Limited |

| Speed | Slowest | Fast | Fastest |

| Creative Writing | Good | Excellent | Good |

| Ecosystem | Growing (MCP) | Largest | Google integrated |

| Safety Approach | Constitutional AI + ASL-3 | RLHF | Standard |

| Monthly Price | $20 (Pro) | $20 (Plus) | $19.99 (Advanced) |

| API (Input/Output) | $5/$25 | $2.50/$10 | $1.25/$5 |

For a detailed breakdown, see our comprehensive Gemini Review 2026 covering Google’s AI assistant.

Price-Performance Analysis

Scenario: 10M input tokens plus 10M output tokens per month (typical medium-sized app)

| Model | Monthly API Cost | Best For |

|---|---|---|

| Gemini 3.1 Flash | ~$75 | Volume applications, speed |

| Claude Sonnet 4.6 | ~$180 | Production sweet spot |

| ChatGPT GPT-5.4 | ~$125 | General purpose |

| Claude Opus 4.7 | ~$300 | Highest accuracy tasks |

Verdict: Gemini offers best price-performance for volume applications. Claude Opus 4.7 justified for tasks requiring highest accuracy—the SWE-bench gains and self-verification add real value at the same $5/$25 price as Opus 4.6. Sonnet 4.6 is the sweet spot for most production use cases. For teams prioritizing the lowest API cost with strong vision and agent capabilities, Kimi K2.5 offers $0.60/$3.00 per 1M tokens — significantly below all three.
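The monthly figures in the table can be reproduced with simple arithmetic. The sketch below assumes the scenario means 10M input tokens plus 10M output tokens per month, which is the split that matches the table, and uses the per-million-token prices quoted elsewhere in this review. Gemini 3.1 Flash is left out because the review does not list its per-token price.

```python
# USD per million tokens (input, output), from the tables in this review.
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "ChatGPT GPT-5.4": (2.50, 10.00),
}


def monthly_cost(model: str, input_mtok: float, output_mtok: float) -> float:
    """Monthly API cost in USD for a volume given in millions of tokens."""
    in_price, out_price = PRICES[model]
    return input_mtok * in_price + output_mtok * out_price


if __name__ == "__main__":
    for model in PRICES:
        print(f"{model}: ${monthly_cost(model, 10, 10):.0f}")
    # Claude Opus 4.7: $300
    # Claude Sonnet 4.6: $180
    # ChatGPT GPT-5.4: $125
```

Most real workloads send far more input than output, so plug in your own ratio: a 9:1 input-to-output split narrows the gap between models considerably compared with the 1:1 split used here.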

Migration Considerations

From ChatGPT to Claude:

- Gain: Best-in-class coding (87.6% SWE-bench), lower hallucinations, self-verification, stronger ethics

- Lose: Memory feature, faster responses, larger ecosystem

- Cost impact: Neutral (same subscription price)

- Recommended if: Coding or analysis is >50% of your use case

From Gemini to Claude:

- Gain: Superior coding benchmarks, better long-context handling, self-verification

- Lose: Google Workspace integration, speed, multimodal breadth

- Cost impact: Effectively neutral ($20 vs $19.99 for the Pro tier)

- Recommended if: You don’t live in Google ecosystem

From Claude to ChatGPT:

- Gain: Memory, speed, conversational quality, ecosystem

- Lose: Coding edge, accuracy, extended thinking transparency

- Cost impact: Neutral

- Recommended if: Creative work or general productivity is primary use

Claude Use Cases: Who Should Use Claude?

1. Developers and Software Engineers

Best Claude models: Opus 4.7, Opus 4.6

Why Claude excels:

- 87.6% on SWE-bench Verified (real-world engineering tasks)—highest among GA models

- 64.3% on SWE-bench Pro (vs GPT-5.4’s 57.7%)

- Extended autonomous coding sessions (7+ hours documented)

- Agent teams for collaborative AI coding (Opus 4.6+)

- Built-in self-verification catches bugs before presenting code (Opus 4.7)

- Git integration via Claude Code

Practical applications:

- Code review and refactoring

- Bug hunting and debugging

- Test generation and coverage analysis

- Documentation generation from code

- Architecture decisions and tradeoff analysis

Pricing recommendation: Pro ($20/month) for individual developers; Team Premium ($150/user/month) for engineering teams needing Claude Code

2. Business Professionals and Executives

Best Claude models: Sonnet 4.6, Opus 4.7

Why Claude excels:

- Precise executive summaries within word limits

- Strong structured analysis (risk registers, decision matrices)

- Reliable stakeholder communications

- Lower hallucination rates for factual accuracy

Practical applications:

- Board report drafting

- Vendor proposal analysis

- Meeting summary and action item extraction

- Policy and procedure documentation

- Competitive analysis synthesis

Pricing recommendation: Pro ($20/month) for individual professionals; Team ($25/user/month) for departments

3. Legal Professionals

Best Claude models: Opus 4.7 (1M context + 3.75MP vision for scanned docs), Opus 4.6, Sonnet 4.5

Why Claude excels:

- 1M token context handles full contracts and case files

- 3.75MP vision reads scanned legal documents with high fidelity

- Accurate cross-referencing across long documents

- Conservative—admits uncertainty rather than fabricating

- Maintains confidentiality focus

Practical applications:

- Contract review and clause extraction

- Due diligence document analysis

- Legal research synthesis

- Brief and memo drafting

- Compliance document review

Pricing recommendation: Enterprise (custom) for firm-wide deployment with compliance needs; Pro for individual practitioners handling non-confidential work

4. Researchers and Academics

Best Claude models: Opus 4.7 (highest accuracy + self-verification), Opus 4.6, Sonnet 4.5

Why Claude excels:

- Superior literature synthesis across long papers

- Transparent reasoning shows methodology

- Lower hallucination for citation accuracy

- Self-verification ensures claims are double-checked

- Extended thinking for complex analysis

Practical applications:

- Literature review synthesis

- Research methodology design

- Grant proposal drafting

- Data analysis and interpretation

- Peer review assistance

Pricing recommendation: Pro ($20/month); API for programmatic access to research workflows. For researchers who need citation-backed answers grounded strictly in their own uploaded documents, Google’s NotebookLM offers a complementary approach — it only answers from your sources and cites every claim.

5. Content Creators and Writers

Best Claude models: Sonnet 4.6

Why Claude excels (with caveats):

- Excellent for research-heavy content

- Strong for technical writing and explainers

- Good for editing and structural feedback

- Less natural for pure creative/conversational content

Practical applications:

- Technical blog writing

- Course content development

- Editing and feedback

- Research compilation

- Script treatments and outlines

For marketing copy and creative content, consider specialized tools. See our Best AI Tools for Content Creation roundup for options including Jasper AI for marketing-focused needs. For fiction and creative writing scenarios specifically, Claude also works well as a ship prompt generator and OTP writing assistant when paired with dedicated prompt tools.

Pricing recommendation: Pro ($20/month)

6. Data Analysts

Best Claude models: Sonnet 4.6, Opus 4.7

Why Claude excels:

- 100% schema adherence in structured output tests

- Low hallucination in data interpretation

- Handles large datasets via Files API

- Clear statistical explanations

- Self-verification catches ambiguous data interpretations (Opus 4.7)

Practical applications:

- Data exploration and pattern identification

- SQL query generation and optimization

- Dashboard narrative generation

- Anomaly detection and explanation

- Report automation

Pricing recommendation: Pro for individual analysts; API for production data pipelines

Claude Alternatives: Best Options by Use Case

1. ChatGPT (GPT-5.4)

- Best for: Creative writing, conversational AI, broad ecosystem

- Advantage over Claude: Memory, speed, natural tone, plugin ecosystem

- Disadvantage: Higher hallucination rates, less coding precision

- Price: $20/month (Plus)

2. Google Gemini 3.1 Pro

- Best for: Google Workspace users, real-time research, multimodal (audio/video)

- Advantage over Claude: Native Google integration, faster responses, broader multimodal

- Disadvantage: Less precise for complex coding, weaker long-context accuracy

- Price: $19.99/month (Advanced)

See our Gemini Review 2026 for a complete breakdown.

3. Perplexity Pro

- Best for: Real-time research with citations

- Advantage over Claude: Purpose-built for research, automatic source verification

- Disadvantage: Not for coding or creative tasks

- Price: $20/month

4. GitHub Copilot

- Best for: IDE-integrated coding assistance

- Advantage over Claude: Seamless IDE integration, better for real-time coding

- Disadvantage: No general-purpose capabilities, less reasoning depth

- Price: $10/month (Pro, formerly Individual), $19/user/month (Business)

5. Specialized AI Image Tools

For visual content needs:

- Stable Diffusion: Best for customizable AI image generation with full control

- Cutout Pro: AI background removal for ecommerce

- remove.bg: Quick background removal for photos

- Pic Copilot: AI product photography for sellers

6. AI Video Generation Tools

For video content creation:

- RunwayML: Professional AI video generation and editing

- Pika Art: Quick AI video clips for social media

7. Multi-Model AI Workspace

If you use Claude alongside GPT, Gemini, and other models, i10X bundles 20+ AI models — including Claude — with agents, image/video generation, and workflow automation in one subscription. It’s worth considering if you’re paying for multiple separate AI tools.

Claude Privacy, Security & Compliance

September 2025 Policy Change

What changed: Anthropic updated its privacy policy effective September 28, 2025. Consumer accounts are now opted in to training-data use by default, a reversal of the company's previous stance.

Impact:

- Conversations may be used for model training

- Retention up to 5 years if opted in

- Incognito chats still protected

- Deleted conversations still protected

How to opt out:

- Settings > Privacy

- Disable “Help improve Claude”

- Use Incognito mode for sensitive conversations

- Consider Team/Enterprise for guaranteed protection

For Sensitive Work

Recommended setup:

- Team or Enterprise plan (no training data use, ever)

- API access (same protection as Enterprise)

- Custom retention policies (Enterprise only)

- SSO/SCIM integration (Enterprise only)

- Audit logging (Enterprise only)

Industries with specific requirements:

- Healthcare (HIPAA): Enterprise plan with BAA required

- Finance: Enterprise with SOC 2 Type II compliance

- Legal: Enterprise recommended; verify client confidentiality requirements

- Government: Check FedRAMP status (not currently certified)

How to Get the Best Results with Claude

Core Prompting Principles

1. Be explicit about constraints

❌ Bad: “Write an article about climate change”

✅ Good: “Write a 500-word article explaining climate change impacts for high school students. Use 10th-grade reading level. Include 2 concrete examples. Avoid political language.”

Why: Claude follows instructions precisely. Vague prompts get generic outputs. These principles apply across models — for a deeper dive into prompt engineering techniques like few-shot examples, chain-of-thought, and structured output, see our dedicated guide. For a collection of ready-to-use templates, check our ChatGPT prompt templates.

2. Use extended thinking for complex tasks

❌ Bad: [asking a complex question in standard mode]

✅ Good: “Use extended thinking to design a database schema for an e-commerce platform. Consider: user management, inventory, orders, payments. Show your reasoning.”

Why: Extended thinking reveals tradeoffs and alternatives, not just the final answer.

3. Specify format explicitly

❌ Bad: “Analyze this data”

✅ Good: “Analyze this sales data and return results in this JSON format: {revenue_by_region: [], top_products: [], anomalies: []}. Flag any data quality issues you notice.”

Why: Claude excels at structured output when you define the structure.
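If you request JSON like the prompt above, it is worth checking the reply before anything downstream consumes it. Here is a minimal sketch of that check in Python. The key names come from the example prompt; the `parse_analysis` helper and the sample reply are my own illustrative assumptions, not part of any Claude SDK.

```python
import json

# Keys taken from the example prompt; the helper itself is hypothetical.
EXPECTED_KEYS = {"revenue_by_region", "top_products", "anomalies"}


def parse_analysis(reply_text: str) -> dict:
    """Parse a model reply as JSON and confirm it has the requested structure."""
    data = json.loads(reply_text)  # raises json.JSONDecodeError on non-JSON replies
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = EXPECTED_KEYS - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    for key in EXPECTED_KEYS:
        if not isinstance(data[key], list):
            raise ValueError(f"{key!r} should be a list")
    return data


# Made-up reply text, used only to show the helper running.
sample_reply = '{"revenue_by_region": [], "top_products": ["widget"], "anomalies": []}'
result = parse_analysis(sample_reply)
print(result["top_products"])
```

Note that strict JSON needs quoted keys, so it helps to say so in the prompt if you plan to parse the output this way.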

4. Provide positive and negative examples

❌ Bad: “Write in a professional tone”

✅ Good: “Write in a professional but warm tone. Example of good tone: ‘We appreciate your patience.’ Example of tone to avoid: ‘As per our previous correspondence regarding the aforementioned matter…’”

Why: Examples calibrate style better than adjectives.

5. Use Projects for recurring context

❌ Bad: Pasting your company background into every conversation

✅ Good: Create a Project with company docs, brand guidelines, FAQ. Reference in chats: “Refer to Project context for brand voice.”

Why: Saves tokens and ensures consistency.

6. Ask Claude to show its work

❌ Bad: “Is this code correct?”

✅ Good: “Review this code. For each issue you find: (1) explain the problem, (2) show why it fails, (3) suggest a fix with reasoning.”

Why: Forces careful analysis rather than quick surface-level review.

Prompting Templates

Template 1: Document Analysis

Role: You are a [legal analyst/technical reviewer/financial auditor].

Task: Analyze the attached [contract/whitepaper/financial statement] and identify:
1. [Key terms/technical claims/financial risks]
2. [Ambiguities/gaps in reasoning/anomalies]
3. [Recommendations/questions to ask/further due diligence needed]

Format: Use markdown with H3 headers for each section. For each finding, cite specific page/section numbers.

Constraints:
- Focus only on [specific aspect]
- Flag anything requiring expert review
- If uncertain about interpretation, say so explicitly

Output length: [800 words / as needed for thoroughness]

Template 2: Code Review

Review this [Python/JavaScript/etc.] code for:
1. Bugs (functional correctness)
2. Security vulnerabilities
3. Performance issues
4. Code style/readability
5. Missing error handling

For each issue found:
- Line number(s)
- Severity: Critical / High / Medium / Low
- Explanation: Why this is a problem
- Fix: Concrete suggestion with code example

Then provide a refactored version addressing all High/Critical issues.

Context: This code [is production-facing/is prototype/etc.]

Template 3: Research Synthesis

I'm researching [topic]. I need a synthesis that:
1. Summarizes the current consensus (if any)
2. Highlights major points of disagreement
3. Identifies knowledge gaps
4. Lists 3-5 key sources I should read next

Requirements:
- Cite sources inline [Author, Year]
- Flag any claims you're uncertain about
- Distinguish between established facts and emerging theories
- 1000 words maximum

I've attached [X documents]. Use web search if you need additional current sources.

Template 4: Business Writing

Write a [email/memo/policy document] to [audience] about [topic].

Tone: [Professional but approachable/formal/etc.]
Length: [200 words max/2 pages/etc.]
Purpose: [Inform/persuade/request/etc.]

Include:
- Clear subject line (email) or title (document)
- Context (1-2 sentences)
- Main points (use bullets if >2 points)
- Clear call-to-action or next steps

Avoid:
- Corporate jargon ("synergy," "circle back")
- Passive voice where active works
- Burying the lede

Provide 2 versions: one slightly more formal, one slightly warmer.

Template 5: Creative Brainstorming (Claude’s weaker area; adjust expectations)

I need creative ideas for [campaign/product name/blog topics/etc.].

Context: [Target audience, constraints, goals]

Generate:
- 10 initial ideas (quick brainstorm)
- For the 3 most promising: expand with rationale and execution sketch

Criteria for "promising":
1. [Feasible with budget X]
2. [Aligns with brand value Y]
3. [Differentiated from competitor Z]

Note: I know creative ideation isn't your strongest suit. I'm looking for structured thinking and feasibility analysis more than wild creativity.
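If you reuse these templates often, filling the bracketed slots by hand gets error-prone. Below is a rough sketch of one way to do it in Python, using a trimmed version of the code review template with named fields in place of the brackets. The field names (`language`, `context`, `code`) and the `build_prompt` helper are assumptions of mine for illustration only.

```python
# A trimmed version of the code review template, with the bracketed
# placeholders swapped for named fields (field names are hypothetical).
CODE_REVIEW_TEMPLATE = (
    "Review this {language} code for:\n"
    "1. Bugs (functional correctness)\n"
    "2. Security vulnerabilities\n"
    "3. Performance issues\n"
    "4. Code style/readability\n"
    "5. Missing error handling\n\n"
    "Context: This code {context}\n\n"
    "{code}"
)


def build_prompt(template: str, **fields: str) -> str:
    """Fill a template; str.format raises KeyError if a field is left out."""
    return template.format(**fields)


prompt = build_prompt(
    CODE_REVIEW_TEMPLATE,
    language="Python",
    context="is a prototype",
    code="def add(a, b):\n    return a - b",
)
print(prompt)
```

The main benefit is the failure mode: a forgotten field raises an error instead of sending a prompt that still contains a literal placeholder.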

Advanced Techniques

Chaining prompts for complex workflows:

- First prompt: “Analyze this user feedback data and extract themes. Return only a JSON list of themes with example quotes.”

- Second prompt: “Using these themes: [paste JSON], draft a product roadmap addressing the top 3 issues. Format as a table with columns: Issue, Proposed Solution, Effort (S/M/L), Impact (High/Med/Low).”

- Third prompt: “Write an email to stakeholders presenting this roadmap. Emphasize customer-centricity. 300 words.”

Why this works: Breaking into stages prevents context mixing and allows iteration at each step.
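The three-step chain above can also be scripted. What follows is a minimal sketch, assuming you supply your own `ask` function that sends a prompt to a model and returns the text reply. Both `ask` and `run_feedback_chain` are hypothetical names, and the stub at the bottom only stands in for a real model call so the example runs offline.

```python
from typing import Callable


def run_feedback_chain(ask: Callable[[str], str], feedback: str) -> dict:
    """Run the three prompts in order, feeding each reply into the next stage."""
    themes_json = ask(
        "Analyze this user feedback data and extract themes. "
        "Return only a JSON list of themes with example quotes.\n\n" + feedback
    )
    roadmap = ask(
        f"Using these themes: {themes_json}, draft a product roadmap addressing "
        "the top 3 issues. Format as a table with columns: Issue, Proposed "
        "Solution, Effort (S/M/L), Impact (High/Med/Low)."
    )
    email = ask(
        "Write an email to stakeholders presenting this roadmap. "
        "Emphasize customer-centricity. 300 words.\n\n" + roadmap
    )
    # Keep every intermediate result so each stage can be reviewed or rerun.
    return {"themes": themes_json, "roadmap": roadmap, "email": email}


def fake_ask(prompt: str) -> str:
    """Stand-in for a real model call; replace with your own client code."""
    return f"[reply to: {prompt[:30]}...]"


outputs = run_feedback_chain(fake_ask, "Checkout is slow. Search misses typos.")
print(list(outputs))
```

Returning all three outputs, not just the email, keeps the iteration benefit: if the roadmap looks off, you can fix that stage without redoing the theme extraction.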

Using Claude to improve your prompts:

I'm trying to get you to [desired outcome] but my prompts aren't working well.

Here's what I've tried: [paste previous prompts]
Here's what I got: [describe issues]
Here's what I actually need: [specific requirements]

Please:
1. Explain why my prompts aren't working
2. Suggest 2-3 improved prompt structures
3. Show an example of a well-structured prompt for this task

Leveraging extended thinking for decisions:

I need to decide between [Option A] and [Option B] for [context].

Use extended thinking to:
1. List pros/cons of each option
2. Identify hidden assumptions I might be making
3. Suggest evaluation criteria I haven't considered
4. Recommend a decision framework

Don't tell me what to decide. Help me think through it systematically.

Common Mistakes to Avoid

- ❌ Treating Claude like Google: Claude doesn’t have real-time info without web search. For current events, let it search or verify facts against provided sources.

- ❌ Over-reliance without verification: Even with low hallucination rates, always verify critical facts, code, or legal/medical claims.

- ❌ Ignoring refusals: If Claude declines, it’s usually for safety reasons. Refine your prompt rather than trying to “jailbreak.”

- ❌ Not using Projects for repeated tasks: You’re wasting tokens and context if you paste the same background info repeatedly.

- ❌ Expecting memory: Claude won’t remember your preferences conversation-to-conversation (unless you have beta memory access). Document your style guide and reference it.

- ❌ Using Free tier for production work: Rate limits and reduced model access mean the Free tier is for testing only.

Claude Review – FAQs

Is Claude AI free?

Yes, Claude offers a free tier with access to the Claude Sonnet 4 model. The free tier includes a limited number of messages per day (approximately 30-100, varying with demand), 5-hour session resets, and basic features. For consistent access, higher limits, and Opus models including Opus 4.7, upgrade to Pro ($20/month).

Is Claude better than ChatGPT?

It depends on use case. Claude Opus 4.7 dominates coding (87.6% vs GPT-5.4’s ~72% on SWE-bench Verified; 64.3% vs 57.7% on SWE-bench Pro), long-document analysis (200K-1M context with 3.75MP vision for scanned docs), and reliability (~1% vs ~10% hallucination rate). ChatGPT GPT-5.4 wins for creative writing, conversational tone, memory features, and speed. Most power users benefit from both.

How much does Claude Pro cost?

Claude Pro costs $20/month (or $17/month billed annually in some regions). This includes 5× the free tier usage, access to all models including Opus 4.7, extended thinking, web search, Research feature, and priority access during peak times.

Is Claude safe to use for confidential work?

On consumer plans (Free/Pro/Max): Not by default—data may be used for training unless you opt out. On Team/Enterprise/API plans: Yes—data is never used for training. For truly confidential work, use Team ($25/user/month minimum) or Enterprise (custom pricing) with guaranteed data protection.

Can Claude generate images?

No, Claude cannot generate images. It can analyze and describe images you upload—with Opus 4.7 supporting up to 3.75 megapixel resolution for high-fidelity visual analysis—but image generation is not a capability. For AI image generation, consider specialized tools like Stable Diffusion for full creative control.

What’s Claude’s knowledge cutoff?

Claude’s training data has a cutoff (varies by model version but typically 6-12 months prior to release). However, web search integration (available on Pro and higher) allows real-time information retrieval for current data needs.

Can I use Claude for commercial projects?

Yes, but terms differ by plan. API and Enterprise plans are explicitly designed for commercial use. Consumer plans (Free/Pro) allow commercial use, but check the Terms of Service for output ownership details.

Claude Review: Final Verdict

Overall Rating: 9.2/10

Claude has earned its place as the leading AI assistant for professionals in 2026, particularly for those who prioritize accuracy, reasoning transparency, and long-context work over conversational polish. Opus 4.7 solidifies that lead.

What I love:

- Opus 4.7’s 87.6% SWE-bench score is the industry’s best for a generally available model

- Built-in self-verification catches errors before you see them

- High-resolution vision (3.75 MP) transforms document and UI analysis

- The “xhigh” effort level hits the sweet spot between thoroughness and speed

- Cybersecurity safeguards feel targeted, not heavy-handed

- 1M context + adaptive thinking remain genuine innovations from Opus 4.6

- Consistently lower hallucination rates than any competitor

What needs improvement:

- Memory feature still in limited beta

- Slower response times than competitors—especially with xhigh/max effort

- Creative writing tone can feel sterile compared to ChatGPT GPT-5.4

- Privacy policy reversal (September 2025) remains disappointing

- Opus 4.7 is still less capable than the limited-release Claude Mythos Preview

Who Should Subscribe Today

Immediately subscribe to Claude Pro if you:

- Write code professionally (87.6% SWE-bench — best in class)

- Analyze documents over 50 pages regularly (1M context + 3.75MP vision)

- Need reliable, low-hallucination outputs (~1% rate with self-verification)

- Value reasoning transparency (5 effort levels including new xhigh)

Wait or use competitors if you:

- Primarily need creative/conversational AI

- Require robust memory across sessions

- Live in the Google ecosystem

- Need extensive third-party integrations

The honest truth: I pay for both Claude Pro and ChatGPT Plus. Claude handles my coding, analysis, and technical writing. ChatGPT handles creative brainstorming and quick questions. Gemini stays for Google Calendar integration. The “best” AI assistant is the one that matches your actual workflow—not the one with the highest benchmark scores.