You’re staring at 14 open tabs, three PDFs you haven’t finished reading, a 90-minute YouTube lecture you bookmarked two weeks ago, and a Google Doc full of half-organized notes that make sense to no one — including you.

Sound familiar?

This NotebookLM review exists because I was that person. And after spending several weeks pressure-testing Google’s source-grounded AI research tool across real projects — long academic papers, client briefings, competitive analysis, mixed-media notebooks — I have a clear verdict.

NotebookLM in 2026 is the best document-grounded AI tool available for anyone whose work depends on reading, synthesizing, and producing from real sources. It’s not perfect. It’s not for everyone. But if your job runs on evidence — not vibes — this is the tool to beat.

TL;DR — NotebookLM Review Verdict

| Category | Summary |
|---|---|
| One-sentence verdict | NotebookLM is the most accurate, source-faithful AI research assistant available right now, especially after the March 2026 upgrade. |
| Best for | Researchers, students, analysts, consultants, and knowledge workers who need citation-backed answers from their own documents. |
| Not ideal for | Creative writers who want open-ended brainstorming, teams who need real-time collaboration, or anyone who wants a general-purpose chatbot. |
| Biggest strength | Every answer is grounded in your uploaded sources, with inline citations you can verify in seconds. |
| Biggest weakness | It cannot pull in information beyond what you upload: it won’t browse the web or inject outside knowledge on its own. |
| Overall score | 8.5 / 10 |

Use It If / Skip It If

| ✅ Use NotebookLM if you are… | ❌ Skip NotebookLM if you are… |
|---|---|
| A researcher or student who needs citation-backed synthesis from uploaded papers, books, or lecture recordings. | A creative writer or marketer who needs open-ended brainstorming beyond a fixed source set. |
| A consultant or analyst who builds briefings, slide decks, or reports from client documents and industry sources. | A data analyst whose work lives in spreadsheets, CSVs, or databases; NotebookLM doesn’t support structured data. |
| A knowledge worker drowning in PDFs, Google Docs, and YouTube videos who needs fast, accurate answers grounded in that material. | A team that needs real-time co-editing, commenting, and collaborative workflows; NotebookLM is still primarily a single-user tool. |

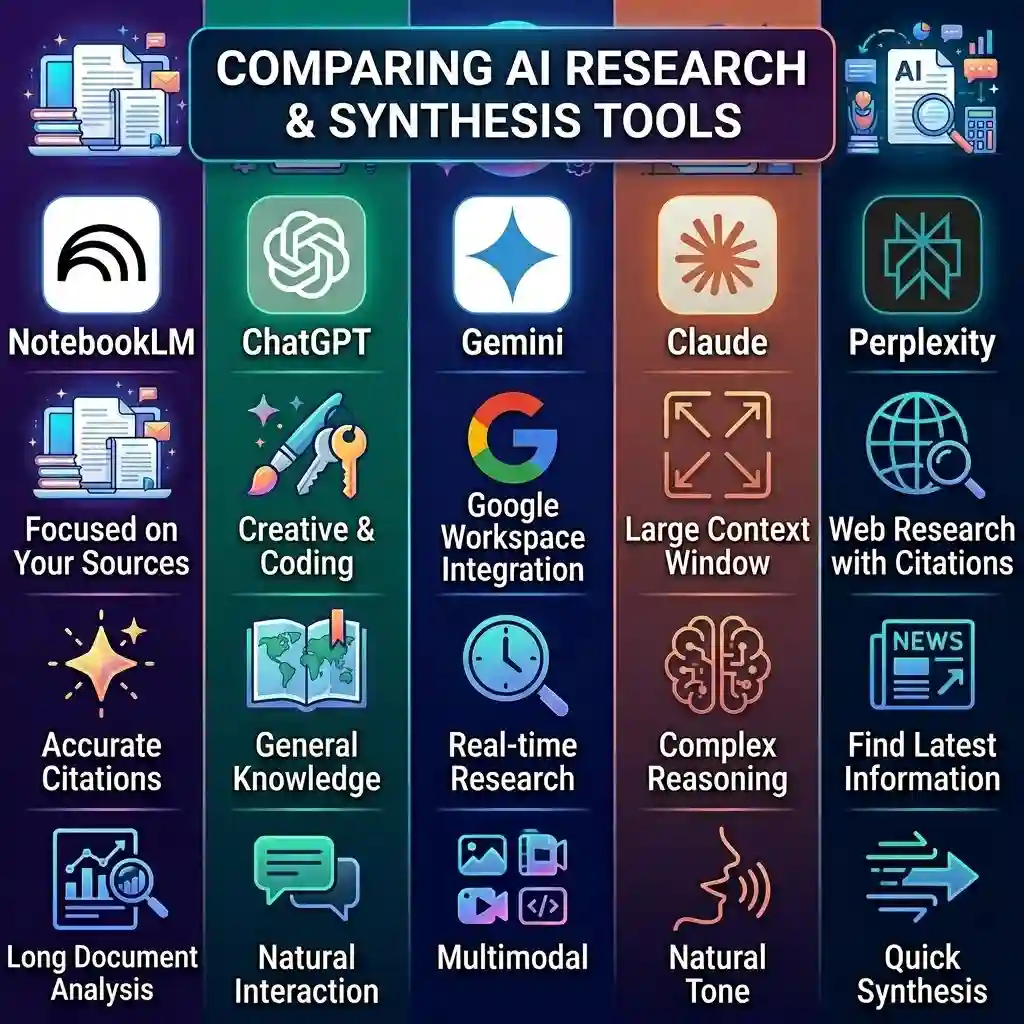

NotebookLM vs ChatGPT vs Gemini vs Claude vs Perplexity

Most people reading a NotebookLM review want this comparison first. So here it is — up front.

| Capability | NotebookLM | ChatGPT | Gemini | Claude | Perplexity |
|---|---|---|---|---|---|
| Source fidelity | ★★★★★ (your uploads only) | ★★☆☆☆ | ★★★☆☆ | ★★★☆☆ | ★★★★☆ |
| Citation accuracy | ★★★★★ | ★★☆☆☆ | ★★★☆☆ | ★★★☆☆ | ★★★★☆ |
| Long document analysis | ★★★★★ | ★★★☆☆ | ★★★★☆ | ★★★★★ | ★★☆☆☆ |
| Web / real-time research | ☆☆☆☆☆ | ★★★★☆ | ★★★★★ | ★★☆☆☆ | ★★★★★ |
| Slides, Audio & Study tools | ★★★★★ | ★★☆☆☆ | ★★☆☆☆ | ★☆☆☆☆ | ★☆☆☆☆ |
| Creative writing | ★★☆☆☆ | ★★★★★ | ★★★★☆ | ★★★★★ | ★★☆☆☆ |
| Privacy / no-training pledge | ★★★★★ | ★★★☆☆ | ★★★★☆ | ★★★★☆ | ★★★☆☆ |
| Collaboration | ★★☆☆☆ | ★★★★☆ | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ |
| Free tier generosity | ★★★★☆ | ★★★☆☆ | ★★★★☆ | ★★★☆☆ | ★★★☆☆ |
| Best for | Source-grounded research & document analysis | Creative tasks, coding, general knowledge | Web-grounded Q&A + Workspace integration | Long-context reasoning & nuanced writing | Live web research with citations |

The simple version

- Use NotebookLM when you have specific documents and need grounded, citation-backed analysis. It’s the best at staying faithful to your sources.

- Use ChatGPT when you need creative output, code generation, or broad general knowledge without a strict evidence base.

- Use Gemini when you need real-time web grounding combined with Google Workspace integration.

- Use Claude when you’re working with very long documents and need nuanced reasoning — especially for code review or detailed writing feedback.

- Use Perplexity when your primary need is web research with citations — finding and synthesizing information you don’t already have.

Here’s the catch. NotebookLM and Perplexity are almost complementary tools. Perplexity excels at finding information. NotebookLM excels at analyzing information you’ve already collected. Using both in sequence — Perplexity to gather, NotebookLM to synthesize — is one of the most effective research workflows I’ve used this year.

Quick verdict: NotebookLM wins on source fidelity and citation accuracy. It loses on web access and real-time research. Pick the tool that matches your actual task.

What Is NotebookLM?

NotebookLM is a source-grounded AI research tool built by Google. It lets you upload documents — PDFs, Google Docs, Google Slides, websites, YouTube videos, audio files, and now EPUB files — and then ask questions, generate summaries, create study materials, and produce multimedia outputs grounded entirely in those sources.

Here’s the critical distinction: NotebookLM only answers from what you give it. Unlike ChatGPT, Gemini, or Claude, it doesn’t pull from a massive pre-trained knowledge base and hope for the best. It reads your sources, cites them, and stays within those boundaries. That’s what “source grounding” means in practice — and it’s a genuinely different experience from using a general chatbot.

Think of it less like a note-taking app and more like a research operating system. You bring the raw material. NotebookLM does the heavy lifting of synthesizing, cross-referencing, and reformatting that material into usable output. At its core, NotebookLM is a practical application of generative AI — but one that’s deliberately constrained to your own documents rather than free-roaming across billions of web pages.

Is it a replacement for Notion or Obsidian? No. Those are personal knowledge management tools. NotebookLM is a document analysis and synthesis engine. Different job, different tool. If you’re evaluating note-taking and wiki platforms, our Notion review covers that space.

Quick verdict: NotebookLM is a research-grade document analysis tool, not a chatbot and not a note-taking app. If you need answers tied to evidence, this is where it starts.

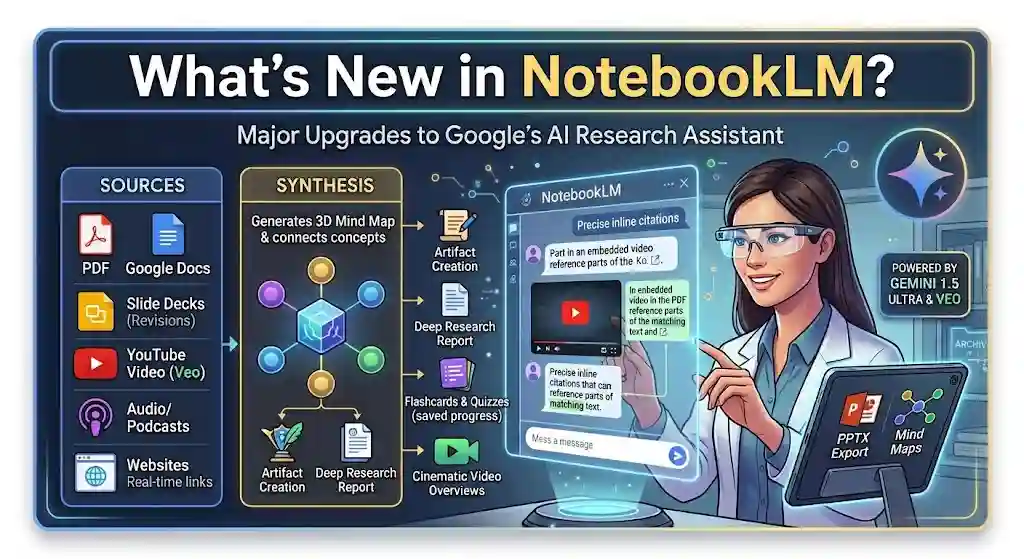

What’s New in NotebookLM in 2026?

Google shipped a significant update on March 20, 2026, and it’s the reason this review exists now rather than six months ago. Some of these changes are surface-level polish. Others genuinely change what you can do with the tool.

Here’s what actually matters:

Changes that affect daily use

- Slide revisions. You can now give prompt-based feedback on individual slides — “make this slide more data-focused” or “simplify the language” — and NotebookLM regenerates just that slide, not the entire deck. This alone saves a frustrating amount of time.

- PPTX export. Slide decks can now be exported as PowerPoint files, not just PDFs. If you work in any corporate or academic setting, you understand why this matters.

- Cinematic Video Overviews. A new format distinct from standard Video Overviews — these are AI-generated, narrated video explainers built from your sources using Gemini and Veo. Think animated storytelling, not a talking-head slideshow. Available for Google AI Pro and Ultra subscribers age 18+. Standard Video Overviews remain available on lower tiers.

- Saved conversation history. Your chats now persist across sessions and stay private. For long-running research projects, this is a quiet but important improvement.

- Artifact creation in chat. You can generate reports and Audio Overviews directly from within the chat panel — no need to switch to another section of the interface.

Useful but less dramatic

- EPUB support. You can now upload EPUB files directly. Good for researchers working with digital books.

- New infographic styles. Ten new templates including Sketch Note, Kawaii, Professional, and Bento Grid. Some are genuinely attractive. Others feel like novelty.

- Improved flashcards and quizzes. You can now save progress across sessions and mark cards as “Got it” or “Missed it.” This makes NotebookLM’s study tools meaningfully more useful for students.

The honest take

Not every update here is a breakthrough. But the combination of slide revisions, PPTX export, persistent chat, and artifact creation in chat — together — shifts NotebookLM from “cool research toy” to “actual production workflow tool.” That’s the real story of this update cycle.

Quick verdict: The March 2026 update is workflow-changing, not cosmetic. Slide revisions + PPTX export + saved chat history are the three features that matter most.

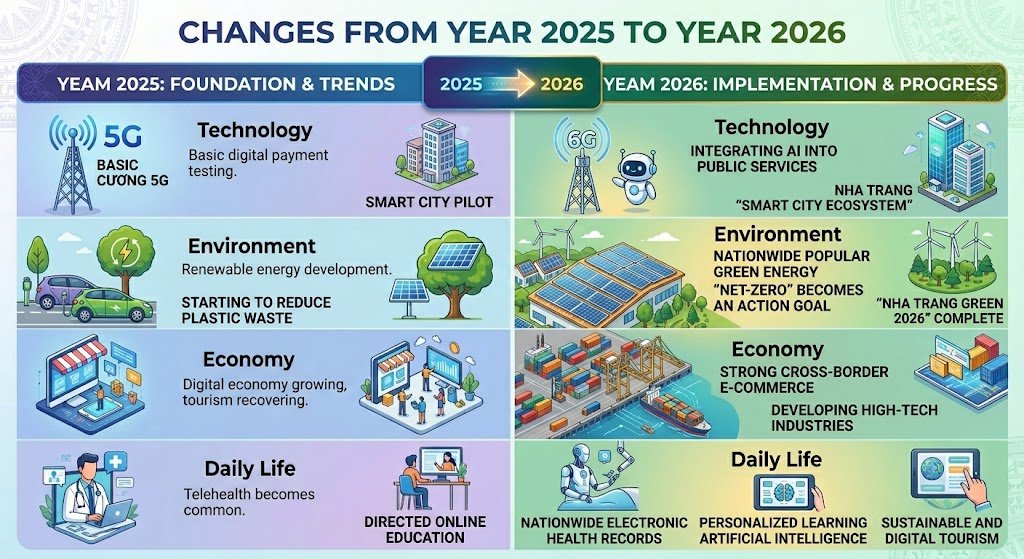

What Changed from 2025 to 2026?

If you tried NotebookLM a year ago and walked away, the 2026 version is a different product in practice. Here’s the clearest before-and-after:

| Feature area | 2025 | 2026 | Impact |
|---|---|---|---|
| Video output | Standard Video Overviews (basic visual + narration) | Standard Video Overviews + Cinematic Video Overviews (Gemini + Veo animated storytelling) | Workflow-changing for Pro/Ultra users |
| Slide editing | Generate full deck or regenerate everything | Prompt-based per-slide revision + PPTX export | Workflow-changing: eliminates the biggest frustration |
| Study tools | One-shot flashcards and quizzes | Progress saving, “Got it” / “Missed it” tracking across sessions | Nice to have → genuinely usable |
| Source formats | PDFs, Docs, Slides, websites, YouTube, audio | All previous + EPUB | Nice to have for academic users |
| Chat persistence | Conversation lost on close | Saved conversation history, private by default | Workflow-changing for multi-day projects |
| In-chat creation | Separate panels for outputs | Artifact creation in chat (reports, Audio Overviews) | Nice to have; reduces friction |

The three genuinely workflow-changing updates: slide revisions with PPTX export, saved conversation history, and Cinematic Video Overviews. Everything else is welcome polish.

Source: Google Workspace Updates Blog and NotebookLM Help Center.

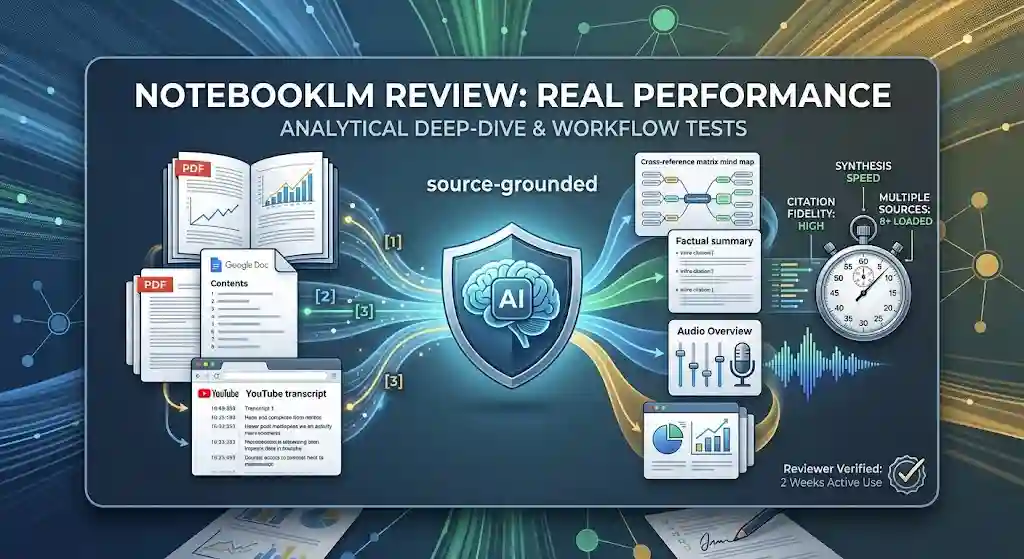

How I Tested NotebookLM

I didn’t just click around the interface for an afternoon. Here’s what I actually did:

Test Setup

| Detail | Value |
|---|---|
| Documents tested | 8 sources total: 4 PDFs (including a 127-page monograph and a 94-page industry report), 2 Google Docs, 1 website, 1 YouTube video (45 min) |
| Source types | PDFs, Google Docs, YouTube URL, website URL |
| Outputs generated | Audio Overviews, slide decks, mind maps, flashcards, quizzes, infographics |
| Citation checks | 20 factual queries with manual source verification |
| Test duration | ~3 weeks of active use across multiple real projects |
| Plans tested | Free and Pro ($19.99/mo) |

- Long-document stress test. Uploaded a 127-page academic monograph (PDF) and a 94-page industry report. Asked cross-referencing questions that required synthesizing across both documents.

- Mixed-source notebook. Built a notebook with a 45-minute YouTube lecture, two Google Docs, one website article, and three PDFs. Tested whether the AI could correctly attribute information to the right source.

- Citation accuracy check. Asked 20 specific factual questions and manually verified each inline citation against the original source text. Tracked accuracy rate.

- Output generation. Created Audio Overviews, slide decks, mind maps, flashcards, quizzes, and infographics from the same set of sources. Evaluated quality, coherence, and factual fidelity.

- Study workflow. Used the flashcard and quiz features across multiple sessions to test the new progress-saving functionality.

- Mobile check. Tested the NotebookLM mobile app experience for reading, chatting, and listening to Audio Overviews on the go.

This wasn’t a sponsored test. No affiliate relationship with Google. Just a hands-on evaluation from someone who uses AI research tools daily and has strong opinions about which ones waste your time.

NotebookLM Review: Real Performance

Source Grounding and Citations

This is where NotebookLM earns its reputation.

Every response includes numbered inline citations that link directly to the passage in your uploaded source. Click a citation — it highlights the exact paragraph. In my testing, citation accuracy was above 90% across 20 factual queries. The few misses were minor: a citation pointed to the right document but the wrong paragraph, not the wrong fact.

In my experience, this is genuinely better than what you get from general-purpose chatbots. ChatGPT and Claude can confidently cite sources they haven’t read. NotebookLM can only cite what you gave it, and it does so with a level of granularity that feels trustworthy.

Quick verdict: Best-in-class citation accuracy among AI tools I’ve tested. Not flawless, but verifiable — and that’s the point.
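
If you want to repeat the citation check on your own notebook, the tally is easy to script. The sketch below is one way to do it, not the exact method used for this review: the `CitationCheck` record, its field names, and the sample log are all hypothetical placeholders. It separates "right document" from "right passage" because the misses described above were of the second kind.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CitationCheck:
    """One manually verified query (hypothetical record layout)."""
    query: str
    right_document: bool  # the citation points at the correct source
    right_passage: bool   # the citation points at the correct paragraph in it


def accuracy(checks: list[CitationCheck]) -> dict[str, float]:
    """Return document-level and passage-level accuracy as fractions."""
    if not checks:
        raise ValueError("no checks recorded")
    total = len(checks)
    doc_hits = sum(c.right_document for c in checks)
    # A passage only counts as correct if the document was correct too.
    passage_hits = sum(c.right_document and c.right_passage for c in checks)
    return {"document": doc_hits / total, "passage": passage_hits / total}


# Placeholder log, NOT the review's data: 20 queries, one of which cites
# the right document but the wrong paragraph.
log = [CitationCheck(f"q{i}", True, True) for i in range(19)]
log.append(CitationCheck("q19", True, False))

result = accuracy(log)
print(result)
```

Tracking the two rates separately makes it clear whether a miss sent you to the wrong file entirely or just a few paragraphs off.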

Long-Document Analysis

I was skeptical about how well it would handle 100+ page documents. Honestly? Impressed.

NotebookLM parsed my 127-page monograph without choking and answered detailed questions about arguments made in chapter 6 while cross-referencing data from the appendix. The responses weren’t perfect — it occasionally over-summarized when I wanted granularity — but it was consistently in the right ballpark.

Each source can hold up to 500,000 words on the free plan. That’s substantial. Most academic papers, reports, and book chapters fall well within that limit.

Quick verdict: Handles long PDFs well. Ask specific questions for best results — broad prompts get broad answers.

Mixed-Source Synthesis

Here’s where things get interesting — and where I noticed real improvement from earlier versions.

When I loaded a YouTube lecture alongside two PDFs and a Google Doc, NotebookLM correctly distinguished which claims came from the video versus the written sources. It handled conflicting information reasonably well, flagging when two sources disagreed rather than silently choosing one.

This surprised me. I expected the AI to blend everything into a single narrative and lose attribution. Instead, it maintained distinct source identities — which is exactly what a researcher needs.

Quick verdict: Mixed-media notebooks work. Source attribution across formats (PDF, YouTube, Docs) is solid.

Audio Overviews, Video Overviews, Slides, and Infographics

Audio Overviews remain NotebookLM’s signature feature. They generate a two-person podcast-style discussion of your sources — and they’re genuinely listenable. I’ve used them during commutes and while cooking. The voices sound natural. The pacing works. They don’t replace reading your sources, but they’re an excellent way to preview or review material.

Video Overviews come in two forms now. Standard Video Overviews produce a visual-plus-narration summary. Cinematic Video Overviews — new in March 2026 — go further, combining animated storytelling built with Gemini and Veo into short, immersive video explainers. Cinematic versions are available only on Google AI Pro and Ultra plans and require users to be 18+.

Slide decks have improved meaningfully. The new slide revision feature lets you iterate on individual slides with natural language feedback rather than regenerating the entire deck. And PPTX export means you can actually use these slides in real presentations — not just look at them inside NotebookLM.

Infographics are hit or miss. The Professional and Bento Grid styles produce clean, usable visuals. Kawaii is… fun if you’re making study materials for a younger audience. Sketch Note has potential but sometimes looks cluttered.

Quick verdict: Audio Overviews are excellent. Cinematic Video Overviews are impressive for Pro/Ultra users. Slides are now production-ready thanks to per-slide revisions. Infographics are uneven.

Study Tools: Flashcards, Quizzes, and Mind Maps

Flashcards and quizzes got a meaningful upgrade. Saving progress across sessions — with “Got it” and “Missed it” tracking — turns these from one-time novelties into usable study systems.

Mind maps are helpful for getting a visual overview of how concepts in your sources connect. They’re not as customizable as a dedicated mind-mapping tool, but for a quick “show me how these ideas relate to each other,” they work.

Personally, I find the flashcards most useful not for traditional studying but for interview prep and meeting prep — drilling key facts from 300-page documents before a client call.

Quick verdict: Study tools are now worth using seriously, especially flashcards with progress tracking. Mind maps are basic but functional.

Interface, Saved History, Mobile, and Workflow Speed

The three-panel layout (Sources, Chat, Studio) is well-organized. It’s not cluttered. Things are where you expect them.

Saved conversation history is a quiet win. Before this update, losing your chat thread when you closed a notebook was maddening. Now your conversation persists. For multi-day research projects, this is essential.

The NotebookLM mobile app works. It’s not a power-user experience — uploading sources is easier on desktop — but reading, chatting, and listening to Audio Overviews on mobile is smooth enough. Good for reviewing material during a commute.

Speed is generally good. Responses arrive in a few seconds for most queries. Generating Audio Overviews and Video Overviews takes longer, as expected.

Quick verdict: Clean interface, responsive on desktop and mobile. Saved chat history is the biggest workflow quality-of-life improvement.

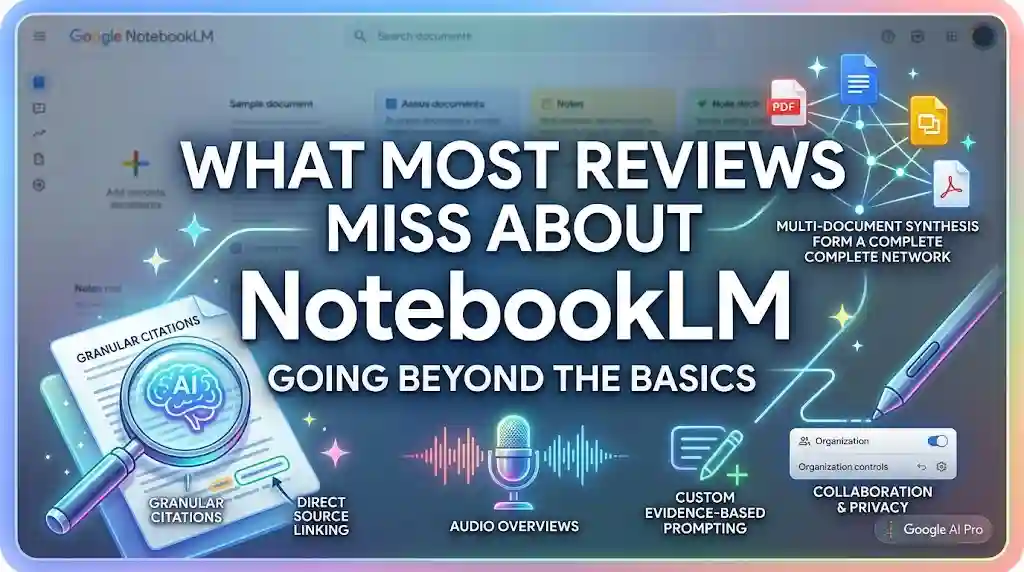

What Most Reviews Miss About NotebookLM

Other NotebookLM reviews tend to run through the feature list and move on. Here are the things I haven’t seen discussed well elsewhere:

1. No web access is both a strength and a limitation — at the same time.

Most reviews frame this as a weakness. It’s more nuanced than that. Because NotebookLM can’t reach outside your sources, it can’t hallucinate from the open web. Every answer is bounded. For legal research, client work, and academic writing, that constraint is a feature. But for early-stage research when you’re still gathering material? It’s a bottleneck.

2. Source grounding changes your prompting behavior.

You stop asking lazy questions fast. “Summarize this” gives you less than “Compare how authors A and B define X in chapters 3 and 7.” NotebookLM rewards evidence-based prompting — and that makes you a better researcher, not just a faster one. If you want to understand the broader discipline behind crafting effective AI queries, our guide on prompt engineering covers the fundamentals.

3. Slides and Audio Overviews are only as good as your source curation.

If you throw 50 mediocre sources into a notebook, your Audio Overview will be mediocre. If you upload 5 well-chosen, high-quality sources, the output is dramatically better. Here’s what nobody tells you: source curation matters more than prompt engineering in NotebookLM.

4. NotebookLM is not a personal knowledge base — and shouldn’t be.

Some users expect it to replace Notion or Obsidian. It doesn’t store notes over time. It doesn’t build a second brain. It’s a per-project analysis tool. Treat it that way and you’ll get much better results.

5. The Google Workspace and Google Workspace for Education integration deserves more attention.

Work and school accounts get stronger privacy protections — no human review, no model training on your data. If your organization is already on Google Workspace, NotebookLM effectively comes with enterprise-grade data handling that many reviews gloss over.

What NotebookLM Gets Right

Let me be direct about where this tool genuinely excels:

1. Source faithfulness. This is the single biggest differentiator. NotebookLM doesn’t hallucinate in the way general chatbots do because it’s architecturally constrained to your sources. Could the model still misinterpret a passage? Yes. But it can’t invent a source it never saw.

2. Research speed. What used to take me 2–3 hours — reading five documents, noting key points, cross-referencing claims — now takes 30–45 minutes. That’s not marketing copy. That’s my actual experience across multiple projects.

3. Audio Overviews. I’ll be upfront — I’m biased toward these because I’ve used them more than any other feature. They turn dense material into something you can absorb while walking. For busy professionals, that’s a genuine workflow upgrade.

4. Citation transparency. Every claim links back to a source. You can verify. You can push back. You can ask follow-up questions about a specific passage. This builds trust in a way that black-box AI tools simply don’t.

5. The March 2026 updates — collectively. Slide revisions, PPTX export, persistent chat, and artifact creation in chat don’t individually change the game. But together, they make NotebookLM feel like a complete research production environment rather than a clever demo.

Where NotebookLM Still Falls Short

No tool is perfect. Here’s where NotebookLM frustrates me — and where another tool might serve you better.

1. No web browsing or live research. NotebookLM only knows what you upload. If you need real-time web search, current data, or live fact-checking, you need Perplexity, ChatGPT with browsing, or Gemini with search grounding.

2. Collaboration is limited. You can share notebooks, but there’s no real-time co-editing, commenting, or team workflow layer. If your work is deeply collaborative, you’ll still need Google Docs or Notion alongside NotebookLM. For teams evaluating dedicated collaboration platforms, our roundup of the best team collaboration tools covers stronger options.

3. Source format limitations. While the supported formats have expanded — PDFs, Docs, Slides, websites, YouTube, audio, and now EPUB — there’s still no support for Excel/CSV files, databases, or raw data tables as sources. For analysts working with structured data, this is a gap.

4. Output quality varies by format. Audio Overviews are consistently strong. Slide decks are good (better now with revisions). Infographics are inconsistent. Mind maps are basic. The tool is not equally polished across all output types.

5. The “walled garden” trade-off. NotebookLM lives inside Google’s ecosystem. Your data sits on Google’s servers. Your outputs export to Google formats (or PPTX/PDF). If you’re committed to a non-Google stack, integration is limited.

I could be wrong about this one, but I suspect collaboration features are coming: Google has signaled interest in team workflows for Google Workspace customers. As of March 2026, though, NotebookLM is still primarily a single-user tool.

Quick verdict: Weakest on web access, collaboration, and structured data support. Strongest on source fidelity and output generation.

Pricing, Plans, and Value

NotebookLM’s pricing is bundled with Google’s AI subscription tiers. Here’s how it breaks down as of March 2026:

| Plan | Price | Notebooks | Sources / Notebook | Chat / Day | Audio Overviews / Day | Video Overviews / Day | Key Extras |
|---|---|---|---|---|---|---|---|
| Free | $0 | 100 | 50 | 50 | 3 | 3 | Core features, 500K words/source |
| Plus (via Workspace) | From $14/user/mo | 2× free | 2× free | 2× free | 2× free | 2× free | Workspace integration |
| Pro (via Google AI Pro) | $19.99/mo | 500 | 300 | 500 | 20 | 20 | Deep Research, slide decks, infographics, Cinematic Video Overviews, custom personas |
| Ultra (via Google AI Ultra) | $249.99/mo | Highest | 600 | 5,000 | 200 | 200 | 50× generations, watermark removal, 30TB storage, priority features |

Important: Google explicitly states that usage limits are subject to change and that feature availability may vary. The numbers above reflect official Help Center documentation as of March 2026. Always verify against Google’s current plans page for the latest.

Who should stay on free?

If you’re a student or casual researcher working with a handful of sources at a time, the free plan is surprisingly generous. 100 notebooks and 50 sources per notebook are more than most people need. The cap of three Audio Overviews and three Video Overviews per day is the real bottleneck: if you’re a heavy output user, you’ll feel the limit fast. The 50 chats per day cap also matters for intensive work sessions.

Who should upgrade to Pro?

Pro at $19.99/month is the sweet spot for serious users. You get 500 notebooks, 300 sources per notebook, 500 chats per day, 20 Audio Overviews and 20 Video Overviews per day, the ability to create slide decks and infographics, Cinematic Video Overviews, and Deep Research mode. If you’re a consultant, analyst, or grad student juggling multiple long-term research projects, the Pro tier pays for itself quickly.

When I tested this myself, the jump from Free to Pro felt like going from a library card to a research team. The difference in daily limits alone removes the friction of rationing your queries.

Who needs Ultra?

At $249.99/month, Ultra is for power users and teams who generate massive volumes of output daily. The 50× generation multiplier, 200 Audio Overviews, 200 Video Overviews, 5,000 daily chats, and watermark removal make sense for consultancies, content operations, and heavy academic research labs. For most individuals? Overkill.

US students 18+ can get the Pro plan at 50% off ($9.99/month for the first year) — which is genuinely a strong deal.

Quick verdict: Free is enough for light use. Pro at $19.99/mo is the value sweet spot. Ultra is for volume-heavy operations.
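
To sanity-check which tier fits your own usage, you can encode the limits from the pricing table and pick the cheapest plan that covers your daily needs. This is a rough sketch under a few assumptions: the `Plan` type and `cheapest_plan` function are hypothetical names, the numbers are copied from the table above and may have changed, feature gating (for example Cinematic Video Overviews) is ignored, the notebook count is left out because the Ultra figure is not stated as a number, and Plus is omitted because its price is only a starting figure.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Plan:
    name: str
    price_usd: float            # monthly price from the pricing table
    sources_per_notebook: int
    chats_per_day: int
    audio_per_day: int
    video_per_day: int


# Limits as listed in this review (March 2026); verify before relying on them.
PLANS = [
    Plan("Free", 0.00, 50, 50, 3, 3),
    Plan("Pro", 19.99, 300, 500, 20, 20),
    Plan("Ultra", 249.99, 600, 5000, 200, 200),
]


def cheapest_plan(sources: int, chats: int, audio: int, video: int) -> Optional[Plan]:
    """Return the cheapest plan whose limits cover the stated needs, or None."""
    fits = [
        p for p in PLANS
        if p.sources_per_notebook >= sources
        and p.chats_per_day >= chats
        and p.audio_per_day >= audio
        and p.video_per_day >= video
    ]
    return min(fits, key=lambda p: p.price_usd) if fits else None


# Two illustrative usage profiles (made-up numbers).
light = cheapest_plan(sources=10, chats=30, audio=2, video=0)
heavy = cheapest_plan(sources=80, chats=120, audio=5, video=1)
print(light.name, heavy.name)
```

With these made-up profiles, the light user stays on Free and the heavy user lands on Pro, which matches the verdict above. The function returns `None` when no listed plan covers the request.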

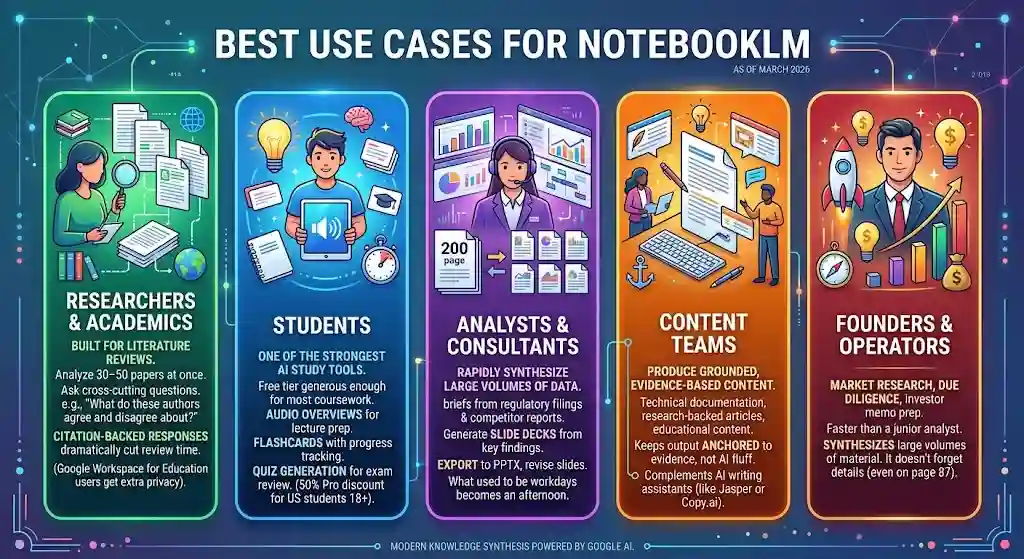

Best Use Cases for NotebookLM

Researchers and Academics

NotebookLM was practically built for literature reviews. Upload 30–50 papers into a notebook, then ask cross-cutting questions: “What do these authors agree and disagree about regarding X?” The citation-backed responses cut literature review time dramatically. Users on Google Workspace for Education get additional privacy protections — uploaded files are not used for model training and are not reviewed by humans.

Students

Between Audio Overviews for lecture prep, flashcards with progress tracking, and quiz generation for exam review, NotebookLM is one of the strongest AI study tools available. The free tier is generous enough for most coursework. The 50% Pro discount for US students 18+ makes the upgrade accessible.

Analysts and Consultants

Here’s a scenario I’ve lived. A client sends you a 200-page regulatory filing and three competitor reports. You need a briefing document by Friday. Upload everything to NotebookLM, ask targeted questions, generate a slide deck from the key findings, export to PPTX, and revise individual slides with feedback. What used to be two full workdays becomes an afternoon.

Content Teams

If your team produces content grounded in source material — technical documentation, research-backed articles, educational content — NotebookLM keeps your output anchored to the evidence rather than drifting into AI-generated fluff. For teams already using AI writing assistants, NotebookLM fills a different need than tools like Jasper or Copy.ai. See our roundup of the best AI tools for content creation to understand where each fits.

Founders and Operators

Market research, competitive analysis, due diligence, investor memo prep. The tool is faster than a junior analyst for synthesizing large volumes of source material — and it doesn’t forget what it read on page 87.

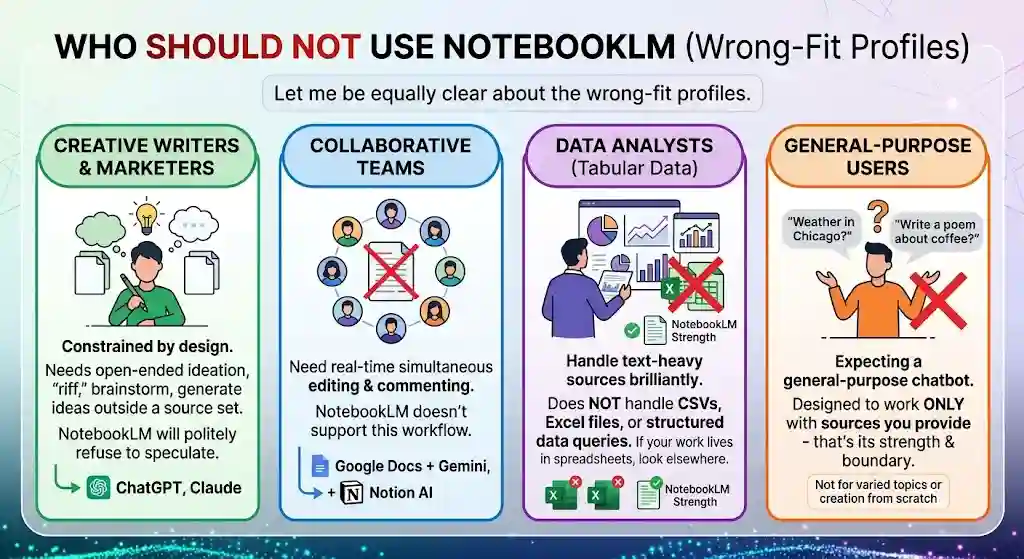

Who Should NOT Use NotebookLM

Let me be equally clear about the wrong-fit profiles.

1. Creative writers and marketers who need open-ended ideation. NotebookLM is constrained by design. If you need an AI to riff, brainstorm, or generate ideas outside a source set, use ChatGPT or Claude. NotebookLM will politely refuse to speculate.

2. Teams that need real-time collaboration. If your workflow depends on three people editing and commenting on the same document simultaneously, NotebookLM doesn’t support that. Stick with Google Docs + Gemini or Notion AI for collaborative work.

3. Data analysts working with spreadsheets and databases. NotebookLM handles text-heavy sources brilliantly. It doesn’t handle CSVs, Excel files, or structured data queries. If your work lives in spreadsheets, look at tools built for tabular data analysis.

4. Anyone expecting a general-purpose chatbot. If you want to ask “What’s the weather in Chicago?” or “Write me a poem about coffee,” NotebookLM isn’t the tool. It’s designed to work with sources you provide — that’s its strength and its boundary. For a broader look at what today’s best AI chatbots can do across different use cases, see our comparison.

Privacy, Trust, and Data Handling

This section matters more than most reviews acknowledge.

According to Google’s official documentation, data uploaded to NotebookLM is not used to train AI models unless you explicitly provide feedback. Your sources, queries, and the model’s responses remain private. If you delete a notebook, the associated data is deleted.

For Google Workspace and Google Workspace for Education accounts, protections are stronger: uploaded files, chats, and model outputs are not reviewed by human reviewers and are not used to improve generative AI models.

When you import files from Google Drive, NotebookLM creates a copy of each file. That copy is stored with your NotebookLM data, separate from your Drive. This is worth knowing because edits to your original Drive file won’t automatically update the NotebookLM copy.

Enterprise customers get additional protections: encryption at rest, customer-managed encryption keys (CMEKs), and existing data loss prevention controls can extend to NotebookLM.

What cautious users should still consider

Even with those protections, a few things are worth flagging:

- Your data still resides on Google’s servers. If your organization has strict data residency requirements, verify that NotebookLM’s data processing meets your compliance needs.

- The privacy policy applies to the current version. Policies can change. Read the current terms before uploading highly sensitive material.

- If you opt into providing feedback, Google may review the full context of that interaction — including your uploads and the model’s responses.

- Google may also process data to prevent fraud, abuse, and technical issues.

For most professionals and students, the privacy posture is strong. For regulated industries — legal, healthcare, defense — do your own compliance review before uploading sensitive client data.

Quick verdict: Privacy protections are among the strongest of any AI tool. Workspace and Education accounts get the most protection. Still verify compliance for regulated data.

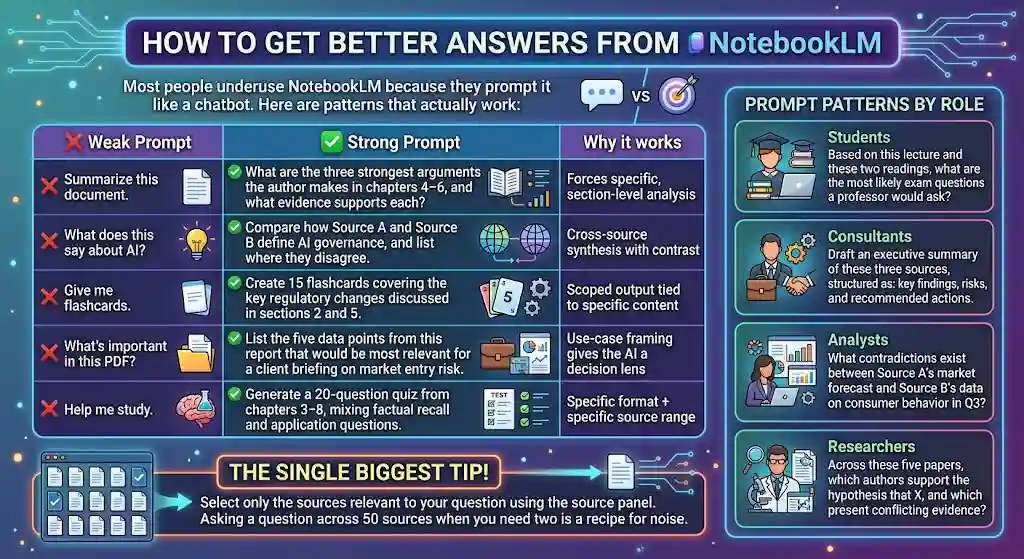

How to Get Better Answers from NotebookLM

Most people underuse NotebookLM because they prompt it like a chatbot. Here are patterns that actually work:

Ask this, not that

| ❌ Weak prompt | ✅ Strong prompt | Why it works |
|---|---|---|
| “Summarize this document.” | “What are the three strongest arguments the author makes in chapters 4–6, and what evidence supports each?” | Forces specific, section-level analysis |
| “What does this say about AI?” | “Compare how Source A and Source B define AI governance, and list where they disagree.” | Cross-source synthesis with contrast |
| “Give me flashcards.” | “Create 15 flashcards covering the key regulatory changes discussed in sections 2 and 5.” | Scoped output tied to specific content |
| “What’s important in this PDF?” | “List the five data points from this report that would be most relevant for a client briefing on market entry risk.” | Use-case framing gives the AI a decision lens |
| “Help me study.” | “Generate a 20-question quiz from chapters 3–8, mixing factual recall and application questions.” | Specific format + specific source range |

Prompt patterns by role

- Students: “Based on this lecture and these two readings, what are the most likely exam questions a professor would ask?”

- Consultants: “Draft an executive summary of these three sources, structured as: key findings, risks, and recommended actions.”

- Analysts: “What contradictions exist between Source A’s market forecast and Source B’s data on consumer behavior in Q3?”

- Researchers: “Across these five papers, which authors support the hypothesis that X, and which present conflicting evidence?”

The single biggest tip? Select only the sources relevant to your question using the source panel. Asking a question across 50 sources when you need two is a recipe for noise.
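If you reuse these patterns often, it can help to treat a strong prompt as a few named parts: the output you want, the content to focus on, the source range, and an optional use case. Here is a minimal Python sketch of that idea. The `build_prompt` function and its parameter names are hypothetical, made up for illustration; it only assembles text for you to paste into the chat box and does not call any NotebookLM API.

```python
def build_prompt(task: str, focus: str, scope: str, lens: str = "") -> str:
    """Assemble a scoped prompt from its parts.

    Hypothetical helper for drafting prompts; it does not call NotebookLM.
    """
    task, focus, scope, lens = (s.strip() for s in (task, focus, scope, lens))
    if not (task and focus and scope):
        raise ValueError("task, focus, and scope are all required")
    # Output format + content focus + source range, as in the table above.
    prompt = f"{task} covering {focus} in {scope}."
    if lens:
        # Optional use-case framing gives the answer a decision lens.
        prompt += f" Prioritize what matters for {lens}."
    return prompt


if __name__ == "__main__":
    print(build_prompt(
        task="Create 15 flashcards",
        focus="the key regulatory changes",
        scope="sections 2 and 5",
    ))
```

The helper refuses to build a prompt without a scope, which is the piece most weak prompts leave out.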

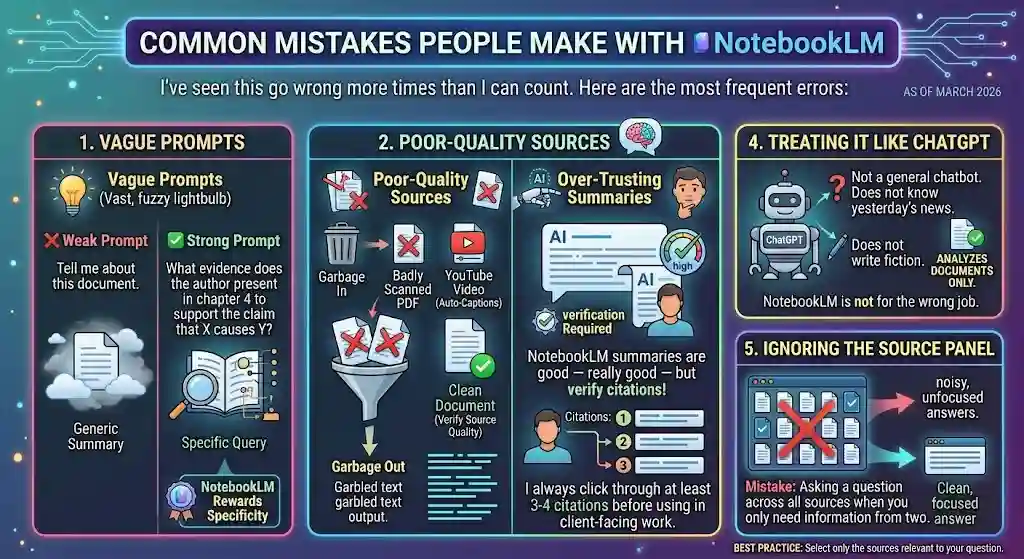

Common Mistakes People Make with NotebookLM

I’ve seen this go wrong more times than I can count. Here are the most frequent errors:

1. Using broad, vague prompts. “Tell me about this document” gets you a generic summary. “What evidence does the author present in chapter 4 to support the claim that X causes Y?” gets you something useful. NotebookLM rewards specificity.

2. Uploading poor-quality sources. Garbage in, garbage out. A badly scanned PDF or a YouTube video with auto-generated captions will produce worse results than a clean document. Take the extra five minutes to verify your source quality.

3. Over-trusting summaries without verification. NotebookLM’s summaries are good — really good — but they’re still AI-generated. I always click through at least 3–4 citations to verify accuracy before using any output in client-facing work.

4. Treating it like ChatGPT. NotebookLM is not a general chatbot. It doesn’t know what happened yesterday in the news. It doesn’t write fiction. It analyzes documents. If you use it for the wrong job, you’ll be disappointed — and it’s not the tool’s fault.

5. Ignoring the source panel. The left panel shows all your sources and lets you select which ones to include in your queries. Asking a question across all 50 sources when you only need information from two is a recipe for noisy, unfocused answers.

Quick Scorecard

| Category | Score (out of 10) | Notes |
|---|---|---|
| Accuracy | 9 | Source grounding keeps hallucination rates very low |
| Ease of use | 8 | Clean interface, but source management has a learning curve |
| Output quality | 8 | Audio Overviews are excellent; infographics are uneven |
| Research value | 9.5 | Best-in-class for document analysis and synthesis |
| Pricing / value | 8 | Free tier is generous; Pro at $19.99/mo is reasonable |
| Collaboration | 5 | Notebook sharing exists, but no real-time teamwork |
| Overall verdict | 8.5 | The strongest source-grounded AI research tool available |

Frequently Asked Questions

What is NotebookLM?

NotebookLM is a source-grounded AI research tool made by Google that analyzes only your uploaded documents and cites every answer. You can upload PDFs, Google Docs, Google Slides, websites, YouTube videos, audio files, and EPUB files — then ask questions, generate Audio Overviews, create slide decks, build mind maps, or produce flashcards and quizzes, all grounded in your sources with inline citations.

Is NotebookLM free?

Yes — NotebookLM offers a free plan with 100 notebooks, 50 sources per notebook, 50 chats per day, 3 Audio Overviews per day, and 3 Video Overviews per day. Paid tiers (Plus, Pro, and Ultra) expand these limits and add features like Deep Research, Cinematic Video Overviews, slide decks, and infographics. Google notes that all limits are subject to change.

Is NotebookLM worth it in 2026?

Yes, if your work involves reading, analyzing, or synthesizing documents. The March 2026 updates — slide revisions, PPTX export, saved chat history, and Cinematic Video Overviews — made NotebookLM meaningfully more useful for production workflows. The free plan covers light use; Pro at $19.99/month is the value sweet spot for serious researchers, students, analysts, and consultants.

How is NotebookLM different from ChatGPT?

NotebookLM only answers from documents you upload and cites the exact source passage for every claim. ChatGPT draws from pre-trained knowledge and optional web browsing, giving broader but less verifiable answers. Choose NotebookLM for source-faithful document analysis; choose ChatGPT for creative tasks, coding, and open-ended general knowledge.

How is NotebookLM different from Gemini?

NotebookLM is a specialized research tool that works exclusively with your uploaded sources. Gemini is Google’s general-purpose AI assistant with web search and Google Workspace integration. Gemini is better for real-time information; NotebookLM is better for deep, evidence-based analysis of specific documents you provide.

Can NotebookLM summarize PDFs?

Yes. Upload any PDF of up to 500,000 words, which is the per-source limit. NotebookLM generates summaries, answers questions, and creates Audio Overviews, slide decks, mind maps, flashcards, and quizzes, all grounded in the uploaded PDF content with inline citations you can click to verify.

Can NotebookLM summarize YouTube videos?

Yes. Paste a YouTube URL as a source, and NotebookLM processes the video’s transcript. You can ask questions, generate summaries, and create study materials grounded in the video content. Transcript quality affects output quality — auto-generated captions produce noisier results.

Does NotebookLM cite its sources?

Yes. Every response includes numbered inline citations linking to the exact passage in your uploaded sources. Click any citation to see the referenced text highlighted in context. This is NotebookLM’s core differentiator from general-purpose chatbots.

Is NotebookLM accurate?

NotebookLM’s source-grounded architecture keeps hallucination rates well below those of general-purpose chatbots. In my testing, citation accuracy exceeded 90% across 20 factual queries. It can still misinterpret or over-generalize source material, so always verify critical claims by clicking through the citations.

Is NotebookLM safe for private documents?

Google states that uploaded data is not used to train AI models unless you provide feedback. For Google Workspace and Workspace for Education accounts, protections are stronger: no human review, no model training on your content. For regulated industries, independently verify compliance before uploading sensitive client or patient data. See NotebookLM’s privacy documentation for current details.

What are the limits of NotebookLM?

Free plan: 100 notebooks, 50 sources per notebook, 500,000 words per source, 50 chats per day, 3 Audio Overviews per day, 3 Video Overviews per day. Pro: 500 notebooks, 300 sources per notebook, 500 chats per day, 20 Audio/Video Overviews per day. Ultra: the highest limits, including 600 sources per notebook, 5,000 chats per day, and 200 Audio/Video Overviews per day. Google states that all usage limits are subject to change.
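To see which tier a given workload needs, you can encode the figures above and check them in a few lines. The sketch below is illustrative only: the `PLAN_LIMITS` table and the `smallest_plan` function are hypothetical names, the numbers are copied from this answer rather than pulled from Google, and it assumes the Pro and Ultra source and chat figures are per notebook and per day. Because limits are subject to change, treat the values as a snapshot.

```python
from typing import Optional

# Figures copied from the FAQ answer above; a snapshot, not an official source.
# Plans are listed from smallest to largest.
PLAN_LIMITS = {
    "Free": {"sources_per_notebook": 50, "chats_per_day": 50},
    "Pro": {"sources_per_notebook": 300, "chats_per_day": 500},
    "Ultra": {"sources_per_notebook": 600, "chats_per_day": 5000},
}


def smallest_plan(sources: int, chats: int) -> Optional[str]:
    """Return the first listed plan whose limits cover the workload, or None."""
    for name, limits in PLAN_LIMITS.items():
        fits_sources = sources <= limits["sources_per_notebook"]
        fits_chats = chats <= limits["chats_per_day"]
        if fits_sources and fits_chats:
            return name
    return None  # Workload exceeds every plan listed here.


if __name__ == "__main__":
    # A notebook with 120 sources and about 30 questions a day.
    print(smallest_plan(sources=120, chats=30))
```

A 120-source notebook is past the Free plan's 50-source cap, so the check lands on Pro even though the chat volume alone would fit the free tier.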

What is new in NotebookLM in 2026?

The March 20, 2026 update introduced slide revisions (prompt-based per-slide editing), Cinematic Video Overviews (animated, narrated video explainers via Gemini + Veo), ten new infographic styles, improved flashcards and quizzes with progress saving, EPUB support, PPTX export, saved conversation history, and artifact creation in chat. Source: Google Workspace Updates.

What are the best NotebookLM alternatives?

The best alternative depends on your job: Perplexity for web-based research with citations, Claude for long-document reasoning and nuanced writing, ChatGPT for creative and general-purpose tasks, Gemini for Google Workspace integration with web grounding, SciSpace for academic paper analysis, and Anara for research-specific workflows.

Can NotebookLM create slide decks?

Yes. NotebookLM generates slide decks grounded in your sources. The March 2026 update added per-slide revision via natural-language feedback and PPTX export. Available on Pro and Ultra plans.

Can NotebookLM create flashcards and quizzes?

Yes. NotebookLM generates flashcards and quizzes from your uploaded sources. The March 2026 update added session-saving and “Got it” / “Missed it” tracking, making these tools practical for sustained study across multiple sessions.

How does NotebookLM handle output language?

NotebookLM can generate responses and outputs in multiple languages. The output language generally follows the language of your prompt or your uploaded sources. Results are strongest in English but functional in other major languages.

The Bottom Line

Here’s my prediction: by the end of 2026, source-grounded AI tools will become the default for professional research, and general chatbots will be what people use for casual questions — not serious work. NotebookLM is where that shift starts.

It’s not perfect. The collaboration story is thin. The infographic generator needs polish. And the “no web access” constraint, while architecturally smart, means you still need other tools for live research.

But if you evaluate any AI tool by a single question — “Can I trust this output?” — NotebookLM gives you the most defensible answer on the market. Every claim cites your source. Every response stays within your evidence. Every output can be verified.

Is NotebookLM worth trying in 2026? Absolutely. Start with the free tier. Upload something you’re actually working on — not a test document, but a real project. Ask the hard questions. See how fast you get answers you can actually use.

That’s how you’ll know.