Make is one of the most capable no-code automation tools for building multi-step workflows, especially when you need branching (routers), webhooks, data mapping, and error handling. But it’s not the easiest platform to learn, and the operations-based pricing can surprise you if you build inefficient scenarios.

In this Make Review, I’m evaluating Make.com the same way I’d assess it for a client: what it’s best at, where it breaks down, what it realistically costs, and when you should choose Zapier, n8n, Power Automate, Pabbly, or IFTTT instead. You’ll get clear decision rules, practical use cases, and implementation tips that reduce “automation debt.”

Quick Summary – Make.com Review

- Visual builder superiority: Make’s interface shows your entire automation flow at once, making complex branching and error paths easier to understand than linear tools

- Operations-based pricing: You pay per action executed, not per workflow—this can be cheaper or more expensive depending on your automation patterns

- Steep but worthwhile learning curve: Expect 3–5 hours to build your first meaningful scenario; after that, productivity accelerates significantly

- Data handling wins: Iterators, aggregators, and array functions let you process bulk data that would require custom code elsewhere

- Governance gaps: Limited role-based controls and audit trails compared to enterprise platforms like Power Automate

Quick Scorecard

| Criterion | Rating | Notes |
|---|---|---|
| Ease of Use | 6.5/10 | Powerful once learned, but intimidating initially |
| Flexibility | 9/10 | Handles complex logic Zapier can’t touch |
| Value | 8/10 | Competitive for heavy users; watch operation counts |
| Integrations | 8/10 | 1,500+ apps; HTTP module fills gaps |
| Reliability | 7.5/10 | Stable but requires manual error handling setup |

What is Make.com? (Make Review context)

Make.com (formerly Integromat, rebranded in 2022) is a visual workflow automation platform that connects apps, moves data, and executes business logic without code. It sits in the no-code automation category alongside Zapier and IFTTT, but with a distinctly different philosophy: instead of hiding complexity, Make exposes it through a visual canvas where you can see every decision point, data transformation, and error path.

Unlike Zapier’s linear “trigger → action → action” model, Make uses scenarios built from modules (individual app actions) connected by routers (branching logic), filters, and iterators. This means you can build workflows that say “if the email attachment is a PDF and the sender is in our CRM, parse it with AI, otherwise send to a review queue”—all in one scenario instead of chaining multiple automations together.

The pricing model reflects this difference: Make charges per operation (each module execution), while Zapier charges per task (each successful action step). The two units are similar in size, but an operation typically costs far less than a task, so workflows with many steps or multiple branches are often cheaper in Make. For simple one-path automations the two models cost about the same, and frequent polling checks in Make can tip the balance toward Zapier.

My evaluation criteria

I evaluated Make.com by building production automations over a three-month period, focusing on scenarios that stress-test the platform’s capabilities rather than simple “add row to spreadsheet” demos.

What I looked for:

- Speed to build: How long does it take to go from idea to working automation for someone with moderate technical comfort?

- Debugging clarity: When something breaks at 2am, can I figure out why without a computer science degree?

- Error handling: Does the platform help me build resilient workflows, or do I need to anticipate every failure mode myself?

- Scalability: What happens when a workflow that processes 50 items daily suddenly needs to handle 500?

- Pricing transparency: Can I predict my monthly bill, or will I get surprised?

What I tested:

- Lead capture workflow: Typeform submission → Clearbit enrichment → conditional routing to HubSpot (hot leads) or Airtable (nurture queue) → Slack notification with custom formatting

- E-commerce operations: Shopify order webhook → inventory check in Google Sheets → conditional email (in-stock vs backorder) → update Notion dashboard

- Content operations: Airtable content calendar → check Notion for draft status → send Slack reminders to writers → aggregate weekly metrics

- AI-assisted ticket routing: Gmail parser → OpenAI classification → route to different Slack channels based on urgency → log to database

Each scenario involved branching logic, data transformations, error handling, and at least one HTTP/API call. I intentionally avoided the simplest use cases to see where Make’s strengths and limitations emerge.

Make.com features explained (with practical examples)

Visual scenario builder + modules

Make’s canvas-based builder is its defining feature. You drag modules (app actions) onto a canvas and connect them with lines that represent data flow. Each module is a single operation—”search for a contact,” “add a row,” “send an HTTP request”—and clicking a module shows its configuration panel on the right.

What surprised me: the visual approach actually makes complex automations easier to debug. When a scenario fails, I can see exactly which module errored and trace the data path backwards. With Zapier’s vertical list, I often lose track of where I am in a 15-step automation.

The tradeoff: this power comes with visual clutter. A scenario with 20+ modules and multiple branches can feel overwhelming until you learn to collapse sections and use notes.

Routers, filters, branching logic

Routers let you split a workflow into multiple paths based on conditions. Example: a new customer order might route to “high-value customer flow” if order total exceeds $500, and “standard fulfillment flow” otherwise. Both paths can run in parallel, or you can set conditions so only one executes.

Filters sit between modules and stop execution if conditions aren’t met. They’re simpler than routers: just a gate that says “only proceed if X is true.” I use filters to prevent unnecessary module runs (and wasted operations) when data doesn’t meet criteria.

Practical example: In my lead routing workflow, a router checks the enriched lead score. Scores above 80 route to “hot lead path” (immediate CRM entry + Slack ping to sales), scores 50–80 go to “warm lead path” (Airtable nurture list), and everything else hits a filter that stops execution. This prevents cluttering my CRM with low-quality leads.
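To make the routing rule concrete, here is a minimal Python sketch of the same decision. It is not how Make represents routers internally; `route_lead` and the branch names are hypothetical, and the thresholds are simply the ones from my workflow.

```python
from typing import Optional


def route_lead(score: float) -> Optional[str]:
    """Return the branch a lead takes, or None when the filter stops it."""
    if score > 80:
        return "hot"   # immediate CRM entry + Slack ping to sales
    if score >= 50:
        return "warm"  # Airtable nurture list
    return None        # filtered out: no further modules run


if __name__ == "__main__":
    for score in (92, 80, 35):
        print(score, route_lead(score))
```

Writing the rule down like this before opening the canvas helps catch boundary questions early, such as which path a score of exactly 80 should take.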

Iterators/aggregators + data mapping (why it matters)

This is where Make pulls away from simpler tools. An iterator takes an array (list) of items and processes each one individually. An aggregator does the opposite: collects multiple items and combines them.

Why it matters: Suppose you receive a webhook with 50 new orders. Zapier would require 50 separate automation runs (50 tasks). Make’s iterator processes all 50 in a single scenario execution, using 50 operations but only 1 trigger operation. For high-volume workflows, this dramatically reduces costs and complexity.

Aggregators let you do things like “collect all failed payment attempts from the last hour, format them into a table, and send one summary Slack message” instead of 47 individual notifications.
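If the iterator/aggregator idea feels abstract, the same pattern in plain Python looks roughly like this. It is only an analogy under assumed data: the event fields (`status`, `customer`, `amount`) and the function name are hypothetical, not Make's actual bundle format.

```python
from typing import Dict, Iterable, List


def summarize_failed_payments(events: Iterable[Dict]) -> str:
    """Iterate over events, keep the failures, and aggregate one message."""
    # "Iterator" step: look at each item individually.
    failed: List[Dict] = [e for e in events if e.get("status") == "failed"]
    # "Aggregator" step: combine the items into a single output.
    lines = [f"- {e['customer']}: ${e['amount']:.2f}" for e in failed]
    header = f"{len(failed)} failed payment(s) in the last hour"
    return "\n".join([header, *lines])


if __name__ == "__main__":
    sample = [
        {"customer": "Ada", "amount": 10, "status": "failed"},
        {"customer": "Bob", "amount": 25.5, "status": "ok"},
    ]
    print(summarize_failed_payments(sample))
```

The point is the shape: many items in, one summary out, instead of one notification per item.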

The learning curve here is real. Understanding when to use iterators vs when to process arrays directly took me several failed attempts. Make’s documentation explains the mechanics but doesn’t always clarify when each approach is appropriate.

Webhooks + API connections

Make treats webhooks as first-class citizens. You can instantly generate a webhook URL to receive data from any service, and the data becomes available to your scenario immediately. The HTTP module lets you make GET, POST, PUT, DELETE requests to any API with full control over headers, authentication, and body formatting.

I use webhooks extensively for event-driven automations. When a customer completes a specific action in our app, we send a webhook to Make, which then orchestrates downstream actions—updating our CRM, sending personalized emails, logging analytics, and triggering follow-up sequences. This feels more reliable than polling-based triggers that check for changes every 15 minutes.

Gotcha: Webhook-triggered scenarios don’t automatically retry on failure. You need to add error handling modules to catch failures and either retry or log them. This tripped me up initially when a downstream API was temporarily unavailable, and I lost incoming webhook data.
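The logic I wish I had built from day one is roughly the following: retry a few times with a growing delay, and if the downstream call still fails, park the payload somewhere instead of dropping it. This is a Python sketch of the idea, not Make configuration; `deliver_with_retry`, `handle_webhook`, and the `dead_letters` list are hypothetical names, and in Make you would build the equivalent with error handler routes and a data store or sheet.

```python
import time
from typing import Any, Callable, Dict, List


def deliver_with_retry(
    send: Callable[[Dict], Any],
    payload: Dict,
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], Any] = time.sleep,
) -> bool:
    """Call send(payload) up to `attempts` times with exponential backoff."""
    for attempt in range(attempts):
        try:
            send(payload)
            return True
        except Exception:
            # Back off before the next try, but not after the final one.
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    return False


def handle_webhook(
    send: Callable[[Dict], Any],
    payload: Dict,
    dead_letters: List[Dict],
    **retry_options: Any,
) -> bool:
    """Deliver a payload; keep it in dead_letters if every attempt fails."""
    delivered = deliver_with_retry(send, payload, **retry_options)
    if not delivered:
        dead_letters.append(payload)  # nothing is lost; replay it later
    return delivered
```

The important design choice is the last branch: a failed delivery ends in a stored payload you can replay, not in silence.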

Scheduling & triggers

Scenarios can run on schedules (every hour, daily at 9am, etc.) or in response to triggers (new row, new email, webhook received). The scheduling interface is straightforward, though I wish there were more granular options—”every 10 minutes during business hours only” requires workarounds.

Most app modules support both polling triggers (Make checks for new data) and instant triggers (the app pushes notifications to Make). Instant triggers are dramatically faster but not available for every integration.

Error handling, retries, and logging

Make provides error handler routes—special paths that only execute when a module fails. You can configure them to send alerts, log errors to a database, or attempt alternative actions. However, setting these up is entirely manual. The platform doesn’t prompt you to add error handling, so beginners often skip it and only discover the need after a production failure.

Execution logs show every module’s input, output, and processing time. This is invaluable for debugging, but logs are only retained for a limited period depending on your plan. For critical workflows, I pipe execution data to an external logging service.

What I’d do differently: I now add error handlers as I build, not after deployment. The 10 extra minutes upfront has saved hours of troubleshooting.

Templates, versioning, collaboration considerations

Make offers templates for common workflows, though they’re hit-or-miss. Some are genuinely useful starting points; others feel like marketing demos that need significant modification for real use.

Scenario versioning exists but is limited—you can duplicate scenarios to create “versions,” but there’s no Git-like diffing or rollback. For teams, this means establishing your own version control convention (I use date-stamped scenario names).

Collaboration features are basic. You can share scenarios with team members, but there’s no granular permission control (viewer vs editor roles), no approval workflows, and no audit trail of who changed what. For agencies managing client automations, this creates governance headaches.

Integrations quality (and what to watch out for)

Make advertises 1,500+ app integrations, and in practice, the quality varies significantly.

Tier 1 integrations (Google Workspace, Airtable, Notion, Slack, HubSpot, Shopify, Salesforce): These have comprehensive module coverage, reliable authentication, and handle edge cases well. I’ve had virtually zero issues with these.

Tier 2 integrations (smaller SaaS tools, niche apps): Basic operations work fine, but advanced features often require the HTTP module to call the API directly. Documentation is sometimes thin.

Tier 3 (the long tail): Some integrations exist in name only—they might have 3–4 modules that cover 20% of the API. You’ll spend more time building custom HTTP requests than using pre-built modules.

When you’ll need the HTTP module: More often than you’d think. Even with “supported” apps, I’ve needed custom API calls for operations like bulk updates, advanced search queries, or accessing beta features. The HTTP module is powerful but assumes you can read API documentation and construct requests. If that sounds intimidating, you’ll hit a ceiling faster with Make than with Zapier.

Rate limits & authentication friction: Most Make modules handle OAuth smoothly, but I’ve encountered persistent authentication issues with Google Sheets (tokens expiring randomly) and Shopify (requiring re-authorization after platform updates). API rate limits are your responsibility to manage—Make doesn’t automatically throttle requests to stay within provider limits, so high-volume scenarios need manual rate-limiting logic.
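For readers unsure what “manual rate-limiting logic” means in practice, the core idea is just enforcing a minimum gap between calls. Here is a small Python sketch of that idea; the `Throttle` class is hypothetical, and inside Make you would approximate it with a delay step between requests rather than code.

```python
import time
from typing import Callable, Optional


class Throttle:
    """Enforce a minimum interval between consecutive calls."""

    def __init__(
        self,
        max_per_second: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], object] = time.sleep,
    ) -> None:
        self.min_interval = 1.0 / max_per_second
        self._clock = clock
        self._sleep = sleep
        self._next_allowed: Optional[float] = None

    def wait(self) -> float:
        """Block until the next call is allowed; return the seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._next_allowed is not None:
            delay = max(0.0, self._next_allowed - now)
            if delay:
                self._sleep(delay)
        # The following call may start one interval after this one.
        self._next_allowed = now + delay + self.min_interval
        return delay
```

Calling `throttle.wait()` before each API request keeps a burst of items from exceeding the provider's documented limit, whatever that limit happens to be.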

Maintenance reality: Integrations break when APIs change. Over three months, I’ve had two scenarios break due to API deprecations—one for a marketing tool that changed their webhook payload format, another for a CRM that restricted API access on lower-tier plans. Make notifies you of errors, but fixing them requires you to understand what changed and update your scenario accordingly.

Make Pricing

Make’s pricing model confuses people initially, but it’s actually more transparent than most competitors once you understand operations vs scenarios.

How it works:

- Operations: Each module execution costs one operation. Triggers usually cost one operation per check or per received item.

- Scenarios: The number of active automations you can have. Even paused scenarios count toward your limit.

- Data transfer: Included in plans; you won’t hit limits unless processing massive files.

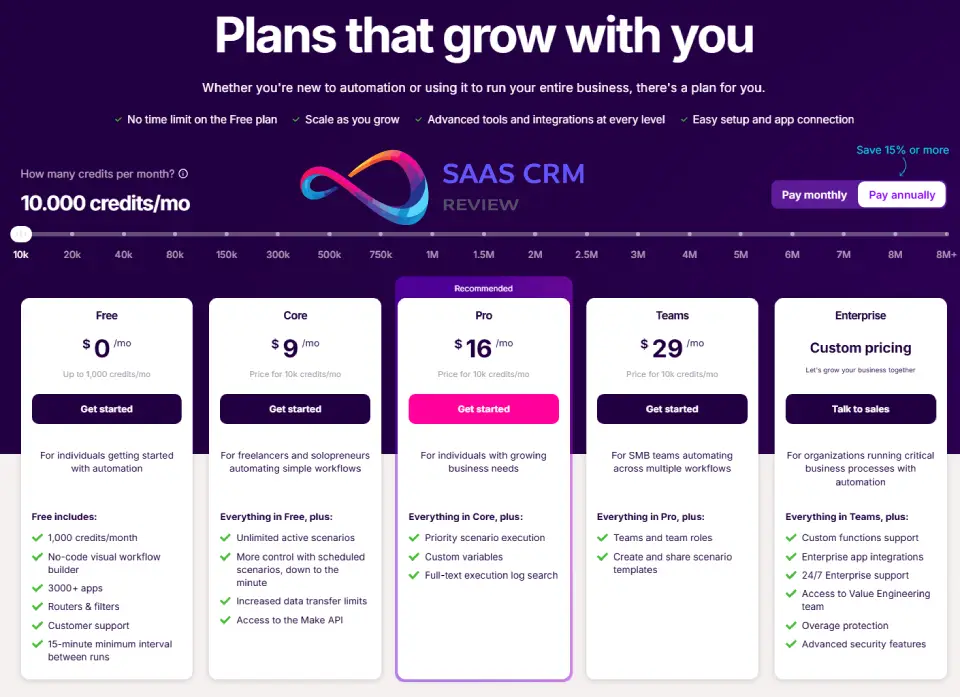

Plans (as of early 2026):

- Free: 1,000 operations/month, 2 active scenarios—genuinely useful for experimenting

- Core ($9/month): 10,000 operations, 5 active scenarios

- Pro ($16/month): 10,000 operations, unlimited scenarios, priority support

- Teams ($29/month): 10,000 operations, team features, multiple users

- Enterprise: Custom pricing, governance features, dedicated support

Important: Operations scale with add-on packs. If you exceed your plan’s operations, you can buy additional operations at $1 per 1,000 operations, which is reasonable.

Who the free plan is for: Solo users testing ideas or running ultra-lightweight automations (one daily report, a simple webhook handler). It breaks the moment you need multiple active scenarios or run anything high-volume.

Realistic monthly cost examples

Persona 1: Solo creator (light automation)

- 3 scenarios: Lead capture form → CRM, content calendar reminders, weekly report generator

- ~5,000 operations/month (mostly scheduled checks with occasional executions)

- Cost: Core ($9/month); three scenarios at ~5,000 operations exceed the free plan’s limits of 2 active scenarios and 1,000 operations

Persona 2: Small marketing team (operations automation)

- 12 scenarios: Multi-step lead routing, e-commerce fulfillment, content workflow, social media scheduling, analytics reporting, CRM hygiene

- ~40,000 operations/month (mix of webhooks, scheduled tasks, polling triggers)

- Cost: Pro ($16) + 3 operation packs (~$30/month) = ~$46/month

Persona 3: Agency (client automation management)

- 50+ scenarios across multiple clients: various lead gen, CRM integrations, reporting dashboards, custom client workflows

- ~120,000 operations/month (high volume across multiple clients)

- Cost: Teams ($29) + 11 operation packs (~$110) = ~$139/month, or Enterprise for governance
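The persona estimates follow simple arithmetic, sketched here in Python so you can plug in your own volume. The plan prices and the $1 per 1,000 overage rate are the figures quoted in this review; treating add-ons as 10,000-operation packs is my assumption, and `monthly_cost` is a hypothetical helper, so check current pricing before budgeting.

```python
import math

# Figures quoted in this review; verify against current pricing.
PLAN_PRICE = {"Core": 9, "Pro": 16, "Teams": 29}  # USD per month
INCLUDED_OPS = 10_000                             # operations included per plan
PACK_OPS = 10_000                                 # assumed add-on pack size
PACK_PRICE = 10                                   # USD, i.e. $1 per 1,000 operations


def monthly_cost(plan: str, operations: int) -> int:
    """Estimate the monthly bill for a plan at a given operation volume."""
    extra = max(0, operations - INCLUDED_OPS)
    packs = math.ceil(extra / PACK_OPS)  # packs are bought whole
    return PLAN_PRICE[plan] + packs * PACK_PRICE


if __name__ == "__main__":
    print(monthly_cost("Pro", 40_000))     # small marketing team persona
    print(monthly_cost("Teams", 120_000))  # agency persona
```

Under those assumptions the function reproduces the ~$46 and ~$139 figures for the marketing team and agency personas.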

Avoid surprise costs checklist

- Count your iterators: If you process arrays with 100 items, that’s 100+ operations per run, not just one

- Watch polling triggers: Modules that check for new items every 15 minutes use operations even when nothing is new (though Make has optimized this)

- Test execution frequency: A scenario that runs every 5 minutes executes 8,640 times per month—multiply by your module count

- Monitor error retries: Retry logic you add in error handlers consumes additional operations on every attempt

- Account for seasonal spikes: E-commerce workflows might 3x during holidays

Make’s dashboard shows real-time operation usage, which I check weekly to avoid surprises. Setting up usage alerts is manual but recommended.
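The execution-frequency math from the checklist is easy to sanity-check yourself. This Python sketch is a worst-case estimate that assumes every run executes every module and a 30-day month; `monthly_operations` is a hypothetical helper, not a Make feature.

```python
def monthly_operations(interval_minutes: int, modules_per_run: int, days: int = 30) -> int:
    """Worst-case operations per month for a scenario on a fixed schedule."""
    runs_per_day = (24 * 60) // interval_minutes
    return runs_per_day * days * modules_per_run


if __name__ == "__main__":
    print(monthly_operations(5, 1))   # runs per month at a 5-minute interval
    print(monthly_operations(5, 4))   # same schedule, four modules per run
    print(monthly_operations(15, 4))  # relaxing the schedule to 15 minutes
```

A 5-minute schedule gives 8,640 runs per month, so even a four-module scenario can reach 34,560 operations if every module runs each time. Lengthening the interval is usually the cheapest optimization available.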

Make Pros and Cons

Pros

Visual clarity for complex workflows: Once you adjust to the canvas interface, understanding a 20-step automation with multiple branches is dramatically easier than scrolling through Zapier’s vertical list. I can trace data flow at a glance.

Superior data handling: Iterators, aggregators, and built-in array functions let you manipulate data that would require custom code elsewhere. Processing bulk data, transforming JSON, and combining multiple data sources is genuinely easier in Make.

Branching and conditional logic: Routers and filters enable sophisticated decision trees within a single scenario. I’ve replaced 5 separate Zapier automations with one Make scenario that handles all cases.

Value for complex automations: If your workflows involve branching, iteration, or data transformation, Make’s operation-based pricing often beats Zapier’s per-task model. My lead routing scenario uses 8–12 operations per lead in Make vs 5–7 tasks per lead in Zapier, and an operation costs roughly a tenth of what a task does.

Webhooks as first-class citizens: Instant webhook URLs, flexible payload handling, and the ability to respond synchronously make event-driven architecture actually feasible.

HTTP module flexibility: When an app integration falls short, the HTTP module gives you full API access. This is liberating for power users.

Cons

Learning curve is real: Expect several hours of confusion before things click. The documentation explains “what” but not always “why” or “when.” I wasted time building overcomplicated solutions before understanding simpler approaches.

Governance gaps: No granular role-based access control, limited audit trails, basic team collaboration features. For agencies or enterprises, this creates manual workarounds and compliance concerns.

Error handling is manual: The platform doesn’t prompt you to handle failures. Beginners ship brittle workflows that break silently, discovering issues only when someone asks “why didn’t this run?”

Maintenance burden: You’re responsible for monitoring API changes, managing rate limits, and keeping scenarios updated. Make doesn’t auto-fix broken integrations when APIs evolve.

Visual complexity at scale: A scenario with 30+ modules becomes visually cluttered. While you can add notes and organize modules, it never feels as clean as starting fresh.

Documentation inconsistency: Some features have excellent guides; others have sparse technical references that assume significant prior knowledge. Community forums fill gaps, but hunting for answers slows development.

Hidden tradeoffs

Scenario duplication as version control is clunky: No proper versioning means you’re duplicating scenarios to preserve “working versions” before making changes. This quickly clutters your workspace.

Team handoffs are painful: Without detailed comments (which you must manually add), another team member looking at your scenario will struggle to understand the logic. I now enforce a documentation standard, but Make doesn’t encourage this.

Monitoring is reactive, not proactive: You get notified when a scenario fails, but there’s no built-in way to detect degraded performance (slower execution times, increasing error rates before total failure). I’ve added external monitoring for critical workflows.

The “free trial” trap: You can build scenarios on the free plan, but they might not be viable under the 1,000 operation limit. Testing realistic volumes requires a paid plan or careful operation counting.

Make vs Zapier vs n8n vs Power Automate (comparison)

| Factor | Make | Zapier | n8n | Power Automate |
|---|---|---|---|---|
| Best for | Complex branching, data transformation, power users | Simple automations, non-technical users | Self-hosted control, developer-friendly | Microsoft-centric enterprises |
| Learning curve | Moderate-steep (3–5 hours to proficiency) | Gentle (30 min to first automation) | Steep (requires technical comfort) | Moderate (familiar to Microsoft users) |
| Hosting | Cloud only | Cloud only | Self-hosted or cloud | Cloud only |
| Flexibility | High (visual logic, iterators, HTTP module) | Medium (multi-step, limited branching) | Very high (JavaScript, full code access) | Medium-high (within Microsoft ecosystem) |
| Cost model | Operations (~$0.001 per operation) | Tasks (~$0.01 per task for complex workflows) | Free (self-hosted) or $20/month (cloud) | Included in Microsoft 365 or separate license |
| Integrations | 1,500+ apps | 5,000+ apps | 400+ nodes (expandable via code) | Deep Microsoft + 1,000+ connectors |
| Enterprise controls | Basic (limited RBAC, no SSO on lower tiers) | Good (team features, audit logs) | Full control (self-hosted) | Excellent (AD integration, DLP, compliance) |
| Governance | Weak | Moderate | DIY (you implement) | Strong |
| Data residency | Limited control | Limited control | Full control (self-hosted) | Configurable (Microsoft cloud regions) |

- Non-technical marketer who wants quick wins: Choose Zapier—the interface is friendlier, the template marketplace is better, and simple use cases are faster to build.

- Power user who needs conditional logic and data manipulation but wants cloud hosting: Choose Make—you’ll learn faster than n8n and get more flexibility than Zapier.

- Developer or technical team that values control and wants to avoid vendor lock-in: Choose n8n—self-hosting gives you full control, and JavaScript access removes most limitations. Be prepared to maintain infrastructure.

- Enterprise team deep in Microsoft 365 needing governance and compliance: Choose Power Automate—native integration with Azure AD, SharePoint, Teams, and enterprise DLP policies makes this the pragmatic choice despite the learning curve.

- Agency managing client automations at scale: Choose Make for technical flexibility or Zapier for client-friendly interfaces, depending on your team’s skill level and client needs. Both have limitations; neither is perfect for multi-tenant scenarios.

Best use cases for Make (with mini walkthroughs)

1. Lead form → enrichment → CRM → Slack alert

Complexity: Moderate

Flow:

- Typeform submission triggers scenario (instant webhook)

- Router checks if email domain is corporate (filter out @gmail, @yahoo)

- If corporate: call Clearbit API to enrich with company data (HTTP module)

- Second router based on enrichment result:

  - High-value target (revenue > $10M): Create HubSpot contact, add to hot leads list

  - Mid-value: Create Airtable record in nurture pipeline

  - Low-value/no data: Log to Google Sheets for review

- Aggregate branch outputs and send single formatted Slack message with lead details

- Error handler: Log failures to Airtable error log + send DM to ops lead

Expected time to build: 2–3 hours for first attempt; 30 minutes once you understand routers and error handling.

Operations per execution: 8–12 depending on which path executes.

2. Shopify order → inventory check → conditional email → Sheets

Complexity: Low-moderate

Flow:

- Shopify webhook receives new order

- Iterator processes each line item in order

- For each item: HTTP GET request to inventory API (or Google Sheets lookup)

- Router: If in stock, send “order confirmed” email; if backordered, send “delayed shipment” email with estimated date

- Aggregator collects all items and updates Notion order dashboard

- If any items backordered: Create Slack task for fulfillment team

Expected time to build: 1.5–2 hours.

Operations per execution: ~15–25 for a typical 3-item order (iterators multiply operations).

3. Content pipeline: Airtable → Notion → Slack + approvals

Complexity: Moderate-high

Flow:

- Scheduled trigger checks Airtable content calendar (runs daily at 9am)

- Search for records where status = “Draft due” and due date = today

- Iterator processes each due item

- For each: Check Notion database for matching draft

- If draft exists: Send Slack reminder to editor with link

- If draft missing: Send urgent Slack alert to writer + mark record “Overdue” in Airtable

- Aggregator creates weekly summary of all content statuses

- Send summary email to content lead every Friday

Expected time to build: 3–4 hours (Notion API can be tricky).

Operations per execution: 20–50 depending on volume of due items.

4. AI-assisted ticket routing: Gmail → OpenAI → Slack

Complexity: High (involves AI, requires prompt engineering)

Flow:

- Gmail trigger watches for new emails in support inbox

- Extract email body, subject, sender

- HTTP request to OpenAI API with classification prompt: “Is this urgent/routine/sales/technical?”

- Router based on AI response:

  - Urgent: Send to #support-urgent Slack channel + create Notion ticket

  - Technical: Send to #engineering Slack + tag on-call engineer

  - Sales: Send to #sales Slack + create HubSpot ticket

  - Routine: Log to Airtable backlog

- Store full email + classification in database for training data

- Error handler: If OpenAI fails, send to default review queue

Expected time to build: 4–6 hours (prompt refinement takes time).

Operations per execution: ~6–10.

Gotcha: OpenAI rate limits and costs are separate from Make—monitor both.
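One detail that took me a few iterations: model replies are free text, so the router needs a normalization step and a safe default. Here is a Python sketch of that mapping. The labels and Slack channels come from the flow above; `pick_route`, `airtable-backlog`, and `review-queue` are hypothetical names for illustration.

```python
from typing import Optional

ROUTES = {
    "urgent": "#support-urgent",
    "technical": "#engineering",
    "sales": "#sales",
    "routine": "airtable-backlog",
}
DEFAULT_ROUTE = "review-queue"  # used when the model fails or answers off-script


def pick_route(model_reply: Optional[str]) -> str:
    """Map a raw classification reply to a destination, with a safe fallback."""
    if not model_reply:
        return DEFAULT_ROUTE
    # Tolerate whitespace, casing, and a trailing period in the reply.
    label = model_reply.strip().lower().rstrip(".")
    return ROUTES.get(label, DEFAULT_ROUTE)


if __name__ == "__main__":
    for reply in (" Urgent.\n", "sales", "billing question", None):
        print(repr(reply), "->", pick_route(reply))
```

Constraining the prompt to return exactly one of the four labels makes this lookup far more reliable than parsing a sentence.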

5. Webhook-based event automation: app activity → multi-tool sync

Complexity: Moderate

Flow:

- Custom webhook receives event from your app (e.g., “user upgraded to Pro”)

- Parse webhook payload with JSON module

- Router based on event type:

  - Upgrade: Update Stripe subscription metadata, create HubSpot deal, send congratulations email, add to customer success CRM

  - Cancellation: Remove from active email sequences, create Airtable churn record, alert retention team

  - Feature usage milestone: Log to analytics database, send achievement email

- Aggregator creates daily summary of all events

- Error handler: Retry failed operations 3x, then log permanently

Expected time to build: 3–4 hours.

Operations per execution: 10–15 depending on event type.

6. Batch data processing: scheduled cleanup + enrichment

Complexity: Low (but high operation count)

Flow:

- Scheduled trigger runs nightly

- Pull all Google Sheets rows where “Status = Pending”

- Iterator processes each row

- For each: HTTP request to validate email, enrich data, check for duplicates

- Update row with enriched data + change status to “Processed”

- Aggregator counts total processed and emails summary report

Expected time to build: 1.5 hours.

Operations per execution: Potentially hundreds if you have 100+ pending rows—this is where operation counts spike, so monitor usage.

Who should NOT use Make (and what to choose instead)

When Zapier is better

If you’re a non-technical user who needs automation fast. Zapier’s interface is more intuitive, templates are higher quality, and simple use cases deploy in minutes rather than hours. The learning curve matters: if you’re a solo marketer with 10 other priorities, spending 5 hours learning Make’s intricacies may not be worth the flexibility gains.

If your automations are simple linear flows. “New email → add to Sheets” doesn’t benefit from Make’s branching capabilities, and you’ll pay similar costs while navigating a more complex interface.

If you need best-in-class customer support. Zapier’s documentation and support are more polished. Make’s community forums are active, but official support on lower tiers is slower.

When n8n is better

If you have technical resources and want maximum control. n8n lets you self-host (no vendor lock-in), write custom JavaScript in workflows, and avoid all per-operation costs. If you’re comfortable managing infrastructure and want to embed automation in your product, n8n gives you freedom Make doesn’t.

If data residency or compliance requires on-premise hosting. Make is cloud-only; n8n can run in your own environment with full data control.

If you’re building automation products for clients. n8n’s white-labeling and self-hosting enable you to build automation-as-a-service businesses that aren’t feasible with Make’s cloud model.

When Power Automate is better

If your organization is all-in on Microsoft 365. Native integration with Teams, SharePoint, Outlook, Azure AD, Dynamics 365, and other Microsoft services makes Power Automate the path of least resistance. Authentication is simpler, governance is built-in, and it may already be included in your licensing.

If you need enterprise governance and compliance. Power Automate offers data loss prevention policies, detailed audit logs, Azure AD integration, and compliance certifications out of the box. Make’s governance features are basic in comparison.

If business users need to build automations with IT oversight. Power Automate’s approval workflows and admin controls let IT maintain governance while enabling citizen developers—a balance Make struggles with.

Reliability, security & compliance

Execution reliability

Make’s infrastructure is generally stable. Over three months of production use, I experienced:

- Zero platform outages that affected all scenarios

- Three isolated integration outages where specific apps (not Make itself) had connectivity issues

- Occasional slowdowns during what I assume were peak usage times (delays of 10–30 seconds, not failures)

Scenarios execute reliably when properly configured, but “properly configured” does heavy lifting. Without error handlers, temporary downstream API failures can cause silent data loss. Make doesn’t retry failed modules by default—you must add retry logic manually.

Monitoring and logs

Execution logs show detailed input/output for each module, which is invaluable for debugging. However:

- Log retention is limited (30 days on Pro tier; verify current docs for your plan)

- No built-in alerting for degraded performance (only complete failures)

- No aggregate metrics dashboard showing success rates, average execution time, or trends over time

For critical workflows, I recommend piping execution data to external monitoring (sending logs to a database or analytics tool) to track long-term health.

Data handling and security

Make processes data in transit but doesn’t store most workflow data long-term beyond execution logs. Credentials are encrypted, and OAuth connections use secure token storage.

For security and compliance details (SOC 2, GDPR, data processing agreements): I recommend verifying current documentation at Make.com’s official security page rather than relying on potentially outdated reviewer claims. Compliance certifications and data handling policies change, and I cannot confidently assert specific certifications here without risk of providing outdated information.

What I can confirm from usage:

- OAuth credentials have not leaked, though a few connections (Google Sheets, Shopify) needed occasional re-authorization

- HTTPS/TLS is used for all connections

- You can configure scenarios to avoid logging sensitive data (PII, credentials) by using Make’s “sensitive data” settings

What to verify if compliance matters:

- Whether Make has current SOC 2 Type II certification

- GDPR data processing agreement availability

- Data residency options for your region

- Whether your specific compliance framework (HIPAA, PCI-DSS, etc.) is supported

For high-security use cases, consult Make’s sales or compliance team rather than relying solely on public documentation.

Implementation tips (so users succeed)

Naming conventions matter

I learned this the hard way after accumulating 20+ scenarios with names like “New scenario (3)” and “Test workflow final v2.” Adopt a system early:

Format: [Client/Project] - [Trigger] → [Primary Action] - [Status]

Examples:

ACME Co - Shopify Order → Fulfillment + Notion - LIVE

Internal - Daily Analytics → Summary Email - PAUSED

Client B - Typeform → CRM Routing - TEST

This helps when troubleshooting at 11pm: you can identify the scenario from an error notification instantly.
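If you keep an inventory of scenario names (for example in a spreadsheet), a few lines of Python can build names and flag the ones that drifted from the convention. This is a sketch under my own assumptions: the three statuses are the ones from my examples, and `scenario_name` and `follows_convention` are hypothetical helpers, not part of Make.

```python
import re

STATUSES = {"LIVE", "PAUSED", "TEST"}

# [Client/Project] - [Trigger] → [Primary Action] - [Status]
NAME_RE = re.compile(
    r"^(?P<project>.+?) - (?P<trigger>.+?) → (?P<action>.+) - (?P<status>[A-Z]+)$"
)


def scenario_name(project: str, trigger: str, action: str, status: str) -> str:
    """Build a scenario name that follows the convention."""
    status = status.upper()
    if status not in STATUSES:
        raise ValueError(f"unknown status: {status}")
    return f"{project} - {trigger} → {action} - {status}"


def follows_convention(name: str) -> bool:
    """Return True when a name matches the format and uses a known status."""
    match = NAME_RE.match(name)
    return bool(match) and match.group("status") in STATUSES


if __name__ == "__main__":
    print(scenario_name("ACME Co", "Shopify Order", "Fulfillment + Notion", "live"))
    print(follows_convention("New scenario (3)"))
```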

Scenario design patterns

Modular scenarios beat monoliths. I initially built mega-scenarios that did everything. Bad idea. Break workflows into focused scenarios that do one thing well and trigger each other via webhooks when needed. This improves reliability (one failure doesn’t cascade) and maintainability (easier to update).

Start with error handling, not as an afterthought. My standard template now includes:

- Error handler on every external API call

- At minimum: log error details to a Google Sheet or Airtable

- For critical workflows: alert via Slack or email + retry logic

Use filters aggressively to reduce operation waste. Before expensive operations (API enrichment, AI calls), add filters that check whether the operation is actually needed. This saves operations and money.
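As an illustration of a cheap check in front of an expensive step, this Python sketch mirrors the filter I put before enrichment calls: skip anything that is not a usable corporate address. The domain list is deliberately tiny and `needs_enrichment` is a hypothetical name; in Make the same condition lives in a filter between two modules.

```python
FREE_MAIL_DOMAINS = {"gmail.com", "yahoo.com"}  # extend for your own audience


def needs_enrichment(email: str) -> bool:
    """Return True only for addresses worth a paid enrichment call."""
    if "@" not in email:
        return False  # malformed input: do not spend an API call on it
    domain = email.rsplit("@", 1)[-1].strip().lower()
    return bool(domain) and domain not in FREE_MAIL_DOMAINS


if __name__ == "__main__":
    for address in ("jane@acme.io", "bob@gmail.com", "not-an-email"):
        print(address, needs_enrichment(address))
```

Every item the filter rejects saves the operations of all downstream modules on that path, plus the third-party API cost.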

Testing checklist

Before deploying to production:

- Test with realistic data volumes (not just one item)

- Test error scenarios (deliberately break an API call to verify error handler works)

- Test with edge cases (empty fields, malformed data, unexpected types)

- Verify all paths in routers execute correctly

- Check operation count in test runs to estimate monthly costs

- Document assumptions and dependencies in scenario notes

- Set up monitoring/alerting for failures

- Confirm authentication connections are stable

Debugging workflow

When a scenario fails:

- Check the execution log immediately to see which module failed and why

- Trace data backwards: Click on failed module, examine input data, verify it matches expected format

- Re-run individual modules: Make lets you re-execute single modules with the same input to test fixes without re-running the entire scenario

- Simplify to isolate: Temporarily remove complexity (routers, filters) to test the core flow, then add back piece by piece

- Check API status pages: Sometimes the issue is downstream service outage, not your scenario

Prevent infinite loops: If scenarios trigger each other, add safeguards (maximum iteration counts, unique identifiers to detect duplicates, “circuit breaker” flags in your database).
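Two of those safeguards, a hop counter and duplicate detection, fit in a few lines. This Python sketch shows the logic only; the `id` and `hops` fields, the limit of 5, and the function names are assumptions, and in Make you would carry the same fields in the webhook payload and keep seen IDs in a data store.

```python
from typing import Dict, Set

MAX_HOPS = 5  # assumed ceiling on scenario-to-scenario handoffs


def should_process(event: Dict, seen_ids: Set[str]) -> bool:
    """Reject events that have bounced too often or were already handled."""
    if event.get("hops", 0) >= MAX_HOPS:
        return False  # circuit breaker: the chain is too long
    if event["id"] in seen_ids:
        return False  # duplicate: this event was already processed
    seen_ids.add(event["id"])
    return True


def forward(event: Dict) -> Dict:
    """Copy the event for the next scenario, incrementing its hop counter."""
    return {**event, "hops": event.get("hops", 0) + 1}
```

Each scenario calls `should_process` on arrival and `forward` before triggering the next one, so a loop stops itself after a bounded number of handoffs.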

Maintainability advice for teams/agencies

Document in the scenario itself. Use Make’s note modules to explain complex logic. Future you (or your teammates) will be grateful.

Establish review processes. For production workflows, require a second person to review before deployment. This catches mistakes I miss when heads-down building.

Version control workaround: Export scenarios as JSON regularly and store in Git. Not elegant, but lets you diff changes and roll back if needed.
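Raw JSON exports can produce noisy diffs when key order or whitespace changes, so I normalize both files before comparing. This Python sketch uses only the standard library and makes no assumption about the structure of Make's export; `diff_exports` is a hypothetical helper that works on any two JSON documents.

```python
import difflib
import json
from typing import List


def diff_exports(old_json: str, new_json: str) -> List[str]:
    """Return a unified diff of two JSON documents after normalizing them."""

    def normalize(text: str) -> List[str]:
        # Sorted keys and fixed indentation remove cosmetic differences.
        return json.dumps(json.loads(text), indent=2, sort_keys=True).splitlines()

    return list(
        difflib.unified_diff(
            normalize(old_json), normalize(new_json), "before", "after", lineterm=""
        )
    )


if __name__ == "__main__":
    before = '{"name": "Lead routing", "modules": 3}'
    after = '{"modules": 4, "name": "Lead routing"}'
    print("\n".join(diff_exports(before, after)))
```

An empty result means the two exports are semantically identical, even if the files differ byte for byte.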

Client handoff prep: If building for clients, create a simple documentation sheet:

- What the scenario does (in business terms)

- Trigger conditions and frequency

- Expected operation count per month

- What to do if it breaks (common failures + fixes)

- When to review/update (e.g., if