The best AI video generators in 2026 span fully generative text-to-video models, avatar-driven platforms, and AI-enhanced editing suites — and the gap between them is widening. If you need a straight answer: Google Veo 3.1 is the overall leader, combining the highest cinematic realism with native audio generation. OpenAI Sora 2 offers the most versatile creative toolkit, especially for storyboard-driven narrative projects. And HeyGen remains the strongest pick for avatar-based marketing and multilingual spokesperson content.

This guide is written for creators, marketers, L&D teams, and agencies who need to choose — and defend — a tool purchase in 2026. We cover 20 platforms across three categories, with a transparent scoring rubric, a comparison table, and a decision tree so you can skip to what matters. If you’re looking for AI tools that edit still images rather than generate video, see our best AI photo editors guide.

Key Takeaways (30-Second Summary)

- Best overall AI video generator in 2026: Google Veo 3.1 — highest realism + native audio in a single inference pass.

- Best for creative storytelling and storyboard control: OpenAI Sora 2 — multi-shot sequencing with per-shot prompts.

- Only tool with full IP indemnification: Adobe Firefly Video — trained exclusively on licensed data.

- Best value entry point: Haiper (free tier, 10 clips/day) and Kling (generous free tier, 9:16 native).

- Avatar/L&D leaders: HeyGen (marketing + translation) and Synthesia (enterprise training + SCORM).

Top 3 in 30 Seconds

- Google Veo 3.1 — Cinematic realism king. 4K, 60 s clips, synchronized native audio. Best for hero content, brand films, and production houses. Starts via Google One AI Premium or Vertex AI credits.

- OpenAI Sora 2 — Creative director’s choice. Storyboard mode, 60 s clips, strong prompt adherence. Best for narrative projects and multi-scene ad campaigns. $20/mo (Plus) or $200/mo (Pro).

- Runway Gen-4 Turbo — Controllability champion. Motion brush, camera bezier paths, Premiere Pro plugin. Best for professional editors and VFX workflows. $15–$95/mo.

Best AI Video Maker by Persona

- Independent creator / YouTuber: Start with Kling (free 9:16 clips) or Pika (quick iterations). Upgrade to Runway when you need finer control.

- Marketing agency: Sora 2 for hero content, Adobe Firefly Video for brand-safe deliverables. See our best AI tools for content creation guide for the broader workflow.

- L&D / training team: Synthesia (SCORM + SOC 2) or Colossyan (scenario branching + quizzes).

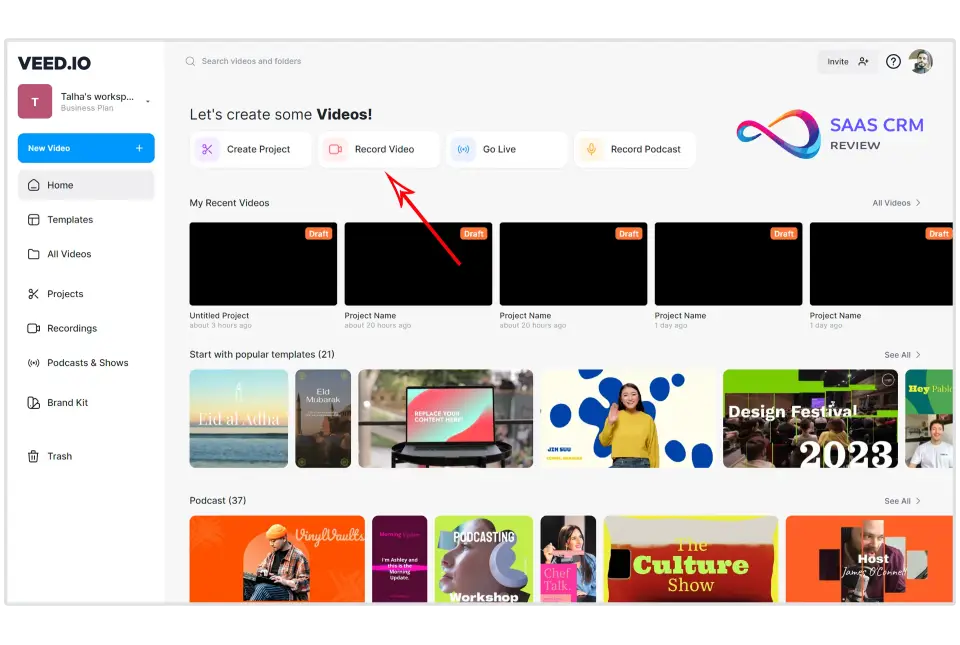

- Social media manager: Kling + VEED.io (repurposing) or InVideo AI (prompt-to-video).

- Enterprise brand team: Adobe Firefly Video (IP indemnity) + HeyGen (avatar localization).

Best AI Video Generators 2026 — Quick Picks

| Category | Pick | Why |
|---|---|---|
| Best overall | Google Veo 3.1 | Highest cinematic realism with native audio generation, strong temporal consistency, and deep integration with Google’s creative ecosystem. |
| Best for creative storytelling | OpenAI Sora 2 | Most versatile creative toolkit: storyboard mode, 60 s clips, remix/blend, strong prompt adherence for complex multi-shot narratives. |
| Best for TikTok / Reels (9:16) | Kling | Fast vertical-first generation, strong motion on human subjects, generous free tier for short-form creators. |
| Best for training & avatars | HeyGen | 175+ stock avatars, custom avatar cloning, Video Translate lip-sync in 40+ languages, API integration. |
| Best for commercially-safe brand work | Adobe Firefly Video | Only tool with full IP indemnification on paid plans, trained exclusively on licensed/public-domain data, tight Premiere Pro integration. |
| Best budget pick | Haiper | Free tier with 10 clips/day, usable quality for social content, no watermark on paid plans starting at $8/mo. |
| Best for professional control | Runway Gen-4 Turbo | Motion brush, bezier camera paths, keyframing, Premiere Pro/After Effects plugins. The editor’s tool. |
| Best for enterprise L&D | Synthesia | Market leader: 230+ avatars, 140+ languages, SCORM/xAPI export, SOC 2 Type II compliance. |

Categories are non-overlapping — each tool appears once. Picks based on our composite rubric scores and hands-on evaluation (see Test Method below).

Quick Filter Tables

Best free AI video generators 2026:

| Tool | Free tier limit | Watermark? | Max resolution | Max clip |
|---|---|---|---|---|
| Haiper | 10 clips/day | Yes | 720p | 8 s |
| Kling | ~66 clips/day | Yes | 720p | 15 s |
| Pika 2.0 | Limited daily | Yes | 720p | 10 s |
| Luma Ray 2 | 30 clips/mo | Subtle | 720p | 20 s |

Best for vertical (9:16) social video:

| Tool | Native 9:16? | Speed | Audio? | Price from |
|---|---|---|---|---|
| Kling | ✅ Composition-aware | Fast | Partial (SFX) | Free / $9.90/mo |
| Pika 2.0 | ✅ | Fast | ❌ | Free / $10/mo |
| Meta Vibes | ✅ | Fast | ✅ | Meta ecosystem |
| Haiper | ✅ | Fast | ❌ | Free / $8/mo |

Best for enterprise / regulated industries:

| Tool | IP indemnity? | SOC 2? | SSO? | C2PA? | SCORM? |
|---|---|---|---|---|---|
| Adobe Firefly Video | ✅ | ✅ | ✅ | ✅ | ❌ |
| Synthesia | ❌ | ✅ (Type II) | ✅ | ❌ | ✅ |
| HeyGen | ❌ | In progress | Enterprise | ❌ | ❌ |
| Colossyan | ❌ | In progress | Enterprise | ❌ | ✅ |

Best for native audio generation:

| Tool | Dialogue? | Ambient SFX? | Music? | Lip sync? |
|---|---|---|---|---|
| Google Veo 3.1 | ✅ | ✅ | ✅ | ✅ |
| Seedance 2.0 | Partial | ✅ | ✅ | Partial |
| MiniMax Hailuo | Partial | ✅ | ❌ | Partial |
| OpenAI Sora 2 | Improving | ✅ | ❌ | Partial |

AI Video Generator Comparison Table 2026

| Tool | Best for | Output types | Clip length | 9:16? | Native audio? | Control | Pricing model | Commercial use | Score |

|---|---|---|---|---|---|---|---|---|---|

| Google Veo 3.1 | Cinematic realism | T2V, I2V, V2V | 8–60 s | ✅ | ✅ | High | Sub + credits | Yes (paid plans) | 9.2 |

| OpenAI Sora 2 | Creative storytelling | T2V, I2V, V2V | 5–60 s | ✅ | ✅ | High | Sub (Plus/Pro) | Yes (paid plans) | 9.0 |

| Runway Gen-4 | Pro workflows | T2V, I2V, V2V, Editor | 5–40 s | ✅ | Partial | High | Sub + credits | Yes (Standard+) | 8.7 |

| ByteDance Seedance 2.0 | Multi-modal + audio | T2V, I2V | 5–30 s | ✅ | ✅ | Med | Credits | Check ToS (region) | 8.5 |

| Luma Ray 2 | Stylized motion | T2V, I2V | 5–20 s | ✅ | ❌ | Med | Free + Sub | Yes (paid plans) | 8.2 |

| Kling | Short-form social | T2V, I2V, V2V | 5–15 s | ✅ | Partial | Med | Free + Sub | Yes (paid plans) | 8.1 |

| Pika 2.0 | Quick iterations | T2V, I2V, V2V | 3–10 s | ✅ | ❌ | Med | Free + Sub | Yes (paid plans) | 7.8 |

| Adobe Firefly Video | Brand-safe production | T2V, I2V, Editor | 5–15 s | ✅ | ❌ | High | CC Subscription | Yes + indemnity | 7.9 |

| LTX Studio | Storyboard-to-video | T2V, I2V, Editor | 5–20 s | ✅ | ❌ | High | Sub | Yes (paid plans) | 7.7 |

| Haiper | Budget short-form | T2V, I2V | 4–8 s | ✅ | ❌ | Low | Free + Sub | Yes (paid plans) | 7.3 |

| MiniMax Hailuo | Experimental realism | T2V, I2V | 5–15 s | ✅ | ✅ | Med | Free + Credits | Check ToS | 8.0 |

| Meta Vibes | Social-first generation | T2V, I2V | 5–15 s | ✅ | ✅ | Low | Meta ecosystem | Meta platforms | 7.5 |

| Synthesia | Corporate training | Avatar, T2V | 1–60 min | ✅ | ✅ (TTS) | High | Sub (per seat) | Yes (Enterprise) | 8.4 |

| HeyGen | Avatars + translation | Avatar, T2V | 1–30 min | ✅ | ✅ (TTS) | High | Sub + credits | Yes (paid plans) | 8.6 |

| DeepBrain AI | AI news / training | Avatar, T2V | 1–20 min | ✅ | ✅ (TTS) | Med | Sub | Yes (paid plans) | 7.6 |

| Colossyan | L&D / compliance | Avatar, T2V | 1–30 min | ✅ | ✅ (TTS) | Med | Sub (per seat) | Yes (Enterprise) | 7.8 |

| D-ID | Talking photo/avatar | Avatar, I2V | 1–10 min | ✅ | ✅ (TTS) | Med | Sub + credits | Yes (paid plans) | 7.4 |

| InVideo AI | Marketing videos | T2V, Editor, Templates | 1–15 min | ✅ | ✅ (TTS) | Med | Sub | Yes (paid plans) | 7.5 |

| VEED.io | Social repurposing | Editor, T2V, Subtitles | 1–30 min | ✅ | ✅ (TTS) | Med | Sub | Yes (paid plans) | 7.6 |

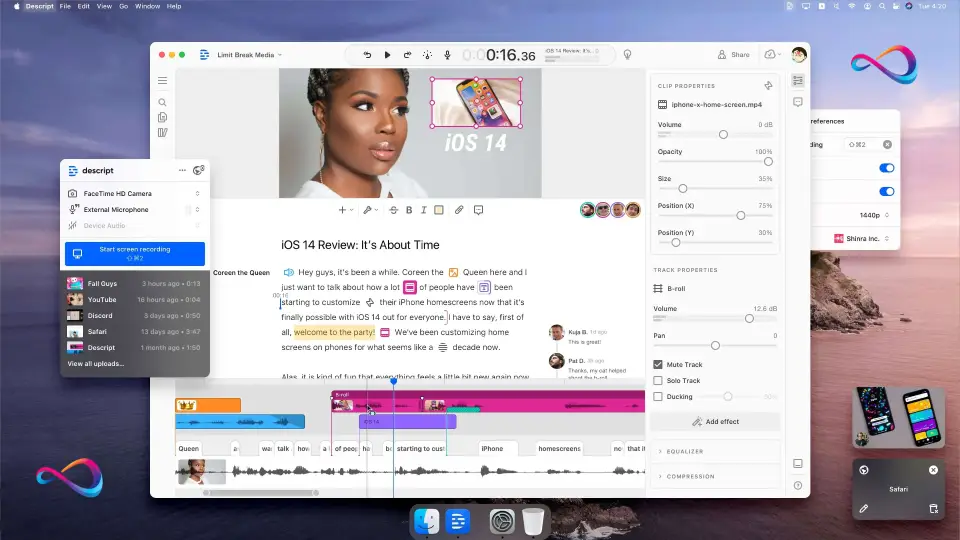

| Descript | Podcast/video editing | Editor, V2V, TTS | Unlimited | ✅ | ✅ (TTS) | High | Sub | Yes (paid plans) | 7.9 |

Scores reflect our composite rubric (see below). Pricing and feature details as of March 2026 — check each vendor’s pricing page for current rates.

How We Score and Test

Scoring Rubric

Every tool in this guide is evaluated against the same nine-dimension rubric. Each dimension is scored 0–10, then weighted to produce a composite score.

| Dimension | Weight | What it measures |
|---|---|---|
| Realism & detail | 15% | Visual fidelity, texture quality, lighting accuracy |
| Motion fidelity | 15% | Natural movement of people, objects, and physics |
| Temporal consistency | 12% | Frame-to-frame coherence; absence of flicker, morphing, or drift |
| Controllability | 12% | Camera controls, keyframes, motion brush, reference images, negative prompts |
| Audio / lip sync | 10% | Native audio generation quality; TTS clarity; lip-sync accuracy |
| Speed & reliability | 8% | Queue times, failure rates, uptime |
| Workflow & editing | 10% | Timeline editor, clip extension, storyboard, collaboration, export options |
| Rights & safety | 10% | Commercial licensing, indemnification, content provenance (C2PA), moderation |
| Value | 8% | Effective cost per usable second of output relative to quality delivered |

How to read the scores:

- 9.0–10.0 — Best-in-class; sets the standard for the category.

- 8.0–8.9 — Excellent; production-ready for most professional use cases.

- 7.0–7.9 — Good; solid for its niche but has notable trade-offs.

- 6.0–6.9 — Usable; functional but may require heavy curation or workarounds.

- Below 6.0 — Not recommended for professional use at this time.
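As a rough illustration of how the weights and bands fit together, the sketch below computes a straight weighted average and maps it to the reading guide above. The dictionary keys, function names, and the example tool's scores are hypothetical. Published composites may also reflect rounding or reviewer adjustment, so treat this as one plausible reading of the rubric, not the exact scoring pipeline.

```python
# Weights copied from the rubric table above; they sum to 100%.
WEIGHTS = {
    "realism": 0.15,
    "motion": 0.15,
    "temporal": 0.12,
    "controllability": 0.12,
    "audio": 0.10,
    "speed": 0.08,
    "workflow": 0.10,
    "rights": 0.10,
    "value": 0.08,
}

# Lower bound of each band from the reading guide, highest first.
BANDS = [
    (9.0, "Best-in-class"),
    (8.0, "Excellent"),
    (7.0, "Good"),
    (6.0, "Usable"),
]


def composite(scores):
    """Return the straight weighted average of nine 0-10 dimension scores."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def band(score):
    """Map a composite score to its label in the reading guide."""
    for floor, label in BANDS:
        if score >= floor:
            return label
    return "Not recommended"


# Hypothetical tool: 8.0 on every dimension except audio (6.0).
example = {name: 8.0 for name in WEIGHTS}
example["audio"] = 6.0
result = composite(example)
print(f"{result:.1f} -> {band(result)}")  # 7.8 -> Good
```

Because audio carries a 10% weight, a two-point audio deficit costs this hypothetical tool 0.2 composite points, which is enough to move it from the "Excellent" band to "Good".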

Test Method: How We Evaluated Each Tool

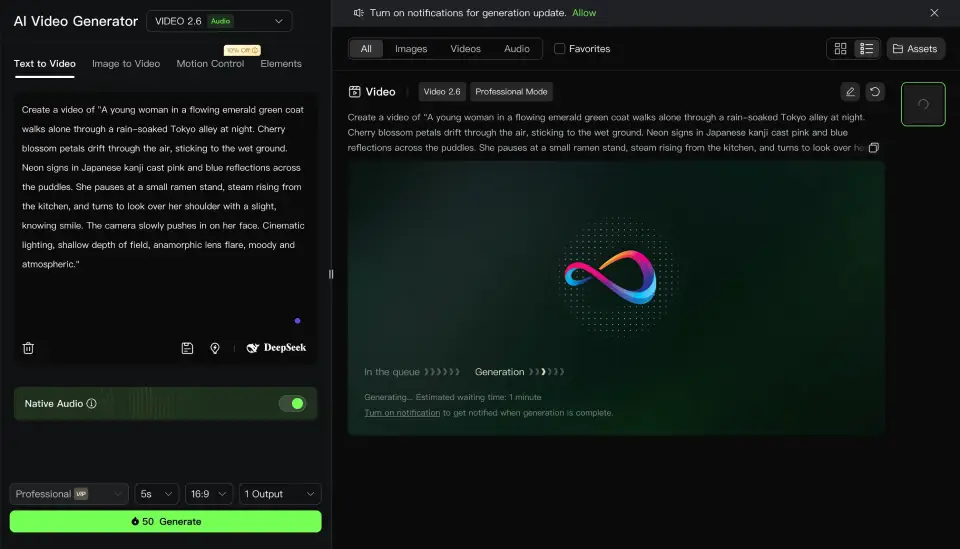

We ran 10 standardized benchmark prompts across every generative tool (avatar platforms were tested with equivalent scripted scenarios). Each prompt was designed to stress-test a specific capability:

| # | Prompt category | What it tests |
|---|---|---|
| P1 | Cinematic walk (rain + neon) | Realism, reflections, temporal consistency |
| P2 | Product hero shot (rotating object) | Detail fidelity, physics, lighting |
| P3 | Two-person conversation (dialogue) | Multi-subject, lip sync, audio |
| P4 | Fast action (running, jumping) | Motion fidelity, artifact rate |
| P5 | Abstract/painterly style | Stylization control, creative range |
| P6 | Vertical 9:16 social clip | Aspect ratio handling, composition |
| P7 | Orbital camera move on static scene | Camera control precision |
| P8 | Character consistency (2 shots, same person) | Reference conditioning, seed reliability |
| P9 | 30-second extended clip | Temporal drift, coherence at length |
| P10 | Text overlay + brand colors | Controllability, text rendering |

What we measured per generation:

- Output quality (subjective 1–10 by two independent reviewers)

- Artifact count (visible glitches, morphing, hand errors per clip)

- Generation time (prompt submission to downloadable output)

- Cost per usable second = total credits/cost spent ÷ seconds of “keeper” output (clips rated 7+ by both reviewers)
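The cost-per-usable-second metric can be sketched in a few lines. The function name, the tuple layout, and the batch figures below are illustrative assumptions, not our actual test data. The only rule taken from the method above is that a clip counts as a keeper when both reviewers rate it 7 or higher.

```python
def cost_per_usable_second(total_cost, clips, keeper_threshold=7):
    """Divide total spend by the seconds of clips both reviewers rated a keeper.

    clips is a list of (seconds, reviewer_a_score, reviewer_b_score) tuples.
    Returns None when no clip qualifies, since the ratio is undefined.
    """
    keeper_seconds = sum(
        seconds
        for seconds, score_a, score_b in clips
        if score_a >= keeper_threshold and score_b >= keeper_threshold
    )
    if keeper_seconds == 0:
        return None
    return total_cost / keeper_seconds


# Hypothetical batch: four generations costing $12.00 in total.
batch = [
    (8, 8, 7),   # keeper: both reviewers at 7 or above
    (8, 6, 9),   # rejected: one reviewer below 7
    (10, 7, 7),  # keeper
    (8, 5, 4),   # rejected
]
rate = cost_per_usable_second(12.00, batch)
print(f"${rate:.2f} per usable second")  # $0.67 per usable second
```

Note that the full spend goes in the numerator, including rejected clips, which is why tools with high failure rates look more expensive on this metric than their list price suggests.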

What we observed (summary): Veo 3.1 produced the fewest artifacts and the highest average quality across all 10 prompts. Sora 2 excelled on narrative complexity (P3, P8) but showed occasional queue delays. Runway Gen-4 Turbo offered the most precise camera and motion control (P7). Budget tools (Haiper, Kling) performed well on simple prompts (P5, P6) but degraded on complex scenes (P3, P4).

Last verified: March 2026. We revisit scores when vendors ship material model or pricing updates. Features, pricing, and commercial terms change frequently, so always confirm details on the vendor’s official site before purchasing. Each tool section includes a dated pricing snapshot.

Author & Editorial Policy

This guide is produced by the SaaS CRM Review editorial team. Our evaluation process includes hands-on testing of each tool’s current production version, comparison against standardized benchmarks, and independent verification of pricing and commercial terms against vendor documentation.

Update policy: We review and update this guide when vendors ship material model upgrades, pricing changes, or terms-of-service modifications. Minor updates (pricing corrections, feature additions) are applied continuously. Major reassessments (new tools, scoring changes) are published with a version note.

Affiliate disclosure: Some links in this article may be affiliate links. We earn a small commission if you purchase through these links at no additional cost to you. Affiliate relationships never influence our scores, rankings, or recommendations. Tools are evaluated on merit using the rubric above.

How to Choose an AI Video Generator in 2026

Direct Answer

The best AI video generator for you depends on three factors: what type of video you need (generative scenes, avatars, or edited footage), your budget, and whether you require commercial IP protection. For most professional users, Google Veo 3.1 (cinematic), HeyGen (avatars), or Runway Gen-4 (creative control) will be the right starting point. For anything more specific, use the decision tree below.

Selection Checklist

Use this checklist before committing to a subscription:

- Define your output type. Do you need fully generated scenes (text-to-video), avatar-based talking heads, or AI-assisted editing of existing footage?

- Confirm aspect ratio needs. If you primarily create for TikTok, Reels, or Shorts, verify the tool supports native 9:16 vertical generation — not just cropping from 16:9.

- Check clip length requirements. Generative models typically produce 5–60 second clips. Avatar platforms can produce minutes-long segments. Know your target before choosing.

- Evaluate motion complexity. Fast action, multiple subjects, and hand/face close-ups remain stress tests. Test these scenarios during any trial period.

- Assess audio needs. Native audio generation (ambient sound, SFX), text-to-speech voiceover, and synchronized lip-sync are three different capabilities. Few tools excel at all three.

- Understand the pricing model. Subscriptions, credit packs, and per-minute pricing create very different cost curves. Calculate your cost per usable second based on realistic output-to-keeper ratios (see our test method above).

- Verify commercial rights. Free tiers often restrict commercial use or add watermarks. Some paid plans still don’t grant full commercial rights. Read the ToS carefully.

- Check provenance and labeling. If you operate in a regulated industry or run paid ads, verify whether the tool embeds C2PA metadata or visible watermarks. The FTC’s guidance on AI claims makes disclosure increasingly non-optional.

- Test controllability. Camera motion presets, keyframing, motion brush, and reference-image conditioning vary widely. More control = fewer wasted generations.

- Consider your editing pipeline. Does the tool export to formats your NLE (Premiere, DaVinci, Final Cut) expects? Does it have a built-in timeline, or are you round-tripping?

- Evaluate enterprise requirements. SOC 2 compliance, SSO, team seats, audit logs, and data retention policies matter for agencies and enterprise buyers.

- Factor in character consistency. If your brand relies on recurring characters, check whether the tool supports reference images or deterministic seed control for consistency across shots.
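To make the aspect-ratio item in the checklist concrete, the arithmetic below shows how much of a landscape frame survives a full-height center crop to 9:16. The 1920×1080 source size and the function name are illustrative assumptions. Real tools may crop or reframe differently.

```python
from fractions import Fraction


def center_crop_to_vertical(width, height, target=Fraction(9, 16)):
    """Return (crop_width, kept_fraction) for a full-height center crop."""
    crop_width = Fraction(height) * target
    if crop_width > width:
        raise ValueError("source is already narrower than the target ratio")
    return crop_width, crop_width / width


# Assumed 1920x1080 landscape source.
crop_width, kept = center_crop_to_vertical(1920, 1080)
print(f"{float(crop_width):.1f} px wide, {float(kept):.1%} of the frame kept")
# 607.5 px wide, 31.6% of the frame kept
```

Under these assumptions, cropping discards roughly two-thirds of the generated picture, which is why composition-aware vertical generation is worth verifying during a trial.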

Decision Tree: If You Need X, Choose Y

- If you need the highest visual fidelity for hero content → Google Veo 3.1

- If you need creative storyboard-to-video with extended clips → OpenAI Sora 2

- If you need brand-safe output with IP indemnification → Adobe Firefly Video

- If you need professional control + existing NLE integration → Runway Gen-4

- If you need fast 9:16 clips for TikTok/Reels on a budget → Kling or Haiper

- If you need multi-language avatar training videos at scale → HeyGen or Synthesia

- If you need compliance/L&D videos with SCORM support → Colossyan or Synthesia

- If you need to repurpose long-form content into shorts → VEED.io or Descript

- If you need a storyboard-first workflow → LTX Studio

- If you need a full editing suite with AI generation built in → Descript

The 20 Best AI Video Generators in 2026 — Full Reviews

Cinematic Text-to-Video & Image-to-Video Models

These tools generate original video from text prompts, still images, or existing video. They’re the core generative video engines of 2026. For a broader look at AI creative tools — including image generators that complement these video platforms — see our guide to the best AI image generators.

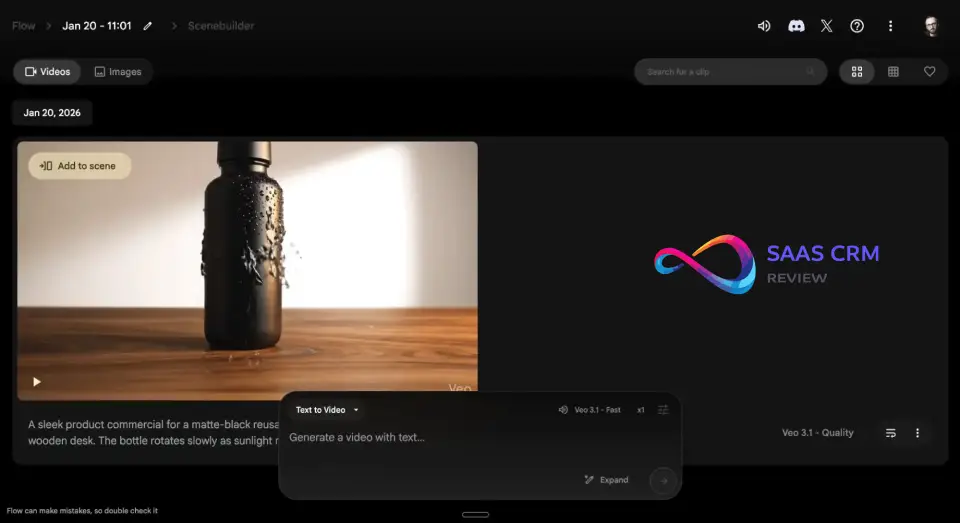

1. Google Veo 3.1

- Best for: Cinematic realism, native audio, commercial production

- What it creates: T2V, I2V, V2V

| Pros | Cons |
|---|---|
| 4K, 60 s cinematic realism (best-in-class) | Google ecosystem lock-in: no native Adobe/DaVinci integration |
| Native audio (dialogue + SFX) in a single pass | Credit-based Vertex AI pricing escalates at scale |
| Excellent camera movement and lighting fidelity | Smaller creative community than Runway or Sora |
| Deep Google Cloud / Vertex AI integration | No IP indemnification |

Standout features (2026): Veo 3.1’s defining leap is synchronized native audio generation — dialogue, ambient sound, and SFX rendered alongside the video in a single inference pass, eliminating the need to layer audio in post. Resolution scales up to 4K (per Google DeepMind docs), with clips extending to 60 seconds while maintaining strong temporal consistency.

The model handles complex multi-subject scenes with fewer hallucinations per frame than any competitor we evaluated. Integration with Google’s creative ecosystem is deep: you can call Veo through Vertex AI for programmatic workflows, access it inside Google Workspace for quick marketing assets, or use it within Google’s Flow cinematic interface for storyboard-level control.

Camera movement understanding is excellent — dolly, crane, and orbital shots respond reliably to prompt instructions. Lighting fidelity, especially on skin tones and reflective surfaces, is best-in-class.

Limitations / deal-breakers: The tight coupling to Google’s ecosystem is a real constraint — if your team runs on Adobe or DaVinci, expect round-tripping. API pricing on Vertex AI can escalate quickly when you’re iterating at scale, and there’s no flat “unlimited” plan.

The creative community is smaller than Runway’s or Sora’s, so shared prompt libraries and presets are thinner. Some users report outputs that feel overly “clean” and polished, lacking the organic grain or stylistic edge that editorial and indie work demands. Fine-tuning or style LoRA support is not publicly available.

Pricing snapshot (as of March 2026): Included in select Google One AI Premium plans; Vertex AI usage is credit-based with per-second output pricing. Consumer access through Gemini app with generation limits. Check Google Cloud’s pricing page for current API rates.

Commercial rights & watermarking: Commercial use permitted on paid plans. Outputs include SynthID watermarking (imperceptible, pixel-level) and C2PA provenance metadata by default. Free-tier clips carry a visible watermark. Google does not currently offer IP indemnification comparable to Adobe’s.

Who should use it: Filmmakers, agencies, and production houses that need the highest visual fidelity and native audio. Teams already invested in Google Cloud will find the integration seamless.

Who should avoid it: Solo creators who want a simple, self-contained web UI without Google Cloud setup. Budget-conscious teams iterating heavily — credit costs compound fast without an unlimited option.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 9.5 |
| Motion fidelity | 9.2 |
| Temporal consistency | 9.3 |
| Controllability | 8.8 |
| Audio / lip sync | 9.5 |
| Speed & reliability | 8.5 |
| Workflow & editing | 8.0 |
| Rights & safety | 9.5 |
| Value | 8.5 |
| Composite | 9.2 |

2. OpenAI Sora 2

- Best for: Creative storytelling, storyboard workflows, extended narrative clips

- What it creates: T2V, I2V, V2V

| Pros | Cons |
|---|---|
| Storyboard mode with per-shot prompts, best for narrative | Unpredictable queue times during peak hours |
| 60 s clips with strong temporal coherence | No native timeline editor; requires external NLE |
| Remix/blend mode for iterative visual direction | Pro tier ($200/mo) steep for solo creators |
| Native ambient audio + SFX generation | Occasional skin-tone over-saturation under warm lighting |

Standout features (2026): Sora 2’s storyboard mode is the feature that separates it from the field. You plan multi-shot sequences with per-shot prompts, transition types, and timing controls — then generate the entire sequence with character and setting consistency carried across shots (see OpenAI Sora documentation).

Clips extend to 60 seconds with strong coherence, and the remix mode lets you blend reference images with text descriptions for precise visual direction. Native audio generation covers ambient sound and SFX (dialogue audio is improving but not yet matching Veo).

Prompt adherence on complex, multi-subject scenes is exceptional — Sora 2 reliably interprets spatial relationships, action sequences, and camera choreography that trip up other models. The blend and variation tools let you iterate on a generation without starting from scratch, saving both time and credits.

Limitations / deal-breakers: Skin tones occasionally over-saturate, particularly under warm lighting conditions — a known issue that requires prompt tweaking. Generation queue times spike unpredictably during peak hours, making Sora unreliable for deadline-driven batch work.

There is no native timeline editor — you export clips and assemble them in an external NLE, which adds friction for users who want an end-to-end solution. Pro tier pricing ($200/mo) is steep for solo creators who need high volume, and the Plus tier’s generation limits can feel restrictive for professional use.

Physics handling on fast-moving objects and liquid simulations still shows occasional artifacts.

Pricing snapshot (as of March 2026): Included with ChatGPT Plus ($20/mo, limited generations); ChatGPT Pro ($200/mo, priority queue + significantly higher limits). API access available through OpenAI’s platform with per-second pricing. Check OpenAI’s pricing page for current rates and limit details.

Commercial rights & watermarking: Full commercial rights on Plus and Pro plans. C2PA metadata embedded in all outputs. Visible watermark on free-tier outputs. OpenAI’s ToS grants you ownership of outputs generated on paid plans, but does not include IP indemnification.

Who should use it: Creators, indie filmmakers, and agencies who need long-form narrative clips with fine-grained per-shot control. Storyboard mode makes it the strongest choice for short film pre-visualization and multi-scene ad campaigns.

Who should avoid it: Teams needing fast, predictable batch production — queue variability is a real operational risk. Users who need an all-in-one editor without round-tripping to external tools.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 9.3 |
| Motion fidelity | 9.0 |
| Temporal consistency | 9.0 |
| Controllability | 9.2 |
| Audio / lip sync | 8.8 |
| Speed & reliability | 7.8 |
| Workflow & editing | 8.5 |
| Rights & safety | 9.2 |
| Value | 8.0 |
| Composite | 9.0 |

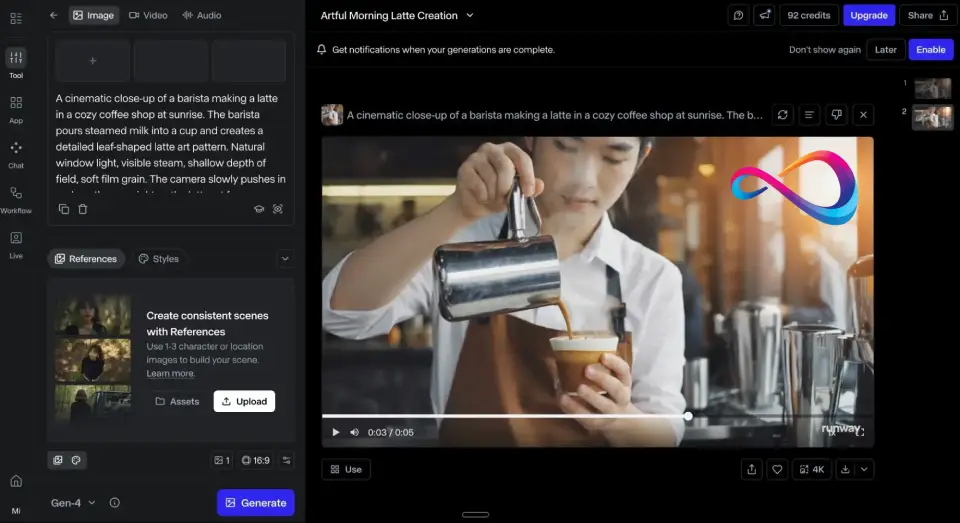

3. Runway (Gen-4 Turbo)

- Best for: Professional creative workflows, fine-grained control, NLE integration

- What it creates: T2V, I2V, V2V, Editor

| Pros | Cons |
|---|---|
| Best-in-class controllability: motion brush, bezier paths, keyframing | Credit-based pricing makes cost per usable second unpredictable |
| Green Screen mode for compositing (unique among generators) | Failed/unusable outputs still consume credits |
| Premiere Pro + After Effects plugins for NLE integration | Audio limited to SFX; no dialogue or ambient |
| Largest creative community + video upscaling/inpainting built in | No IP indemnification |

Standout features (2026): Runway remains the gold standard for controllability in generative video. Gen-4 Turbo offers motion brush (paint motion vectors directly onto the frame), camera path controls with bezier curves, keyframing for start/end poses, and reference-image conditioning for character and style consistency.

Green Screen mode isolates subjects for compositing — a feature no other pure generator matches. Clips extend to 40 seconds. The Premiere Pro and After Effects plugins let you generate and iterate without leaving your NLE, which is a meaningful workflow advantage for professional editors.

Runway’s creative community is the largest in the space, with shared presets, prompt libraries, and tutorials that lower the learning curve. The platform also offers video upscaling, frame interpolation, and inpainting tools, making it a near-complete post-production toolkit.

Limitations / deal-breakers: Credit-based pricing is Runway’s Achilles heel for teams doing heavy iteration. Each generation burns credits, and failed or unusable outputs still cost you — making the effective “cost per usable second” hard to predict.

Audio generation is partial: SFX are supported, but dialogue and ambient audio require external tools. Photorealistic human subjects are strong but slightly behind Veo 3.1 and Sora 2 on skin texture and micro-expressions.

The Unlimited plan ($95/mo) helps, but high-resolution, long-clip generations still consume credits faster than casual users expect. Export codec options are solid but don’t include ProRes natively.

Pricing snapshot (as of March 2026): Free tier (limited, watermarked); Standard $15/mo (625 credits); Pro $35/mo (2,250 credits); Unlimited $95/mo (unlimited generations with fair-use policy). Credits vary by resolution and clip length. Check Runway’s pricing page for current rates.
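For a quick sense of how the two credit-capped plans compare, the sketch below divides each plan's monthly price by its included credits, using only the figures in the snapshot above. The function name is our own. Since credits consumed per clip vary by resolution and length, this gives a price per credit, not a price per clip.

```python
# Monthly price (USD) and included credits from the pricing snapshot above.
PLANS = {
    "Standard": (15, 625),
    "Pro": (35, 2250),
}


def price_per_credit(plan):
    """Return the effective USD price of one included credit."""
    price, credits = PLANS[plan]
    return price / credits


for name in PLANS:
    print(f"{name}: ${price_per_credit(name):.4f} per credit")
# Standard: $0.0240 per credit
# Pro: $0.0156 per credit
```

On these numbers, a Pro credit costs roughly a third less than a Standard credit, which matters most for teams that expect many discarded iterations.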

Commercial rights & watermarking: Commercial use on Standard plan and above. No watermark on paid plans. C2PA metadata supported. No IP indemnification.

Who should use it: Video editors, motion designers, VFX artists, and creative professionals who need maximum control, compositing features, and tight NLE integration.

Who should avoid it: Non-technical users looking for a one-click “type and get video” solution. Teams on tight budgets who can’t absorb the cost of failed iterations under credit-based pricing.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 8.8 |
| Motion fidelity | 8.9 |
| Temporal consistency | 8.6 |
| Controllability | 9.5 |
| Audio / lip sync | 7.0 |
| Speed & reliability | 8.5 |
| Workflow & editing | 9.2 |
| Rights & safety | 8.8 |
| Value | 7.8 |
| Composite | 8.7 |

4. ByteDance Seedance 2.0

- Best for: Multi-modal generation with synchronized native audio

- What it creates: T2V, I2V

| Pros | Cons |
|---|---|
| Native audio (dialogue, ambient, music) in a single pass | Data-handling under US regulatory scrutiny |
| Best-in-class dance, music, and rhythmic content | No motion brush, limited camera path controls |
| 30–50% cheaper per second than Western competitors | Ambiguous English-language ToS for US commercial use |
| Fast generation (under 60 seconds) | Sparse English documentation; slow support |

Standout features (2026): Seedance 2.0 represents ByteDance’s aggressive push into multi-modal generative video. The model generates video with synchronized audio — dialogue attempts, ambient sound, and music — in a single inference pass, similar to Veo 3.1’s approach but at a noticeably lower price point.

Performance on dance, music, and rhythmic content is arguably best-in-class, which is unsurprising given ByteDance’s deep investment in TikTok’s content understanding stack. Visual quality is competitive with mid-to-upper-tier Western models, particularly on outdoor scenes, crowd dynamics, and full-body human motion.

The model handles multi-modal conditioning well: you can combine text prompts with reference images, audio cues, and motion hints to guide output. Generation speed is fast, with most clips returning in under 60 seconds.

Limitations / deal-breakers: Availability and Terms of Service vary by region, and this is the central concern for US-based buyers. Data handling policies have drawn regulatory scrutiny — enterprise buyers should audit their compliance posture carefully before deploying Seedance outputs in commercial campaigns.

Controllability lags behind Runway and Sora: there’s no motion brush, limited camera path control, and no keyframing. English-language documentation is improving but still sparse compared to Western competitors.

The editing and workflow tools are minimal — Seedance is a generation engine, not a creative suite. Customer support response times for non-Chinese-speaking users can be slow.

Pricing snapshot (as of March 2026): Credit-based pricing through the Seedance platform and ByteDance developer portal. Rates tend to undercut Western competitors by 30–50% on a per-second basis. Check the Seedance/ByteDance developer portal for current rates — pricing structure has changed multiple times.

Commercial rights & watermarking: Commercial rights depend on plan and region — the English-language ToS can be ambiguous on derivative works and distribution rights. Verify ToS carefully before using in US ad campaigns or client deliverables.

Who should use it: Creators focused on music, dance, and rhythmic content who prioritize native audio sync and competitive pricing. Independent creators comfortable navigating regional ToS differences.

Who should avoid it: Enterprise buyers with strict data sovereignty, GDPR, or SOC 2 compliance requirements. Agencies who need clear, unambiguous commercial licensing for US client work.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 8.7 |
| Motion fidelity | 8.8 |
| Temporal consistency | 8.3 |
| Controllability | 7.5 |
| Audio / lip sync | 9.0 |
| Speed & reliability | 8.2 |
| Workflow & editing | 7.0 |
| Rights & safety | 7.5 |
| Value | 8.8 |
| Composite | 8.5 |

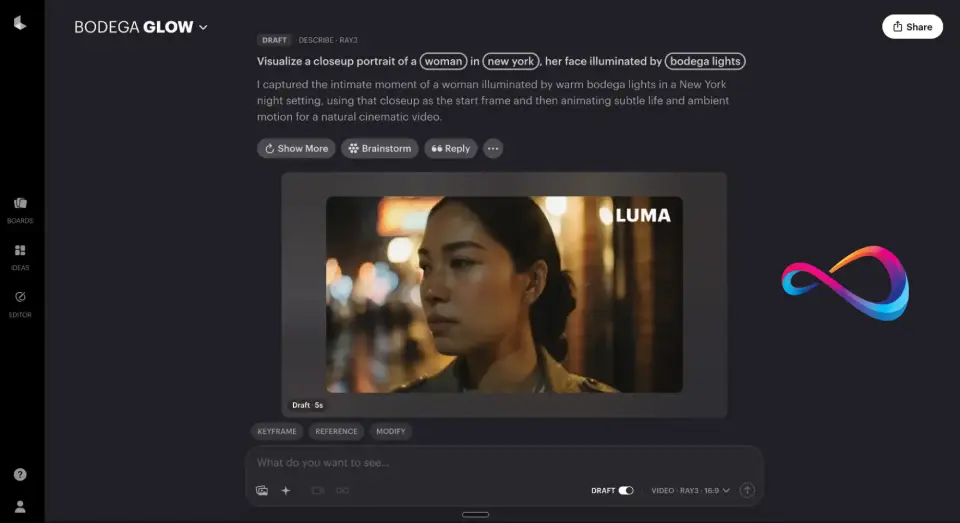

5. Luma (Ray 2)

- Best for: Stylized motion, artistic and abstract video, rapid prototyping

- What it creates: T2V, I2V

| Pros | Cons |
|---|---|
| Best stylized/non-photorealistic quality (painterly, anime, surreal) | Weak photorealistic humans (skin, hands, micro-expressions) |
| Fastest generation: clips in under 30 seconds | No native audio generation of any kind |
| Clean, intuitive Dream Machine UI + developer API | Max 20 s clips, shorter than the 30–60 s leaders |
| Generous free tier (30 clips/mo) | No V2V, no motion brush, no advanced camera controls |

Standout features (2026): Ray 2 excels in territory most competitors neglect: stylized, non-photorealistic video generation. Painterly, anime, surreal, and abstract motion styles come out looking intentional rather than artifacted.

Generation speed is a genuine differentiator — short clips often return in under 30 seconds, making Ray 2 the fastest tool for rapid creative prototyping. The Dream Machine web UI is clean and intuitive, with essentially no learning curve for non-technical users.

Keyframe support allows start-frame and end-frame conditioning, giving you control over the opening and closing compositions. The model handles camera motion smoothly on simpler scenes, and color palette consistency within a clip is strong. Luma’s API is developer-friendly, making it a solid choice for teams building generative video into apps or automated workflows.

Limitations / deal-breakers: Photorealistic humans remain a clear weakness — skin textures, facial micro-expressions, and hand anatomy are noticeably behind Veo, Sora, and Runway. There is no native audio generation of any kind, so all sound design happens in post.

Clip length tops out at 20 seconds, well short of the 60-second clips Veo 3.1 and Sora 2 offer. Camera controls are limited to basic presets; there’s no motion brush, no bezier camera paths, and no multi-point keyframing.

V2V (video-to-video) capabilities are absent. Character consistency across multiple generations is unreliable without external reference tools. The free tier, while generous at 30 clips per month, produces lower-resolution output.

Pricing snapshot (as of March 2026): Free tier (30 clips/mo, lower resolution); Standard $24/mo; Pro $99/mo with higher resolution and priority queue. Check Luma’s pricing page for current plan details.

Commercial rights & watermarking: Commercial use permitted on all paid plans. No watermark on paid plans. Free-tier outputs may carry a small watermark. No IP indemnification offered.

Who should use it: Designers, artists, social media creators, and creative directors who value speed and aesthetic variety over photorealism. Ideal for mood boards, concept exploration, and stylized brand videos.

Who should avoid it: Anyone needing realistic human subjects, native audio, clips longer than 20 seconds, or advanced camera controls. Not suitable for commercial production requiring photorealism.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 8.0 |

| Motion fidelity | 8.5 |

| Temporal consistency | 8.3 |

| Controllability | 7.8 |

| Audio / lip sync | 5.0 |

| Speed & reliability | 9.0 |

| Workflow & editing | 7.5 |

| Rights & safety | 8.5 |

| Value | 8.5 |

| Composite | 8.2 |
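The composite is not a plain average of the nine dimensions; it follows the weighted rubric described elsewhere in this guide. As a rough sanity check, the sketch below compares the unweighted mean of the Luma scores with the published composite. The function name and the idea of reporting a "gap" are illustrative choices, not part of the rubric.

```python
# Dimension scores copied from the Luma (Ray 2) table above.
luma_scores = {
    "Realism & detail": 8.0,
    "Motion fidelity": 8.5,
    "Temporal consistency": 8.3,
    "Controllability": 7.8,
    "Audio / lip sync": 5.0,
    "Speed & reliability": 9.0,
    "Workflow & editing": 7.5,
    "Rights & safety": 8.5,
    "Value": 8.5,
}
published_composite = 8.2


def unweighted_mean(scores: dict[str, float]) -> float:
    """Plain average of all dimension scores, rounded to one decimal."""
    return round(sum(scores.values()) / len(scores), 1)


plain = unweighted_mean(luma_scores)
gap = round(published_composite - plain, 1)
print(f"Unweighted mean: {plain}, published composite: {published_composite}, gap: {gap}")
```

A positive gap suggests the rubric gives less weight to a low-scoring dimension (here, audio) than an even split would. The same check works for any score table in this guide.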

6. Kling

- Best for: Short-form vertical video for TikTok, Reels, and Shorts

- What it creates: T2V, I2V, V2V

| Pros | Cons |

|---|---|

| Native 9:16 vertical video — purpose-built for TikTok/Reels/Shorts | Max 15 s clips — requires stitching for longer content |

| Strong human motion and facial expression handling | No motion brush, bezier paths, or advanced controls |

| Generous free tier (~66 clips/day) + Pro from ~$9.90/mo | Regional data-handling concerns for US enterprise |

| V2V style transfer on existing footage | Minimal editing tools — generator only |

Standout features (2026): Kling is purpose-built for the short-form vertical video workflow. The model generates native 9:16 content optimized for TikTok, Instagram Reels, and YouTube Shorts — not a cropped-down version of a 16:9 output, but a composition-aware vertical generation.

Human motion handling is a key strength: gestures, facial expressions, and full-body movement feel natural and fluid in clips up to 15 seconds. The free tier is among the most generous in the generative video space, making it genuinely accessible for individual creators who want to experiment without paying.

V2V mode supports style transfer on existing footage — useful for applying artistic looks to phone-shot content. Generation speed is fast (typically under 45 seconds), which matters for social media workflows where volume and iteration speed drive results.

Limitations / deal-breakers: The 15-second maximum clip length is Kling’s hardest constraint — anything longer requires stitching multiple generations. Audio support is partial, limited to basic SFX with no dialogue generation or synchronized speech.

Fine-grained creative controls are limited compared to Runway or Sora: there’s no motion brush, no bezier camera paths, and reference-image conditioning is basic. Kling is operated by Kuaishou (a major Chinese tech company), which raises regional data-handling considerations similar to those noted for Seedance.

US enterprise buyers should review data residency and processing terms carefully. The web UI is functional but lacks advanced editing or timeline capabilities — Kling is a generator, not a suite.
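The 15-second cap translates directly into a clip count when planning longer videos. The sketch below is a planning helper only: it assumes clips are joined end to end with no overlap or transition padding, and the function name is hypothetical.

```python
import math

KLING_MAX_CLIP_SECONDS = 15  # per-clip cap stated in this review


def clips_needed(target_seconds: float,
                 max_clip_seconds: float = KLING_MAX_CLIP_SECONDS) -> int:
    """Minimum number of generations needed to cover target_seconds."""
    if target_seconds <= 0:
        return 0
    return math.ceil(target_seconds / max_clip_seconds)


for target in (15, 30, 45, 60):
    print(f"{target} s video -> {clips_needed(target)} clip(s)")
```

In practice, budget extra generations on top of this minimum, since not every clip will be usable and stitched cuts often need trimming.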

Pricing snapshot (as of March 2026): Free tier (generous daily limit — reported at 66 clips/day; verify on platform as this has fluctuated); Pro plan approximately $9.90/mo with higher quality and priority queue. Check Kling’s pricing page for current rates.

Commercial rights & watermarking: Commercial use on paid Pro plans. No watermark on Pro tier. Free-tier outputs may carry a small watermark. Verify regional ToS for specific commercial use provisions in the US market.

Who should use it: TikTok, Reels, and Shorts creators who need fast vertical video on a budget. Social media managers producing daily content who value speed and volume over maximum fidelity.

Who should avoid it: Users who need clips longer than 15 seconds, advanced camera choreography, or detailed compositional control. Enterprise teams with strict data-residency requirements.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 8.2 |

| Motion fidelity | 8.5 |

| Temporal consistency | 8.0 |

| Controllability | 7.0 |

| Audio / lip sync | 6.5 |

| Speed & reliability | 8.8 |

| Workflow & editing | 7.0 |

| Rights & safety | 7.5 |

| Value | 9.0 |

| Composite | 8.1 |

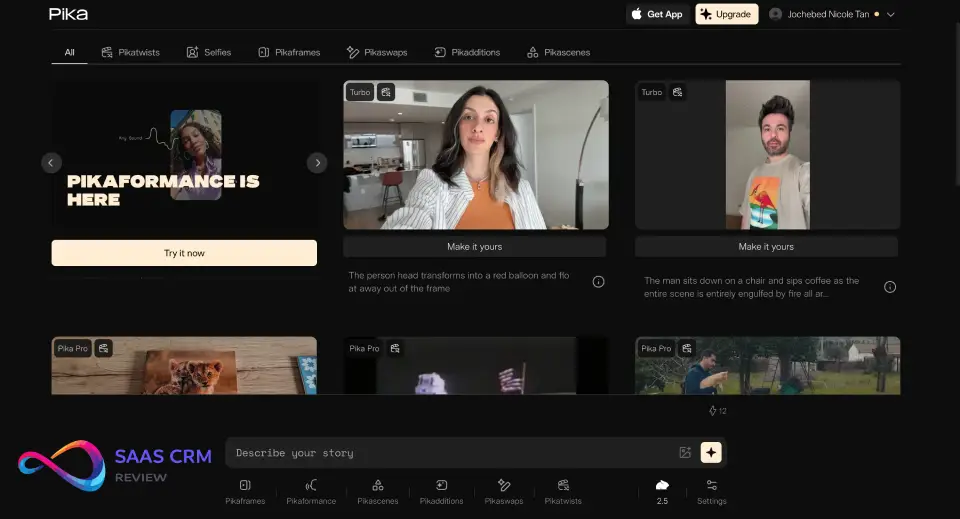

7. Pika 2.0

- Best for: Quick creative iterations, effects, and style exploration

- What it creates: T2V, I2V, V2V

| Pros | Cons |

|---|---|

| “Scenes” storyboard for multi-shot continuity | Max 10 s clips — shortest among top competitors |

| Unique creative tools: Inflate (2D→3D) and Modify (V2V) | No native audio generation |

| Fast generation + clean, approachable UI ($10/mo entry) | Photorealism trails Veo, Sora, and Runway noticeably |

Standout features (2026): Pika 2.0 introduced “Scenes” — a storyboard-like workflow for chaining clips with consistent characters and settings across shots, which addresses one of generative video’s biggest pain points: multi-shot continuity. The “Inflate” feature (image-to-3D-video) and “Modify” (V2V transformation) tools are creatively unique.

Inflate turns a 2D image into a rotating 3D-like video, while Modify lets you transform the style, lighting, or environment of existing footage. Turnaround on short clips is fast, typically under 30 seconds.

The UI is clean and approachable, with a Discord-integrated community that shares prompts and workflows. Pika handles abstract and stylized content well, making it a strong ideation tool for creative exploration and concept testing before investing in higher-fidelity tools.

Limitations / deal-breakers: Maximum clip length remains capped at 10 seconds — a significant constraint for anything beyond social snippets. Photorealism still trails Veo, Sora, and Runway noticeably, particularly on human skin texture and complex physics.

No native audio generation of any kind. Export resolution on lower tiers is limited to 720p, which is below the standard for professional delivery.

Character consistency in Scenes mode is improved but not fully reliable — expect some variation between shots. Camera controls exist but are basic compared to Runway’s motion brush and bezier paths.

Pricing snapshot (as of March 2026): Free tier (limited daily generations); Standard $10/mo (250 credits); Pro $35/mo (unlimited); Unlimited $60/mo (priority + 4K export). Check Pika’s pricing page for current plan details.

Commercial rights & watermarking: Commercial use permitted on all paid plans. No watermark on paid tiers. Free-tier outputs include a Pika watermark. No IP indemnification.

Who should use it: Creative teams experimenting with AI video, agencies running quick concept tests, and social media teams producing short-form clips. Individual creators exploring stylized video effects.

Who should avoid it: Anyone needing clips over 10 seconds, photorealistic human output, or production-resolution exports without a Pro-tier subscription.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 7.5 |

| Motion fidelity | 8.0 |

| Temporal consistency | 7.8 |

| Controllability | 8.0 |

| Audio / lip sync | 5.0 |

| Speed & reliability | 8.5 |

| Workflow & editing | 7.8 |

| Rights & safety | 8.0 |

| Value | 8.0 |

| Composite | 7.8 |

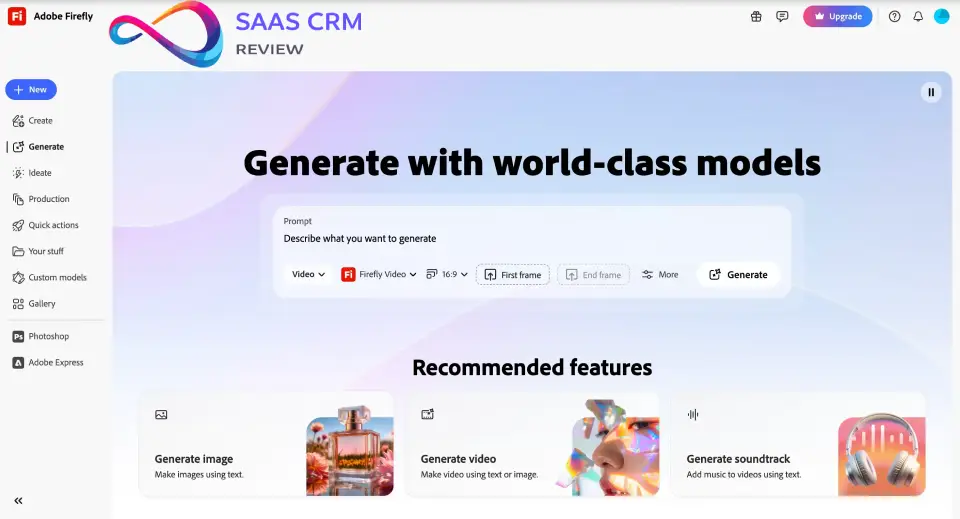

8. Adobe Firefly Video

- Best for: Brand-safe commercial production, Premiere Pro-integrated workflows

- What it creates: T2V, I2V, Editor

| Pros | Cons |

|---|---|

| Only AI video generator with full IP indemnification | Visual quality behind Veo 3.1 and Sora 2 |

| Trained exclusively on licensed/public-domain content | Max 15 s clip generation |

| Seamless Premiere Pro + After Effects integration | No native audio generation |

| C2PA Content Credentials on every output | Outputs skew generic “stock footage” aesthetics |

Standout features (2026): Firefly Video is Adobe’s answer to the question every brand manager asks: “Can we use this without getting sued?” The model is trained exclusively on Adobe Stock licensed content, publicly licensed data, and public-domain material, and Adobe provides full IP indemnification for Creative Cloud enterprise subscribers. This is the only major AI video generator offering that legal protection as of March 2026.

Integration with Premiere Pro and After Effects is seamless: generate clips inside your timeline, use “Generative Extend” to fill gaps or extend existing footage, and apply “Generative Remove” to erase objects from video.

The “Quick Cut” beta is an ambitious new feature — feed it raw footage plus a text prompt, and it generates a rough-cut edit with transitions, pacing, and shot selection handled by AI. Content Credentials (C2PA) are attached to every output, making provenance tracking automatic.

Limitations / deal-breakers: Visual quality is good but measurably behind Veo 3.1 and Sora 2 for cinematic realism. Outputs tend to look polished but generic — they skew “stock footage” rather than cinematic or editorial.

Generated clips max out at 15 seconds, well short of the 60 seconds Veo 3.1 and Sora 2 offer. There is no native audio generation, so voiceover and sound design require separate Adobe tools (Podcast, Audition).

The creative range feels constrained: Firefly Video is designed for commercial safety, and that conservatism shows in the stylistic variety of its outputs. Generative credit consumption can be high for iteration-heavy workflows within Creative Cloud’s quota system.

Pricing snapshot (as of March 2026): Included with Creative Cloud All Apps subscriptions (monthly generative credit allotment); standalone Firefly plans available for non-CC subscribers. Enterprise plans with expanded credits available. Check Adobe’s pricing page for current credit breakdowns.

Commercial rights & watermarking: Full commercial rights on paid Creative Cloud plans, with IP indemnification for Creative Cloud enterprise subscribers; this is Firefly’s unique competitive advantage. Confirm with Adobe which plan tiers the indemnification covers. Content Credentials (C2PA) attached to all outputs by default.

Who should use it: Brands, agencies, and enterprise teams where legal safety is non-negotiable. Teams already embedded in the Adobe ecosystem will get the most workflow value.

Who should avoid it: Creators prioritizing cutting-edge realism, stylistic experimentation, or cinematic narrative work. Solo creators who don’t use Adobe’s creative tools.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 7.8 |

| Motion fidelity | 7.5 |

| Temporal consistency | 8.0 |

| Controllability | 8.5 |

| Audio / lip sync | 5.5 |

| Speed & reliability | 8.5 |

| Workflow & editing | 9.0 |

| Rights & safety | 9.8 |

| Value | 7.5 |

| Composite | 7.9 |

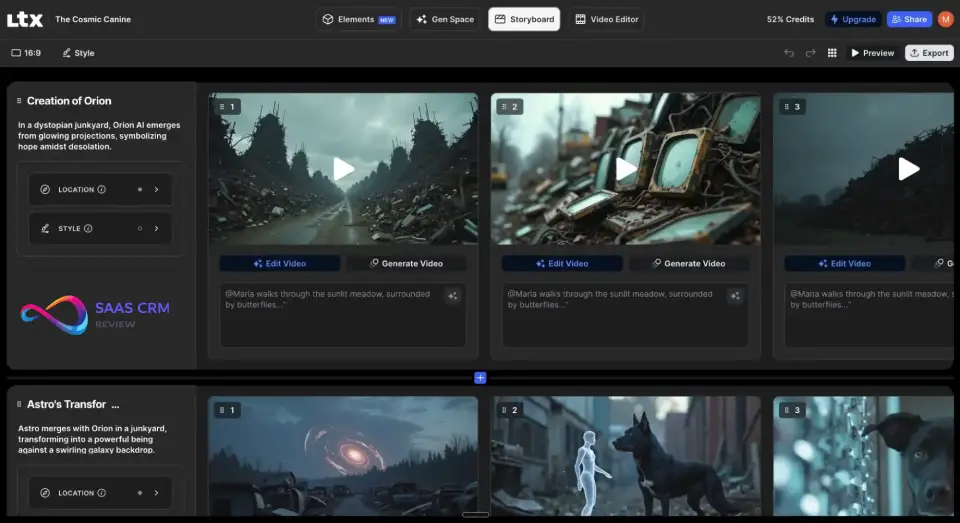

9. LTX Studio

- Best for: Storyboard-first multi-shot video creation

- What it creates: T2V, I2V, Editor

| Pros | Cons |

|---|---|

| Best storyboard-to-video pipeline for multi-shot projects | Per-frame quality a tier below Veo, Sora, Runway |

| Strong character consistency via reference images | No native audio generation |

| Built-in timeline editor — no NLE round-tripping | Longer generation times for multi-shot sequences |

| Ideal for pre-visualization, pitch decks, animatics | Storyboard structure can feel restrictive |

Standout features (2026): LTX Studio’s differentiator is its storyboard-to-video pipeline — the most structured approach to multi-shot video production in the generative space. You plan an entire sequence by writing per-shot descriptions, selecting camera angles, and uploading character reference images.

The platform then generates all shots with surprising character consistency, maintaining clothing, facial features, and color palette across the sequence. A built-in timeline editor lets you assemble, trim, and rearrange generated shots without leaving the platform.

This makes it uniquely valuable for pre-visualization, pitch decks, animatics, and short narrative projects. The character consistency engine uses reference-image conditioning and style anchoring, delivering better cross-shot coherence than most competitors’ ad-hoc approaches.

Limitations / deal-breakers: Raw per-frame visual quality is a tier below Veo, Sora, and Runway — textures can appear soft, and fine detail in faces and hands is less reliable. Generation times for multi-shot sequences are longer than single-clip tools, sometimes significantly so for sequences exceeding 8 shots.

No native audio generation — all voiceover and sound design happens in post. The platform is still actively evolving, with periodic UI changes and feature additions that can feel rough around the edges.

Export options are functional but limited compared to NLE-integrated tools. The storyboard workflow, while powerful, imposes structure that may feel restrictive for users who prefer to generate and explore freely.
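A storyboard-first workflow is easier to manage when the shot list exists as structured data before you enter it into any tool. The sketch below is a generic planning aid, not LTX Studio’s API or file format: the `Shot` class, its field names, the file names, and the warning rules are all hypothetical. The 8-shot threshold reflects the point at which this review notes longer generation times.

```python
from dataclasses import dataclass, field


@dataclass
class Shot:
    description: str    # per-shot text prompt
    camera_angle: str   # e.g. "wide", "close-up"
    reference_images: list[str] = field(default_factory=list)  # character reference files


LONG_SEQUENCE_THRESHOLD = 8  # shot count above which this review notes slower generation


def review_shot_list(shots: list[Shot]) -> list[str]:
    """Return human-readable warnings for a planned sequence."""
    warnings = []
    if len(shots) > LONG_SEQUENCE_THRESHOLD:
        warnings.append(f"{len(shots)} shots: expect longer generation times")
    for i, shot in enumerate(shots, start=1):
        if not shot.reference_images:
            warnings.append(f"Shot {i} has no character reference image")
    return warnings


# Hypothetical two-shot plan; file names are placeholders.
shots = [
    Shot("Founder walks into the workshop", "wide", ["founder_front.png"]),
    Shot("Close-up on hands assembling the product", "close-up"),
]
for warning in review_shot_list(shots):
    print(warning)
```

Checking for missing reference images up front matters because cross-shot character consistency depends on reference-image conditioning.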

Pricing snapshot (as of March 2026): Free tier (limited shots/mo); Standard $19/mo; Pro $59/mo with priority generation and higher resolution. Check LTX Studio’s pricing page for current plan details.

Commercial rights & watermarking: Commercial use on all paid plans. Free-tier outputs carry a watermark. No IP indemnification offered.

Who should use it: Creators and agencies planning multi-shot narratives, pitch decks, animatics, or pre-visualization. Ideal for teams that think in terms of shot lists and storyboards.

Who should avoid it: Users who need single-shot maximum-fidelity clips for final delivery. Anyone who finds storyboard-first workflows overly structured for their creative process.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 7.5 |

| Motion fidelity | 7.5 |

| Temporal consistency | 8.0 |

| Controllability | 8.8 |

| Audio / lip sync | 5.0 |

| Speed & reliability | 7.0 |

| Workflow & editing | 8.8 |

| Rights & safety | 7.8 |

| Value | 7.5 |

| Composite | 7.7 |

10. Haiper

- Best for: Budget-friendly short-form video for social and content marketing

- What it creates: T2V, I2V

| Pros | Cons |

|---|---|

| Generous free tier: 10 clips/day | Max 8 s clips — shortest in this guide |

| Lowest-cost paid plan: $8/mo with no watermark | Noticeably lower visual quality than mid-tier tools |

| Fast generation (~30 s) with minimal UI | No audio, no advanced controls, no reference conditioning |

| Best quality-to-cost ratio for B-roll and transitions | Not suitable as primary tool for hero content |

Standout features (2026): Haiper’s value proposition is straightforward: it’s the most accessible entry point into AI video generation. The free tier allows up to 10 clips per day, which is enough for daily experimentation, although Kling’s reported daily limit is higher.

Paid plans start at just $8/mo (Explorer tier) with no watermark, making it the lowest-cost option for producing watermark-free AI video for commercial use. Generation speed is fast, typically returning clips in under 30 seconds.

The UI is intentionally minimal — almost no learning curve, designed for users who want to type a prompt and get a usable clip without configuring camera angles, keyframes, or reference images. For straightforward social media content — backgrounds, transitions, abstract B-roll — the quality-to-cost ratio is hard to beat.

Limitations / deal-breakers: Visual quality is noticeably lower than mid- and top-tier models across every dimension: textures are softer, motion artifacts are more frequent, and human faces show more distortion. Maximum clip length is 8 seconds — the shortest in this guide.

There is no audio generation of any kind, no advanced controls (no motion brush, no keyframing, no camera paths), and no reference-image conditioning. V2V capabilities are limited and basic.

The output ceiling means Haiper works for supporting content (B-roll, transitions, background visuals) but is rarely suitable as a primary tool for hero content or client deliverables. 1080p is the maximum resolution on most plans.

Pricing snapshot (as of March 2026): Free tier (10 clips/day, watermarked, lower resolution); Explorer $8/mo (no watermark, higher resolution); Pro $24/mo (priority, 4K). Check Haiper’s pricing page for current generation limits.

Commercial rights & watermarking: Commercial use on all paid plans. Visible watermark on free tier; removed on Explorer and above. No IP indemnification. No C2PA metadata embedding.

Who should use it: Solo creators, small businesses, and social media managers testing AI video on a tight budget. Content marketers who need quick background clips or transitions.

Who should avoid it: Anyone needing production-quality output, clips longer than 8 seconds, human subjects with reliable fidelity, or any advanced generative control.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 6.8 |

| Motion fidelity | 7.0 |

| Temporal consistency | 7.2 |

| Controllability | 5.5 |

| Audio / lip sync | 4.0 |

| Speed & reliability | 8.5 |

| Workflow & editing | 6.0 |

| Rights & safety | 7.5 |

| Value | 9.0 |

| Composite | 7.3 |

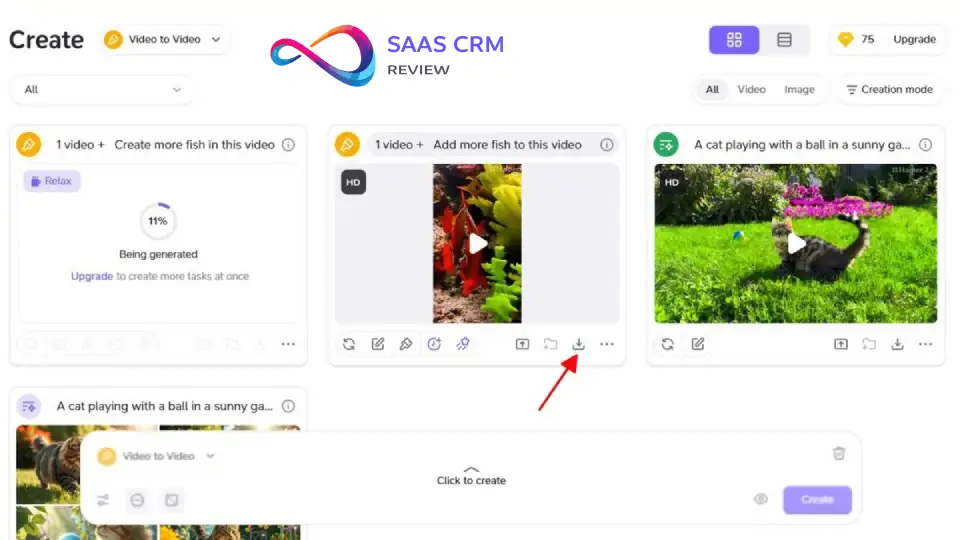

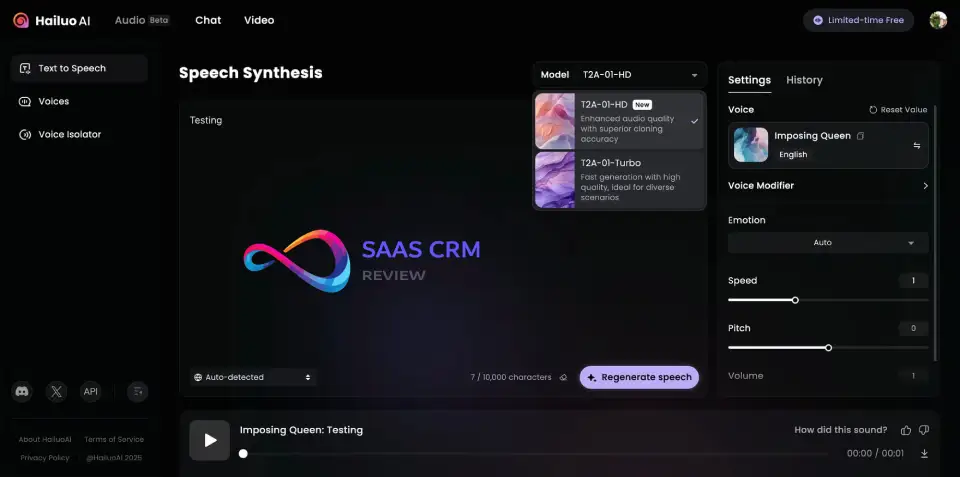

11. MiniMax Hailuo

- Best for: Experimental realism with native audio at competitive cost

- What it creates: T2V, I2V

| Pros | Cons |

|---|---|

| Photorealistic landscapes/architecture rival top-tier tools | Inconsistent human face quality in complex scenes |

| Native audio generation (ambient, SFX, basic dialogue) | No enterprise controls (SSO, audit logs, SOC 2) |

| Generous free tier + pricing undercuts Western competitors | Vague English-language commercial rights ToS |

| Active community sharing prompts and results | Minimal editing tools — generator only |

Standout features (2026): Hailuo has quietly become one of the most impressive value-to-quality players in the generative video space. Its photorealistic capabilities on landscapes, architecture, and objects rival tools costing three to five times more.

Native audio generation is a differentiator — Hailuo generates ambient sound, environmental SFX, and basic dialogue attempts synchronized to the video, a feature only Veo 3.1 and Seedance 2.0 match at this level. The free tier is generous enough for genuine experimentation, not just a teaser.

The community around Hailuo is active and transparent, sharing prompts, comparison galleries, and workaround techniques. Generation speed is competitive, with most clips returning within 60 seconds. The model handles wide landscape shots, architectural walkthroughs, and atmospheric scenes particularly well.

Limitations / deal-breakers: Quality on human faces is inconsistent in complex, multi-character scenes: close-ups can show morphing artifacts, particularly around eyes and mouths. The Terms of Service and commercial rights language are less clear than those of Western competitors.

Enterprise controls that agencies and large companies expect — SSO, audit logs, role-based access, SOC 2 compliance — are absent. English-language customer support is improving but still patchy compared to Runway or Adobe.

The editing and workflow tools are minimal: Hailuo is a generation engine, not a creative suite. You generate clips and export them — there’s no timeline, no compositing, no storyboard mode.

Pricing snapshot (as of March 2026): Free tier with daily generation limits; subscription and credit-based paid options available at rates that undercut most Western competitors. Check Hailuo’s official site for current plans.

Commercial rights & watermarking: Commercial rights on paid plans, but the English-language ToS can be vague on specific use cases. Verify current terms carefully before deploying in client-facing campaigns.

Who should use it: Experimenters, indie creators, and researchers exploring high-quality generative video at low cost. Creators producing landscape, architectural, or atmospheric content.

Who should avoid it: Enterprise buyers needing clear commercial licensing and compliance documentation. Agencies producing client-facing deliverables requiring unambiguous IP rights.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 8.5 |

| Motion fidelity | 8.2 |

| Temporal consistency | 7.8 |

| Controllability | 7.0 |

| Audio / lip sync | 8.0 |

| Speed & reliability | 7.5 |

| Workflow & editing | 6.5 |

| Rights & safety | 6.8 |

| Value | 8.8 |

| Composite | 8.0 |

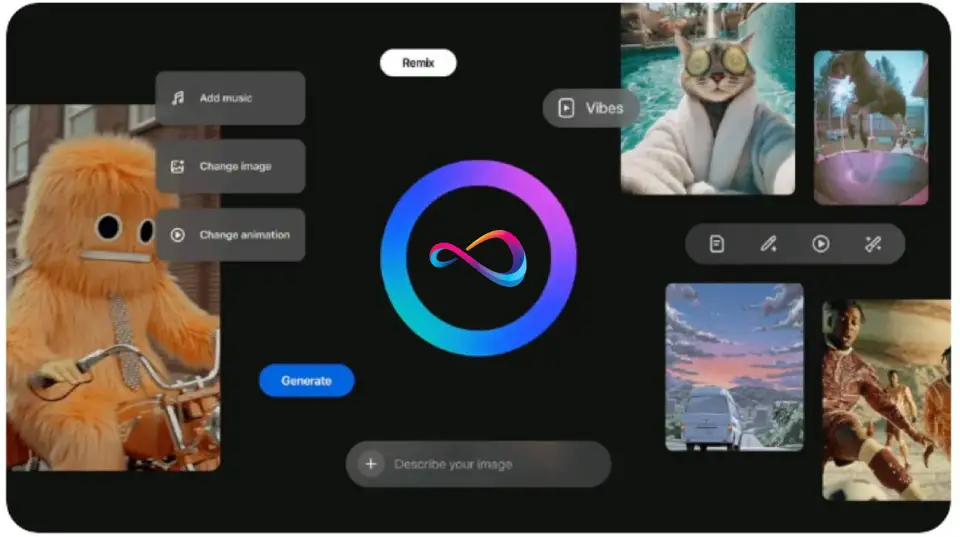

12. Meta Vibes

- Best for: Social-first video generation within the Meta ecosystem

- What it creates: T2V, I2V

| Pros | Cons |

|---|---|

| Zero context-switching for Meta ad campaigns | Outputs designed primarily for Meta platforms only |

| Native audio generation (ambient + music) | No motion brush, keyframing, or advanced controls |

| Auto AI provenance metadata for compliance | Quality below professional/cinematic tier |

| Lowest friction for small businesses on Meta | Feature set depends on Meta’s evolving strategy |

Standout features (2026): Meta Vibes integrates generative video directly into Facebook, Instagram, and WhatsApp creative tools, making it the lowest-friction AI video generator for businesses already running Meta ad campaigns. The key value proposition is zero context-switching: you create AI video content within the same platform where you publish and promote it.

Native audio generation produces ambient sound and music tracks synced to the video. Outputs are optimized for short-form social formats — Stories, Reels, and feed posts — with automatic aspect ratio handling.

AI provenance metadata is attached to all outputs automatically, keeping you compliant with Meta’s evolving disclosure policies. For small businesses running Meta ads without a dedicated creative team, Vibes dramatically lowers the barrier to producing video ad content.

Limitations / deal-breakers: Tight Meta ecosystem integration is both the product’s strength and its most significant constraint. Outputs are designed primarily for use within Meta’s platforms — exporting for YouTube, your website, or other channels may be restricted or unsupported.

Creative control is intentionally minimal, designed for casual users and small business owners, not professional video producers. There’s no motion brush, no keyframing, no camera path controls, and limited prompt refinement options.

Video quality is competitive for social consumption but falls short of tools like Veo, Sora, or Runway for professional or cinematic use. The platform’s availability and feature set can shift based on Meta’s broader product strategy, making long-term workflow dependency risky.

Pricing snapshot (as of March 2026): Available through Meta’s creative and advertising tools; some features are tied to active Meta advertising spend. Check the Meta for Business portal for current access details.

Commercial rights & watermarking: Permitted for use in Meta ad campaigns and organic social posting on Meta platforms. An AI-generated label is automatically applied to all outputs per Meta’s content labeling policy.

Who should use it: Social media marketers, small business owners running Meta ads, and teams who want to produce quick video content without leaving the Meta advertising ecosystem.

Who should avoid it: Professional video producers, agencies needing platform-agnostic output, or anyone requiring advanced generative controls.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 7.8 |

| Motion fidelity | 7.5 |

| Temporal consistency | 7.5 |

| Controllability | 5.5 |

| Audio / lip sync | 7.5 |

| Speed & reliability | 8.5 |

| Workflow & editing | 6.5 |

| Rights & safety | 8.0 |

| Value | 8.0 |

| Composite | 7.5 |

AI Avatar / Spokesperson / Training Platforms

These platforms create videos featuring AI-generated human presenters (avatars). They’re built for training, marketing, and internal communications — not cinematic scenes.

13. Synthesia

- Best for: Enterprise training videos, onboarding, and corporate communications

- What it creates: Avatar, T2V

| Pros | Cons |

|---|---|

| 230+ stock avatars, 140+ language/voice combos | “Uncanny valley” on close-up shots |

| SCORM + xAPI export for LMS; SOC 2 Type II | Per-seat Enterprise pricing expensive at scale |

| Mature collaboration: review workflows, approval chains | Limited to presenter format — no cinematic scenes |

| Script-to-video in under 1 hour | Gesture variety limited vs. real presenters |

Standout features (2026): Synthesia is the market leader in AI avatar video for enterprise, and the gap has widened in 2026. The platform offers 230+ stock avatars with diverse demographics and professional presentation styles. Custom avatar creation requires only a short studio recording session.

140+ language and voice combinations enable true global content deployment from a single script. SCORM and xAPI export integrates directly with major LMS platforms (Cornerstone, Docebo, TalentLMS), making Synthesia purpose-built for L&D workflows.

SOC 2 Type II compliance, SSO support, and role-based access controls satisfy enterprise security requirements. Collaboration features are mature: review workflows, approval chains, version history, and team commenting are all built in. The script-to-video pipeline is extremely efficient — an L&D team can produce a complete training module in under an hour.

Limitations / deal-breakers: Avatars, while improving significantly, still exhibit visible “uncanny valley” characteristics in close-up shots — micro-expressions, blinking patterns, and mouth movements can feel slightly artificial to attentive viewers. The platform is purpose-built for training and corporate communications, not for cinematic or entertainment content.

Per-seat pricing on Enterprise plans gets expensive at scale — organizations with 50+ content creators should negotiate volume terms carefully. Template-based workflows are efficient but constraining for teams wanting to deviate from the standard presenter-plus-slides format.

Gesture variety for avatars remains limited compared to real human presenters. Custom avatar quality also depends heavily on the source recording conditions.

Pricing snapshot (as of March 2026): Starter $29/mo (limited features); Creator $89/mo (full feature set, individual use); Enterprise (custom pricing, per seat, includes SSO, advanced analytics). Check Synthesia’s pricing page for current plan details.

Commercial rights & watermarking: Full commercial rights on all paid plans for internal and external use. Enterprise plans include data processing agreements. No watermark on any paid tier.

Who should use it: L&D teams, HR departments, and global enterprises that need to produce multilingual training and onboarding content at scale. Compliance teams producing mandatory regulatory training.

Who should avoid it: Creators needing cinematic quality or generative scenes. Marketing teams wanting stylistic flexibility beyond the avatar-presenter format.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 8.0 |

| Motion fidelity | 7.8 |

| Temporal consistency | 8.5 |

| Controllability | 8.5 |

| Audio / lip sync | 9.0 |

| Speed & reliability | 9.0 |

| Workflow & editing | 8.8 |

| Rights & safety | 9.0 |

| Value | 7.5 |

| Composite | 8.4 |

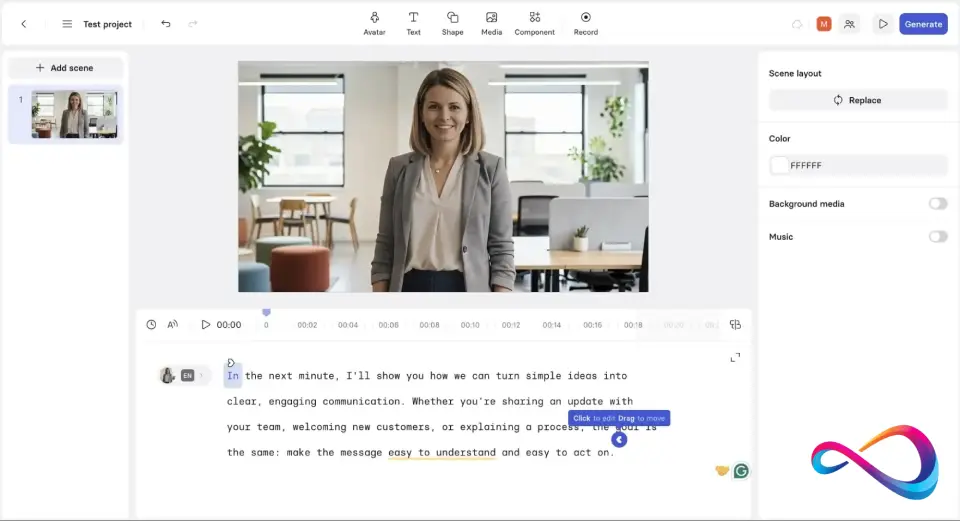

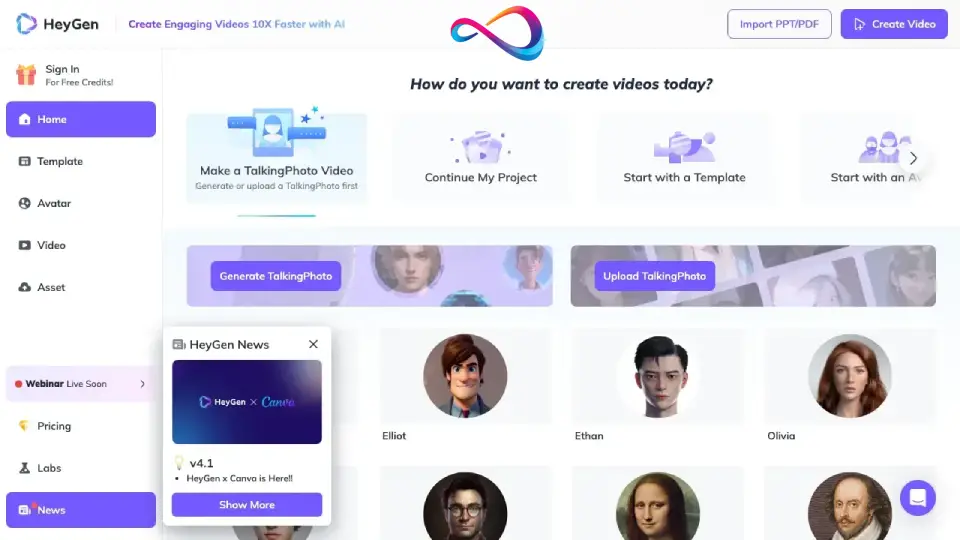

14. HeyGen

- Best for: Avatar-based marketing videos, video translation, and localization

- What it creates: Avatar, T2V

| Pros | Cons |

|---|---|

| Video Translate: lip-sync re-rendering in 40+ languages | Per-minute pricing can create cost surprises at volume |

| 175+ avatars + Instant Avatar from 2-minute recording | Most realistic avatars locked behind Enterprise plan |

| Interactive Avatar for real-time conversations | Not a scene generator — avatar/presenter format only |

| Production-ready API for marketing automation | Video Translate quality varies by language pair |

Standout features (2026): HeyGen’s defining feature is “Video Translate” — a tool that takes existing video of a real speaker, translates the content into 40+ languages, and re-renders the speaker’s lip movements to match the translated audio, all while preserving the original speaker’s voice timbre and tone through voice cloning. The result is remarkably convincing cross-language video.

Beyond translation, HeyGen offers 175+ stock avatars with strong customization options. “Instant Avatar” lets you create a custom digital twin from a 2-minute recording, and “Interactive Avatar” enables real-time avatar-driven conversations for customer support and lead qualification.

The API is well-documented and production-ready, enabling integration into marketing automation workflows, CRM-triggered video email campaigns, and product-led onboarding sequences.

Limitations / deal-breakers: Like all avatar platforms, quality degrades on spontaneous gestures and emotional range — avatars work best for structured, scripted content. Pricing scales with minutes produced, which can create cost surprises for teams producing high volumes.

The most realistic and customizable avatars are locked behind the Enterprise plan. Custom avatar creation, while fast, requires consent workflows and identity verification to prevent unauthorized deepfake use — a necessary friction but one that adds onboarding time.

Video Translate quality varies by language pair, with major languages (English, Spanish, Mandarin) performing better than lower-resource languages.
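Because pricing scales with minutes produced, localization multiplies cost quickly. The sketch below shows the arithmetic under two stated assumptions: that each translated version counts as newly produced minutes, and that billing is a flat per-minute rate. The rate used is a placeholder, not a HeyGen price, and both function names are hypothetical.

```python
def localized_minutes(source_minutes: float, target_languages: int) -> float:
    """Total rendered minutes when every source video is translated into each target language."""
    return source_minutes * target_languages


def estimated_cost(total_minutes: float, rate_per_minute: float) -> float:
    """Cost at a flat per-minute rate; rate_per_minute is a placeholder, not a HeyGen price."""
    return round(total_minutes * rate_per_minute, 2)


# Hypothetical campaign: 12 minutes of source video, 8 target languages,
# and an assumed rate of 1.50 per minute (placeholder value).
minutes = localized_minutes(12, 8)
print(minutes, estimated_cost(minutes, 1.50))
```

Substitute the credit or minute allocation from your actual plan before using figures like these in a budget.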

Pricing snapshot (as of March 2026): Creator $29/mo (limited credits); Business $89/mo (expanded credits, more avatars); Enterprise (custom pricing, custom avatars, dedicated support). Check HeyGen’s pricing page for current credit allocations.

Commercial rights & watermarking: Full commercial rights on all paid plans. Enterprise plans include custom avatar consent workflows and identity verification for voice/face cloning. No watermark on paid plans.

Who should use it: Marketing teams running multilingual campaigns, agencies creating localized ad content at scale, and companies needing spokesperson videos across markets without re-shooting.

Who should avoid it: Users needing fully generative cinematic scenes — HeyGen is an avatar platform, not a scene generator. Teams on tight budgets where per-minute pricing could escalate.

Score breakdown:

| Dimension | Score |

|---|---|

| Realism & detail | 8.2 |

| Motion fidelity | 8.0 |

| Temporal consistency | 8.5 |

| Controllability | 8.5 |

| Audio / lip sync | 9.2 |

| Speed & reliability | 8.8 |

| Workflow & editing | 8.5 |

| Rights & safety | 8.8 |

| Value | 7.8 |

| Composite | 8.6 |

15. DeepBrain AI

- Best for: AI news anchors, training modules, and kiosk-style interactive presentations

- What it creates: Avatar, T2V

| Pros | Cons |

|---|---|

| High-fidelity news-anchor and presenter avatars | Smaller avatar library than Synthesia or HeyGen |

| Real-time avatar: kiosks, virtual receptionists, live Q&A | No full-body or walking avatars |

| Built-in editor with lower-thirds, backgrounds, transitions | Steep Starter→Pro price jump ($30→$225/mo) |

| 80+ language TTS | No NLE integrations (Premiere, After Effects) |

Standout features (2026): DeepBrain AI carves a niche around high-fidelity “talking head” formats — news anchors, training instructors, and interactive kiosk presenters. The avatars are optimized for upper-body shots with natural head movement, eye contact, and professional presentation posture.

The AI Studios platform includes a built-in editor with scene layouts, lower-third graphics, background customization, and transition effects — so you can produce broadcast-ready content without an external editor.

The standout differentiator is real-time avatar generation: DeepBrain powers interactive kiosk experiences, virtual receptionists, and live AI presenters that respond to user input in real time. Multilingual TTS covers 80+ languages, and voice naturalness continues to improve.

Limitations / deal-breakers: The avatar library is smaller than Synthesia’s or HeyGen’s — fewer options for demographic diversity. Motion range is limited to seated and standing presenter formats: walking, full-body gestures, and physical interaction with objects are not supported.

NLE integrations (Premiere, After Effects) are absent, so you either work within AI Studios or export. In a side-by-side comparison, DeepBrain’s video translation features are less mature than HeyGen’s.

The price jump from Starter ($30/mo) to Pro ($225/mo) is steep, and the Starter tier is restrictive enough that most professional users will need Pro. SCORM export for LMS delivery is available but less mature than Synthesia’s or Colossyan’s implementation.

Pricing snapshot (as of March 2026): Starter $30/mo (limited minutes, basic avatars); Pro $225/mo (expanded library, priority rendering, interactive features); Enterprise (custom pricing, kiosk deployment). Check DeepBrain AI’s pricing page for current plan details.
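
To put the Starter-to-Pro gap in budget terms, the arithmetic can be sketched as below. This is a minimal illustration using only the list prices quoted above; the `tier_jump` helper is a hypothetical name, and it ignores annual-billing discounts or credit differences, which this guide does not specify.

```python
def tier_jump(lower_monthly: float, higher_monthly: float) -> dict:
    """Compare two monthly plan prices (both in the same currency)."""
    if lower_monthly <= 0:
        raise ValueError("lower_monthly must be positive")
    return {
        # How many times more expensive the higher tier is.
        "multiple": higher_monthly / lower_monthly,
        # Extra spend over twelve months of monthly billing.
        "extra_per_year": (higher_monthly - lower_monthly) * 12,
    }


# DeepBrain AI list prices quoted in this guide: Starter $30/mo, Pro $225/mo.
jump = tier_jump(30, 225)
print(f"{jump['multiple']:.1f}x, +${jump['extra_per_year']:,.0f}/yr")
```

At the quoted prices, Pro costs 7.5 times as much as Starter, or $2,340 more per year on monthly billing.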

Commercial rights & watermarking: Full commercial rights on all paid plans. No watermark on paid tiers. Enterprise plans include deployment licensing for kiosk and interactive installations.

Who should use it: News desks producing AI-anchored content, companies deploying interactive kiosk or virtual receptionist experiences, and training producers who prioritize broadcast-quality upper-body presentation.

Who should avoid it: Users who need walking or physically dynamic avatars. Teams that require large avatar libraries. Budget-conscious buyers who find the Starter-to-Pro jump prohibitive.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 7.5 |
| Motion fidelity | 7.0 |
| Temporal consistency | 8.0 |
| Controllability | 7.5 |
| Audio / lip sync | 8.5 |
| Speed & reliability | 8.0 |
| Workflow & editing | 7.5 |
| Rights & safety | 8.0 |
| Value | 7.0 |
| Composite | 7.6 |
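
The composite presumably comes from this guide’s weighted rubric, which this section does not restate. As a rough cross-check that assumes nothing about those weights, the sketch below computes the unweighted mean of the nine DeepBrain AI dimension scores. The `composite` helper and its optional `weights` argument are illustrative only, not the guide’s actual formula.

```python
from typing import Mapping, Optional

# Dimension scores copied from the DeepBrain AI score breakdown.
DEEPBRAIN_SCORES = {
    "Realism & detail": 7.5,
    "Motion fidelity": 7.0,
    "Temporal consistency": 8.0,
    "Controllability": 7.5,
    "Audio / lip sync": 8.5,
    "Speed & reliability": 8.0,
    "Workflow & editing": 7.5,
    "Rights & safety": 8.0,
    "Value": 7.0,
}


def composite(scores: Mapping[str, float],
              weights: Optional[Mapping[str, float]] = None) -> float:
    """Weighted average of dimension scores; equal weights when none are given."""
    if weights is None:
        weights = {name: 1.0 for name in scores}  # assumption: equal weighting
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


print(f"{composite(DEEPBRAIN_SCORES):.2f}")  # 7.67
```

The unweighted mean of 7.67 sits close to the published 7.6; any remaining gap would come from the rubric’s weighting or rounding. Passing your own `weights` lets you re-rank tools by the dimensions that matter to your team.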

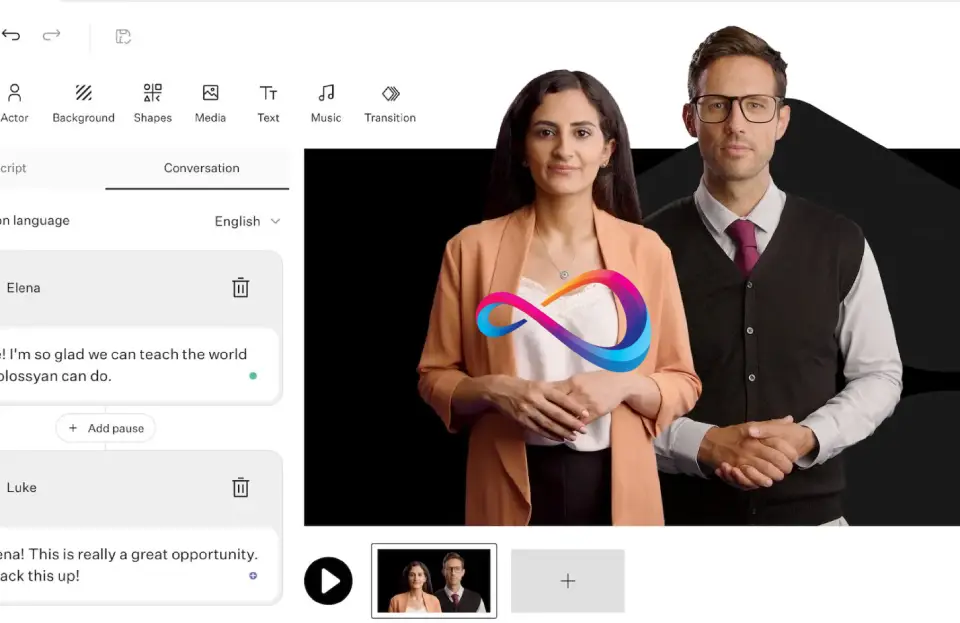

16. Colossyan

- Best for: Compliance training, L&D at scale, workplace learning

- What it creates: Avatar, T2V

| Pros | Cons |
|---|---|
| Scenario branching for choose-your-own-path training | Not designed for marketing or creative content |
| Built-in quiz/assessment creation + SCORM/xAPI export | Avatar realism below Synthesia and HeyGen |
| 70+ language support with natural TTS | Template-based: limited customization beyond the template structure |
| Workspace collaboration: approval chains, version control | Per-seat pricing can scale quickly |

Standout features (2026): Colossyan focuses on the L&D use case more explicitly than any competitor in this guide, and that focus shows in its feature set. Scenario branching lets you build choose-your-own-path training modules where learners make decisions that affect the video’s direction, which can improve engagement in compliance and soft-skills training.
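
Conceptually, a branching module is a small directed graph of scenes connected by learner choices. The sketch below is a generic illustration of that idea for planning and review purposes; the node names, the `SCENARIO` structure, and both helpers are hypothetical and do not reflect Colossyan’s internal format or API.

```python
# Hypothetical scenario graph: each scene has narration and labelled choices
# that point to the next scene. Scenes with no choices are endings.
SCENARIO = {
    "intro": {
        "narration": "A colleague forwards a customer list to a personal inbox.",
        "choices": {"report": "reported", "ignore": "ignored"},
    },
    "reported": {"narration": "You notify the data protection officer.", "choices": {}},
    "ignored": {
        "narration": "A week later, the customer list is still unaccounted for.",
        "choices": {"escalate_late": "late_report", "stay_silent": "audit_finding"},
    },
    "late_report": {"narration": "You report late; remediation begins.", "choices": {}},
    "audit_finding": {"narration": "An audit uncovers the leak.", "choices": {}},
}


def play(scenario: dict, start: str, choices: list) -> list:
    """Follow a learner's choices and return the scenes visited, in order."""
    path, node = [start], start
    for choice in choices:
        options = scenario[node]["choices"]
        if choice not in options:
            raise ValueError(f"{choice!r} is not available in scene {node!r}")
        node = options[choice]
        path.append(node)
    return path


def all_paths(scenario: dict, start: str) -> list:
    """Enumerate every route to an ending (assumes the graph has no cycles)."""
    options = scenario[start]["choices"]
    if not options:
        return [[start]]
    return [[start] + rest
            for nxt in options.values()
            for rest in all_paths(scenario, nxt)]


print(play(SCENARIO, "intro", ["ignore", "escalate_late"]))
print(len(all_paths(SCENARIO, "intro")), "distinct learner paths to review")
```

Listing every path up front is a useful planning step: each extra decision point multiplies the scenes that need scripting and review.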

Built-in quiz and assessment creation means you can embed knowledge checks directly in the video without external tools. SCORM and xAPI export integrates cleanly with major LMS platforms.
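
For teams evaluating xAPI tracking, it helps to know what a statement looks like on the LMS side. The sketch below builds a minimal statement in the actor/verb/object shape defined by the xAPI specification, using the ADL `passed` and `failed` verbs. The learner details and activity IRI are placeholders, `quiz_statement` is a hypothetical helper, and the exact statements Colossyan emits are not documented in this guide.

```python
import json


def quiz_statement(learner_email: str, learner_name: str,
                   activity_id: str, scaled_score: float, passed: bool) -> dict:
    """Build a minimal xAPI statement for an embedded knowledge check."""
    # xAPI requires scaled scores to fall between -1 and 1 inclusive.
    if not -1.0 <= scaled_score <= 1.0:
        raise ValueError("scaled_score must be between -1 and 1")
    verb = "passed" if passed else "failed"
    return {
        "actor": {
            "objectType": "Agent",
            "name": learner_name,
            "mbox": f"mailto:{learner_email}",
        },
        "verb": {
            "id": f"http://adlnet.gov/expapi/verbs/{verb}",
            "display": {"en-US": verb},
        },
        "object": {"objectType": "Activity", "id": activity_id},
        "result": {
            "score": {"scaled": scaled_score},
            "success": passed,
            "completion": True,
        },
    }


# Placeholder learner and activity IRI, for illustration only.
statement = quiz_statement(
    "learner@example.com", "Example Learner",
    "https://example.com/activities/data-privacy-quiz", 0.8, True,
)
print(json.dumps(statement, indent=2))
```

When comparing vendors, ask for a sample statement export and check that it carries the `result` fields your LMS reports rely on.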

The template library is designed specifically for workplace training: compliance, onboarding, safety, DEI, and customer service scenarios are well covered. Multilingual avatars with natural-sounding TTS support 70+ languages. Workspace collaboration features — approval chains, version control, team commenting — make Colossyan practical for L&D teams with review processes.

Limitations / deal-breakers: Colossyan is not designed for marketing, creative, or entertainment content — the avatar styles and template structures are explicitly enterprise-L&D. Avatar realism is a step below Synthesia and HeyGen, particularly in facial expressiveness and natural gestural range.

Per-seat enterprise pricing follows the standard pattern for this category and can scale quickly for large organizations. Customization beyond the template structure is limited: if your training design doesn’t fit Colossyan’s frameworks, the tool will feel constraining.

The platform’s focus on L&D means features like video translation, marketing templates, and social-format export receive less development attention than they do on competing platforms.

Pricing snapshot (as of March 2026): Starter $35/mo (basic features, limited minutes); Pro plans with expanded minutes; Enterprise (custom pricing, per seat). Check Colossyan’s pricing page for current plan details.

Commercial rights & watermarking: Full commercial rights on all paid plans for training and distribution. Enterprise data processing agreements available. No watermark on paid plans.

Who should use it: L&D managers, compliance teams, and HR departments producing mandatory training at scale. Organizations that need scenario-based interactive training with built-in assessments.

Who should avoid it: Anyone not in the L&D or training space. Marketing teams and creative agencies will find the platform too narrowly focused.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 7.5 |
| Motion fidelity | 7.2 |
| Temporal consistency | 8.0 |
| Controllability | 8.0 |
| Audio / lip sync | 8.2 |
| Speed & reliability | 8.2 |
| Workflow & editing | 8.5 |
| Rights & safety | 8.5 |
| Value | 7.5 |
| Composite | 7.8 |

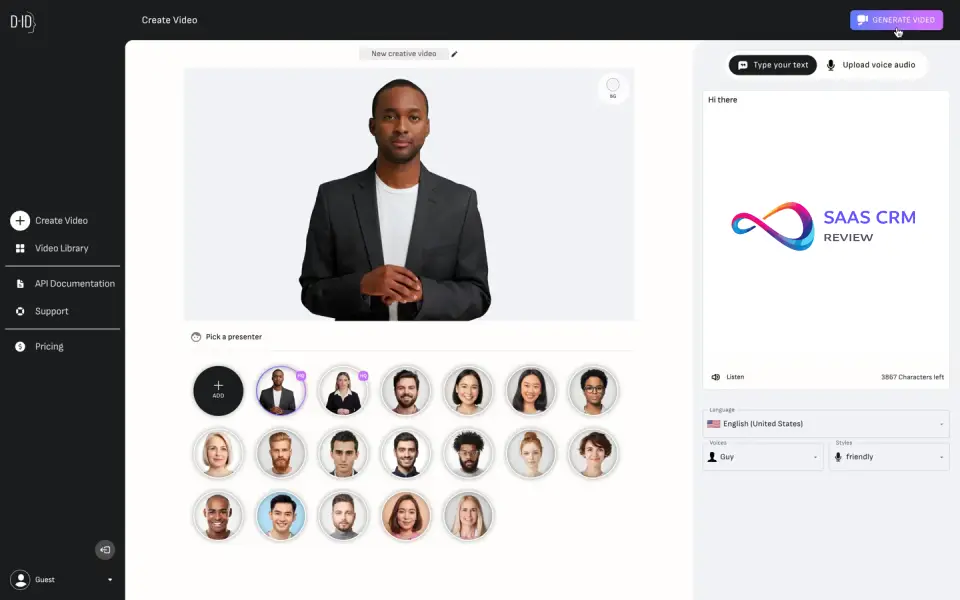

17. D-ID

- Best for: Talking photos, animated headshots, quick avatar snippets

- What it creates: Avatar, I2V

| Pros | Cons |
|---|---|
| Turn any still photo into a talking, animated video | Quality degrades on clips over 1 minute |
| Clean developer API for embedding avatar features | Head/face motion only: no full-body movement |
| Low entry price ($5.90/mo Lite) | “Deepfake” perception can concern compliance teams |
| Works with photos, illustrations, and AI portraits | Falling behind full avatar platforms in feature scope |

Standout features (2026): D-ID’s core capability is turning a single still photo into a talking, animated video: the face moves, the lips sync to audio, and the head makes natural micro-movements. This “talking photo” approach is useful for social media content, personalized sales outreach, educational content featuring illustrated characters, and customer engagement campaigns.

The developer API is clean and well-documented, making D-ID a popular choice for teams building avatar-based features into apps, websites, or chatbots. “Creative Reality Studio” provides a web-based editor for non-developers.

Lip-sync quality on short segments (under 60 seconds) is decent, and the platform supports a range of input image types — photos, illustrations, AI-generated portraits. Pricing starts lower than most avatar competitors, making it accessible for experimentation.

Limitations / deal-breakers: Quality degrades noticeably on clips longer than one minute — temporal drift in facial movements becomes visible. Motion is strictly limited to head and face; there is no full-body movement, no gestures, no walking, and no physical interaction with objects.
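
One practical workaround for the one-minute ceiling is to split longer scripts into segments that each narrate in 60 seconds or less, then stitch the rendered clips in an editor. The sketch below splits at sentence boundaries using an assumed speaking rate of 150 words per minute. That rate is a rough planning assumption rather than a D-ID figure, and `split_script` is a hypothetical helper that does not call D-ID’s API.

```python
import re


def split_script(text: str, max_seconds: float = 60.0,
                 words_per_minute: float = 150.0) -> list:
    """Split a script into chunks whose estimated narration time fits max_seconds.

    Splits only at sentence boundaries, so a single sentence longer than the
    limit is kept whole and will exceed it.
    """
    max_words = int(max_seconds * words_per_minute / 60.0)
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current, count = [], [], 0
    for sentence in sentences:
        n = len(sentence.split())
        if n == 0:
            continue  # skip empty fragments (for example, an empty script)
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


script = " ".join(["This sentence has exactly six words."] * 60)  # 360 words
for i, chunk in enumerate(split_script(script), start=1):
    print(i, len(chunk.split()), "words")
```

Tune `words_per_minute` to the voice you actually use; slower voices need a lower value to stay under the limit.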

The “deepfake” association makes some brands and compliance teams uncomfortable, even when the use is legitimate. Enterprise features (SSO, audit logs, team roles) are less mature than Synthesia’s or HeyGen’s.

The gap between D-ID’s talking-photo approach and full avatar platforms has widened — D-ID feels increasingly like a specialized tool rather than a comprehensive avatar video platform. Custom voice cloning is available but less refined than HeyGen’s implementation.

Pricing snapshot (as of March 2026): Free trial (limited); Lite $5.90/mo; Pro $26/mo (API access); Advanced $87/mo (priority rendering); Enterprise (custom). Check D-ID’s pricing page for current plans.

Commercial rights & watermarking: Commercial use on all paid plans. Watermark on free/trial tier; removed on paid plans. No IP indemnification.

Who should use it: Social media marketers producing personalized content, educators bringing historical or illustrated characters to life, and developers building avatar-based interactions into apps.

Who should avoid it: Enterprises needing full-body avatars or long-form training modules. Brands uncomfortable with the deepfake association.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 7.2 |
| Motion fidelity | 6.8 |
| Temporal consistency | 7.5 |
| Controllability | 7.0 |
| Audio / lip sync | 8.0 |
| Speed & reliability | 8.0 |
| Workflow & editing | 7.0 |
| Rights & safety | 7.5 |
| Value | 7.8 |
| Composite | 7.4 |

Marketing & Repurposing Suites

These platforms combine AI video generation with editing, templates, and publishing tools. They’re optimized for speed-to-publish, not raw generative quality.

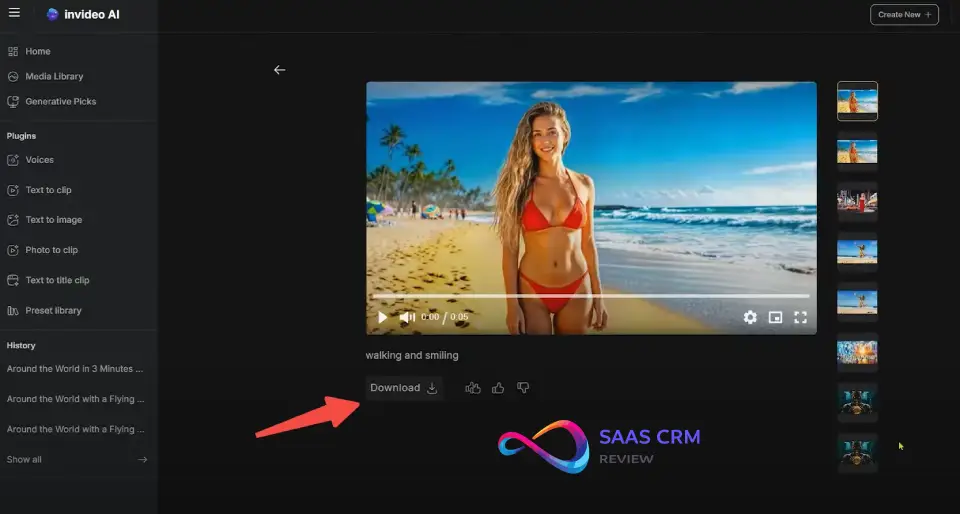

18. InVideo AI

- Best for: Fast marketing video creation from a text prompt

- What it creates: T2V, Editor, Templates

| Pros | Cons |
|---|---|
| Type a prompt (or paste a blog post) → finished video | Based on stock footage assembly, not true generative video |
| Built-in script, voiceover, stock footage, and music | Template constraints limit creative differentiation |
| 50+ language TTS with natural-sounding voices | Quality varies by template and topic coverage |
| Up to 50 videos/mo on Plus ($25/mo) | Audio is TTS-only: no ambient sound or SFX |

Standout features (2026): InVideo AI is the closest thing in this guide to a “describe it and get a finished video” experience. You type a single text prompt — or paste a blog post, product description, or campaign brief — and InVideo generates a complete video: script, voiceover, stock footage selection, transitions, captions, and background music.

The output is immediately editable in a timeline editor where you can swap clips, change voiceover, adjust text overlays, and modify pacing. The template library covers a wide range of marketing formats: social ads, product explainers, listicles, testimonial compilations, and YouTube intros.

Multilingual voiceover supports 50+ languages with natural-sounding TTS. The AI script generation is surprisingly good for marketing copy: it produces structured scripts with hooks, benefit statements, and CTAs that need minimal editing. For teams producing 10–50 marketing videos per month, InVideo’s speed-to-publish is unmatched among the tools in this guide.

Limitations / deal-breakers: Outputs lean heavily on curated stock footage rather than true generative video: InVideo assembles and edits existing media instead of creating novel visual content from scratch. As a result, its creative ceiling is lower than that of tools like Runway, Sora, or Veo.

Advanced users will bump into template constraints quickly — the structures are efficient but rigid. Quality varies significantly by template and stock footage availability in your topic area.

The platform is better suited for volume production of “good enough” marketing content than for crafting distinctive creative work. Audio generation is TTS-only — no native ambient sound or SFX generation.

Pricing snapshot (as of March 2026): Free tier (watermarked, limited); Plus $25/mo (50 videos/mo, no watermark); Max $60/mo (unlimited videos, priority rendering). Check InVideo’s pricing page for current plan details.
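
For volume-driven teams, the useful number is effective cost per finished video. The sketch below works that out from the list prices above. `cost_per_video` is a hypothetical helper, and the monthly volumes are assumptions you should replace with your own.

```python
def cost_per_video(monthly_price: float, videos_per_month: int) -> float:
    """Effective cost of one video at a given monthly output."""
    if videos_per_month <= 0:
        raise ValueError("videos_per_month must be positive")
    return monthly_price / videos_per_month


PLUS_PRICE, PLUS_CAP = 25.0, 50   # Plus: $25/mo, 50 videos/mo
MAX_PRICE = 60.0                  # Max: $60/mo, unlimited videos

print(f"Plus at cap: ${cost_per_video(PLUS_PRICE, PLUS_CAP):.2f}/video")
for volume in (60, 120, 200):     # assumed monthly volumes on Max
    print(f"Max at {volume}/mo: ${cost_per_video(MAX_PRICE, volume):.2f}/video")
```

At the quoted prices, Plus works out to $0.50 per video when fully used, and Max only drops below that figure once you publish more than 120 videos a month. Between 51 and 120 videos, Max is a capacity purchase rather than a per-video saving.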

Commercial rights & watermarking: Commercial use on all paid plans. Stock footage licensing included. Watermark on free tier; removed on Plus and above.

Who should use it: Small businesses, solo marketers, and content teams producing high volumes of marketing videos without dedicated video editors. Ideal for social media ads and repurposed blog-to-video content.

Who should avoid it: Creative professionals needing original, brand-differentiating video. Anyone requiring true generative video rather than assembled stock content.

Score breakdown:

| Dimension | Score |
|---|---|
| Realism & detail | 7.0 |
| Motion fidelity | 6.5 |
| Temporal consistency | 7.5 |
| Controllability | 7.0 |
| Audio / lip sync | 7.5 |
| Speed & reliability | 8.5 |
| Workflow & editing | 8.0 |
| Rights & safety | 7.8 |
| Value | 8.0 |
| Composite | 7.5 |

19. VEED.io

- Best for: Social media video editing, repurposing, and subtitling

- What it creates: Editor, T2V (limited), Subtitles

| Pros | Cons |

|---|---|